GroupedFlux

Related Docs: object GroupedFlux | package publisher

Emit a single boolean true if all values of this sequence match the given predicate.

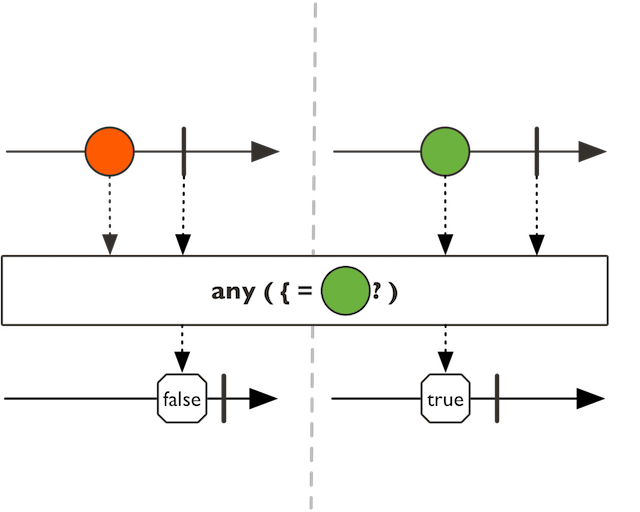

Emit a single boolean true if any of the values of this Flux sequence match the predicate.

The implementation uses short-circuit logic and completes with true if the predicate matches a value.

predicate tested upon values

a new Flux with true if any value satisfies the predicate and false otherwise

Immediately apply the given transformation to this Flux in order to generate a target type.

flux.as(Mono::from).subscribe()

the returned type

the Function1 to immediately map this Flux into a target type instance.

an instance of P

Flux.compose for a bounded conversion to Publisher

Blocks until the upstream signals its first value or completes.

max duration timeout to wait for.

the Some value or None

Blocks until the upstream signals its first value or completes.

the Some value or None

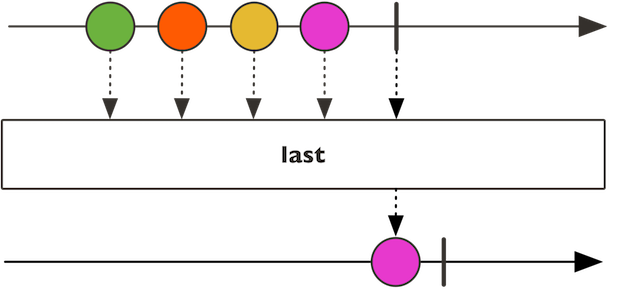

Blocks until the upstream completes and returns the last emitted value.

max duration timeout to wait for.

the last value or None

Blocks until the upstream completes and returns the last emitted value.

the last value or None

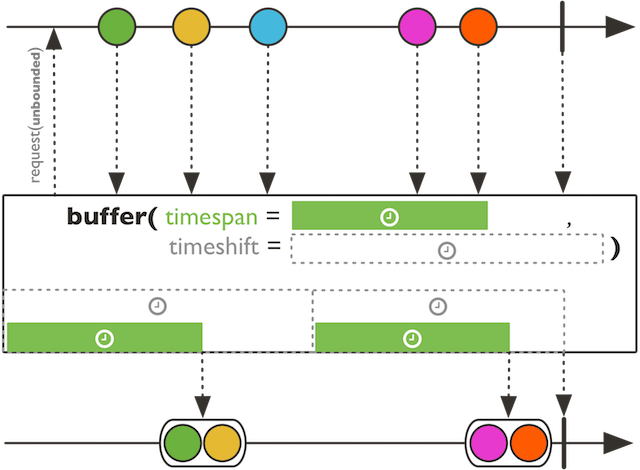

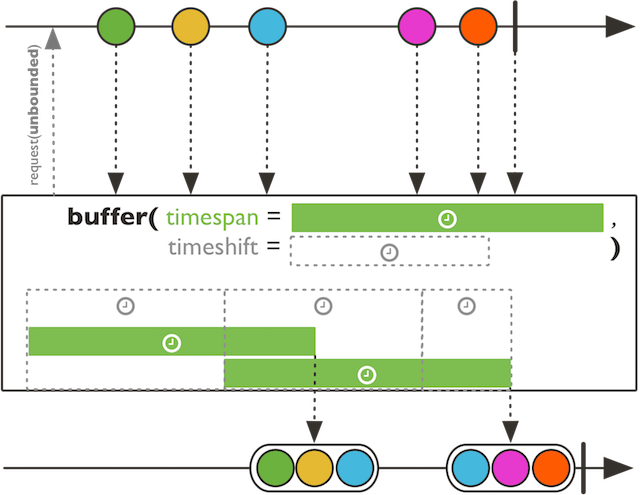

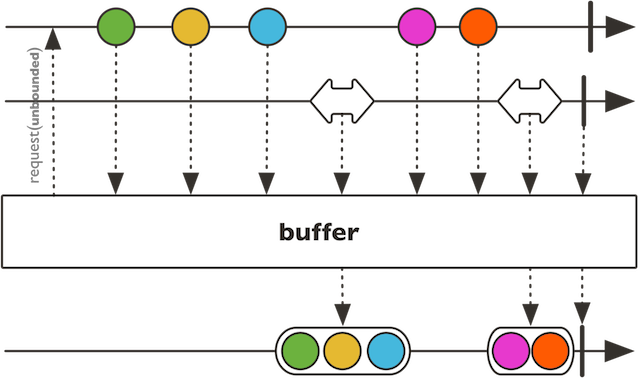

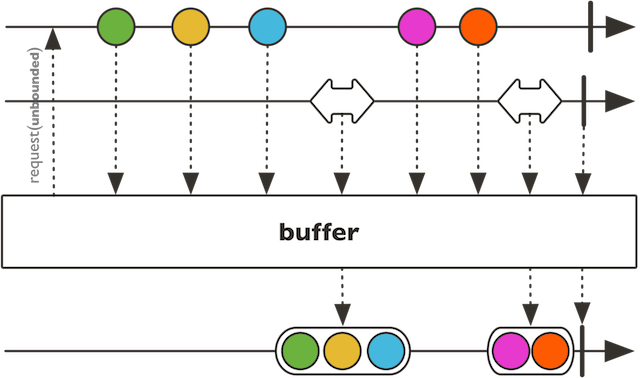

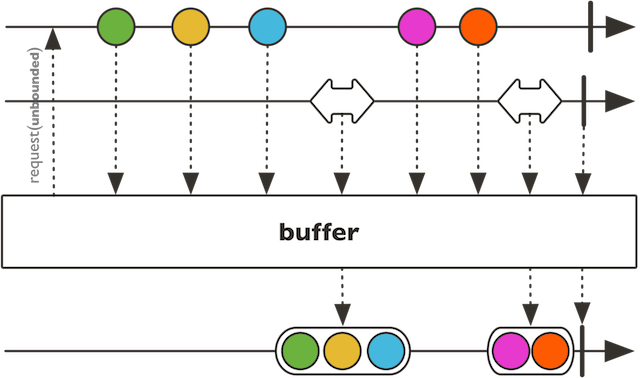

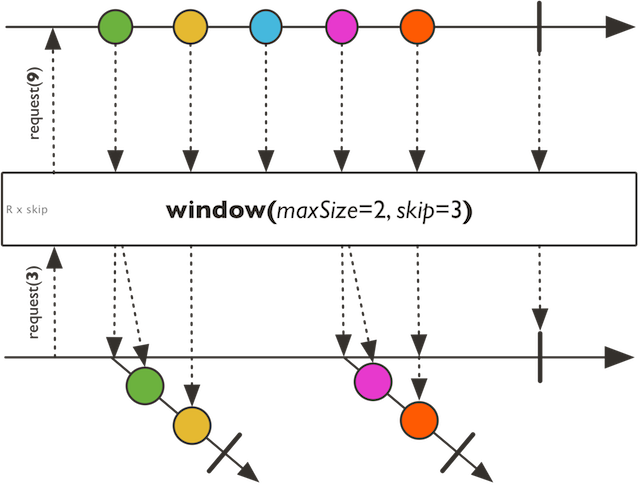

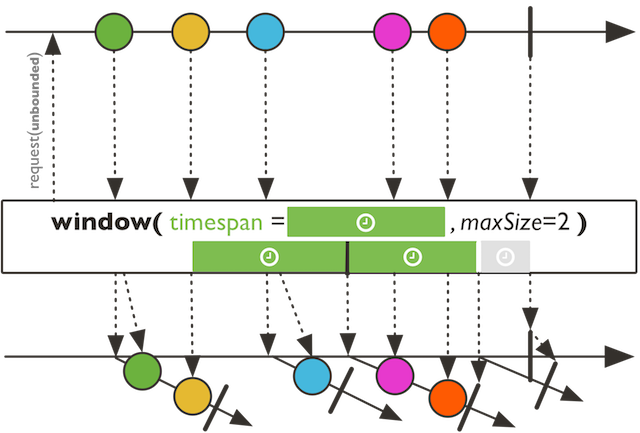

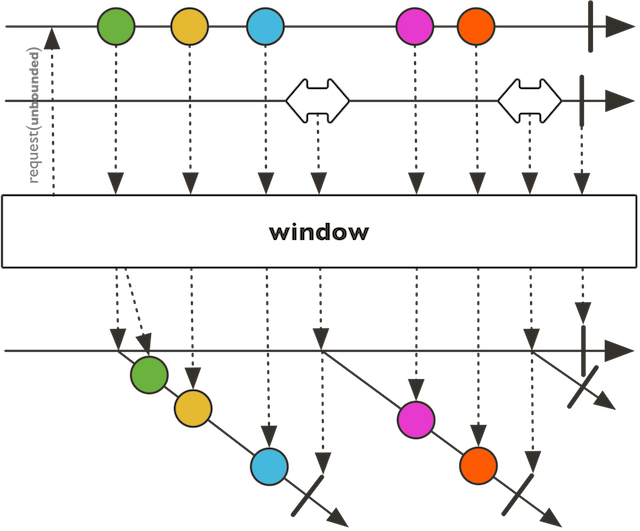

Collect incoming values into multiple Seq delimited by the given timeshift period. Each Seq bucket will last until the timespan has elapsed, thus releasing the bucket to the returned Flux.

When timeshift > timespan : dropping buffers

When timeshift < timespan : overlapping buffers

When timeshift == timespan : exact buffers

the duration to use to release buffered lists

the duration to use to create a new bucket

a microbatched Flux of Seq delimited by the given period timeshift and sized by timespan

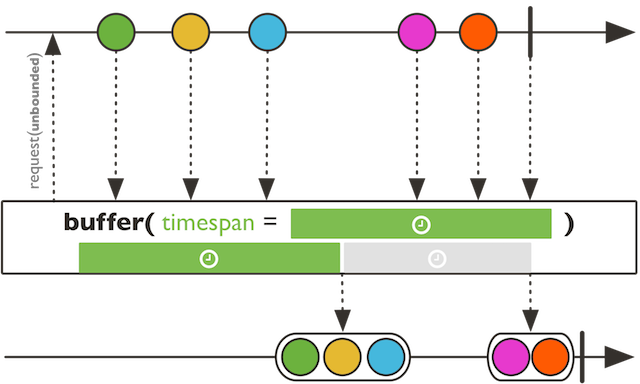

Collect incoming values into multiple Seq that will be pushed into the returned Flux every timespan.

Collect incoming values into multiple Seq delimited by the given Publisher signals.

the supplied Seq type

the other Publisher to subscribe to for emitting and recycling receiving bucket

the collection to use for each data segment

a microbatched Flux of Seq delimited by a Publisher

Collect incoming values into multiple Seq delimited by the given Publisher signals.

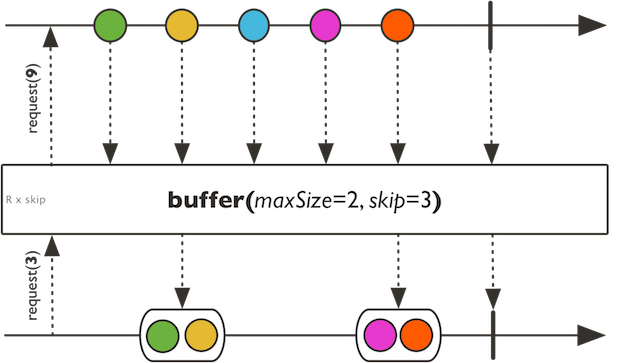

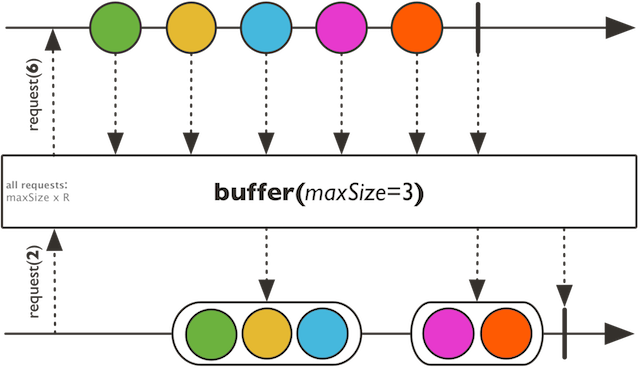

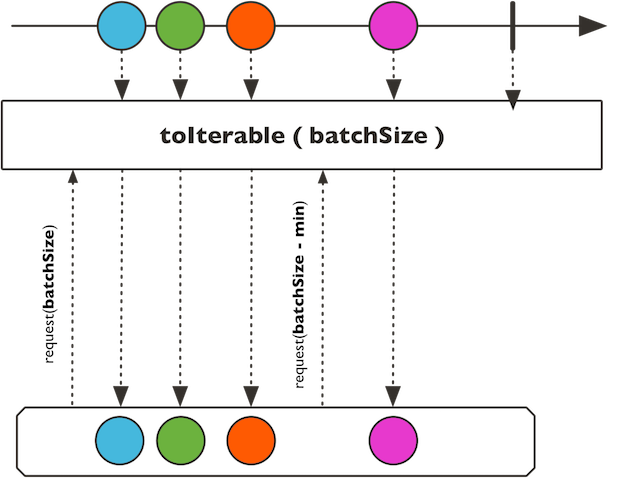

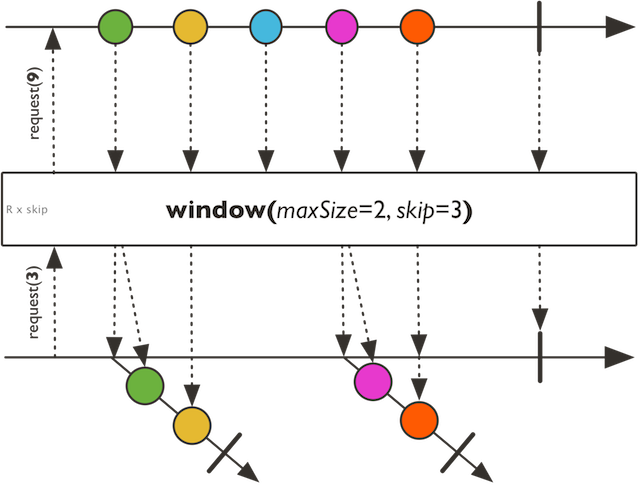

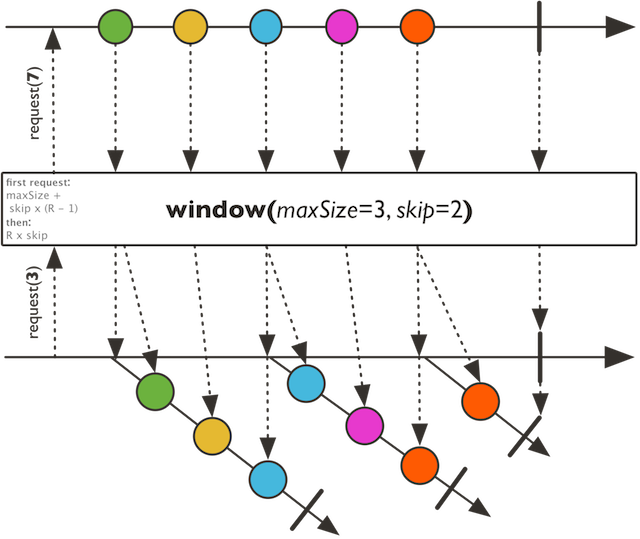

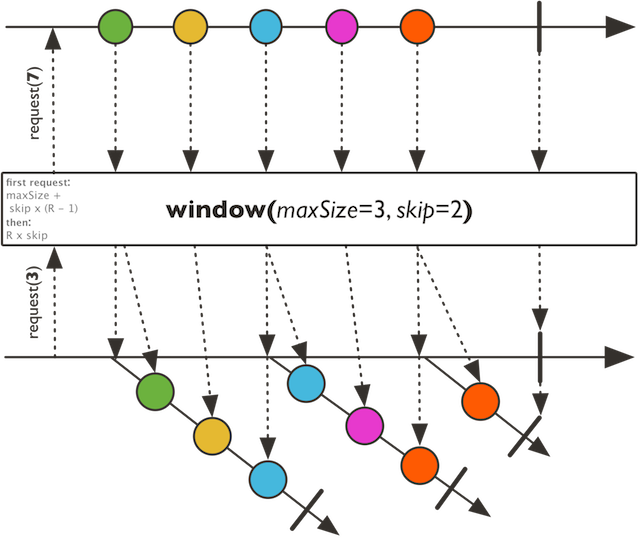

Collect incoming values into multiple mutable.Seq that will be pushed into the returned Flux when the given max size is reached or onComplete is received.

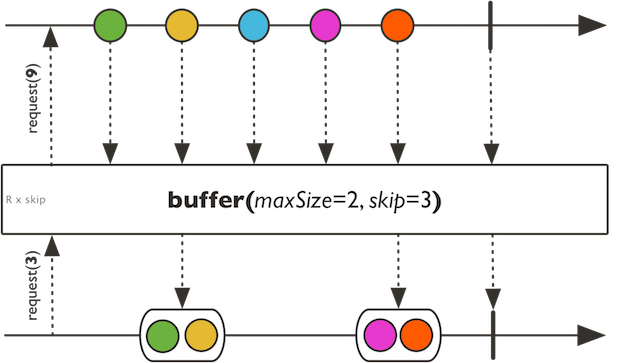

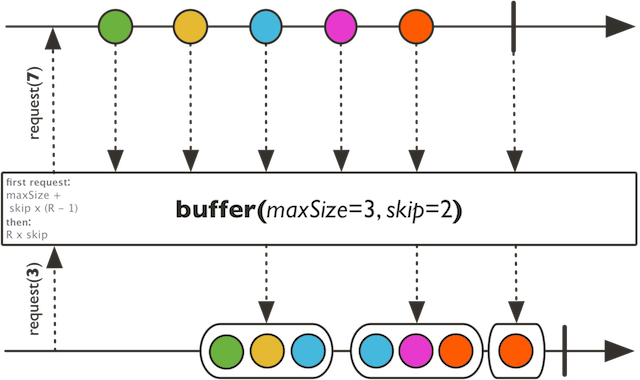

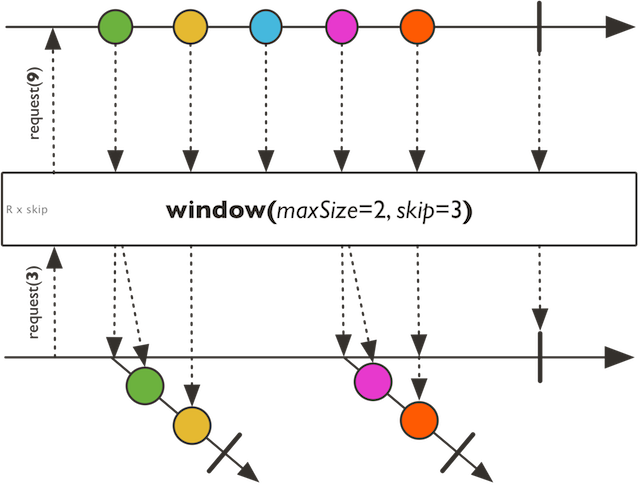

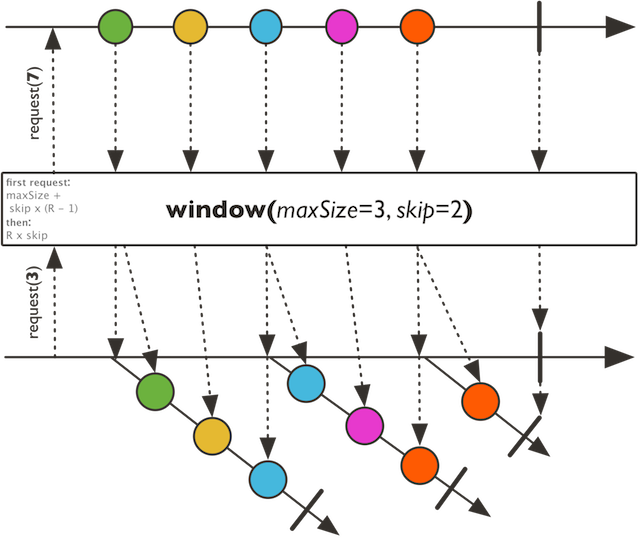

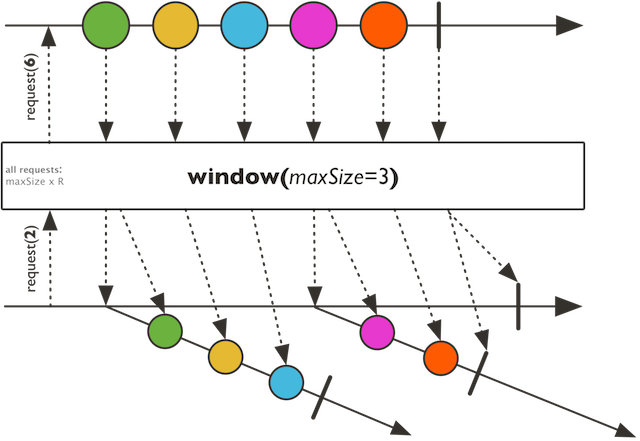

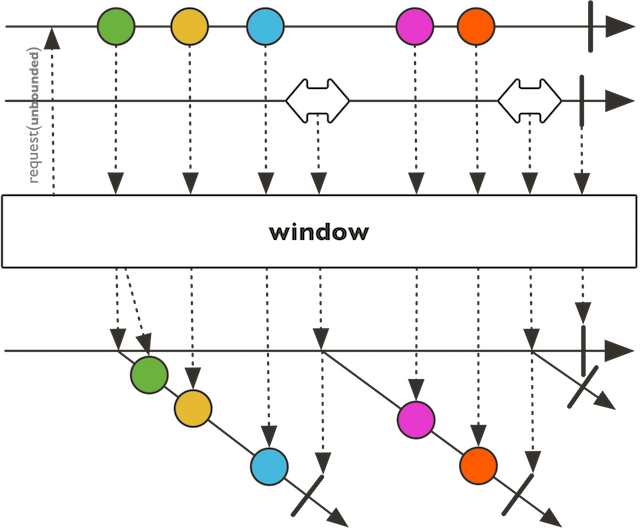

Collect incoming values into multiple mutable.Seq that will be pushed into the returned Flux when the given max size is reached or onComplete is received. A new container mutable.Seq will be created every given skip count.

When Skip > Max Size : dropping buffers

When Skip < Max Size : overlapping buffers

When Skip == Max Size : exact buffers

the supplied mutable.Seq type

the max collected size

the number of items to skip before creating a new bucket

the collection to use for each data segment

a microbatched Flux of possibly overlapped or gapped mutable.Seq
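The three cases above can be sketched with plain Scala collections. This is only a model of the bucketing rule, not the operator itself: `BufferSkipModel` and its `buffer` helper are hypothetical names, and the sketch assumes that a bucket opens at every `skip`-th element and that buckets still open at the end are flushed, as they would be on onComplete.

```scala
object BufferSkipModel {
  // Plain-collection model (assumption, not the Reactor operator):
  // a bucket opens at every `skip`-th element and holds at most `maxSize`
  // elements; buckets that are still open at the end are flushed.
  def buffer[T](source: Seq[T], maxSize: Int, skip: Int): List[List[T]] = {
    require(maxSize > 0 && skip > 0, "maxSize and skip must be positive")
    (0 until source.length by skip)
      .map(start => source.slice(start, start + maxSize).toList)
      .toList
  }
}
```

With the source `1 to 7`, `buffer(1 to 7, 2, 3)` drops every third element, `buffer(1 to 7, 3, 2)` repeats one element between neighbouring buckets, and `buffer(1 to 7, 3, 3)` partitions the source exactly.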

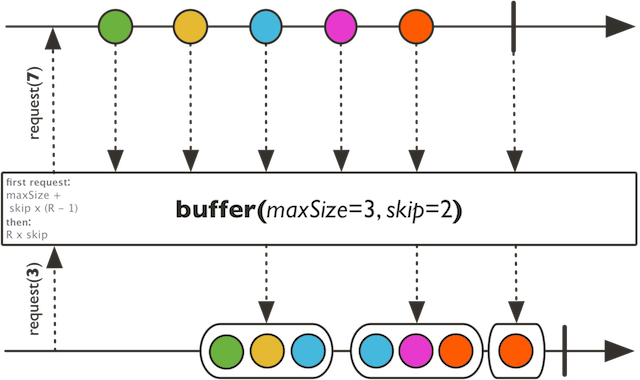

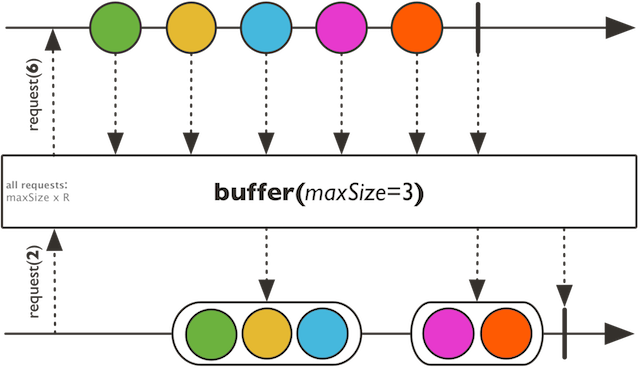

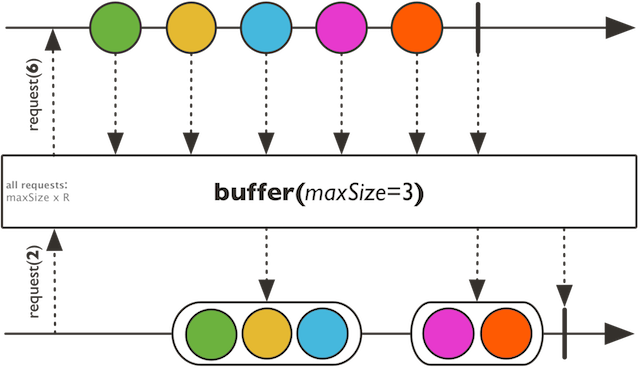

Collect incoming values into multiple Seq that will be pushed into the returned Flux when the given max size is reached or onComplete is received. A new container Seq will be created every given skip count.

When Skip > Max Size : dropping buffers

When Skip < Max Size : overlapping buffers

When Skip == Max Size : exact buffers

the max collected size

the number of items to skip before creating a new bucket

a microbatched Flux of possibly overlapped or gapped Seq

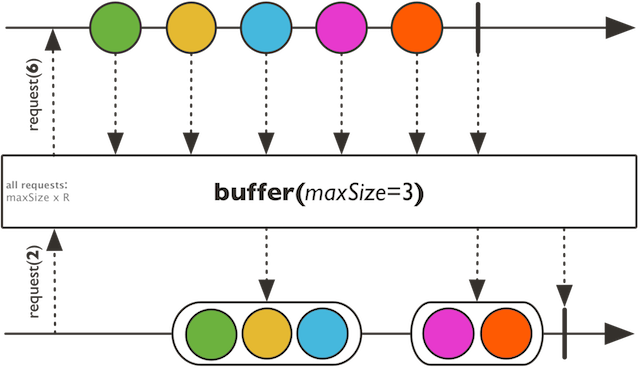

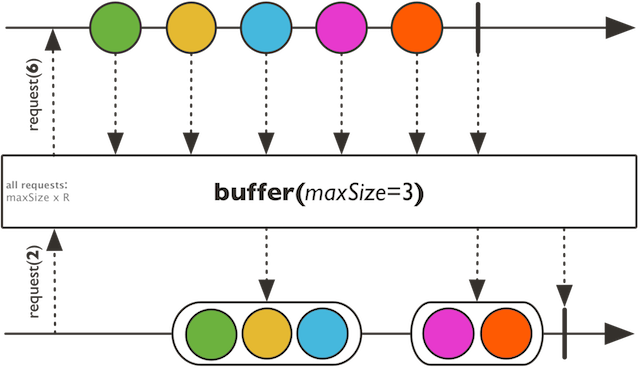

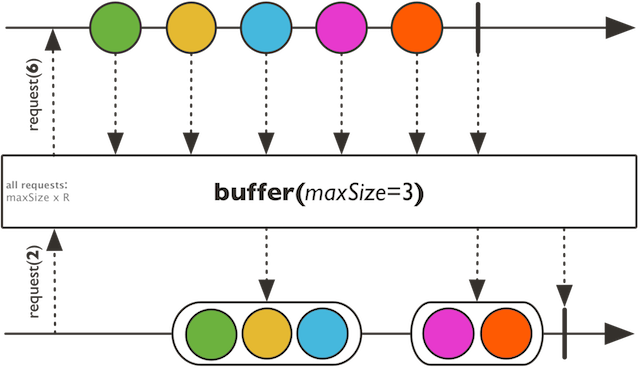

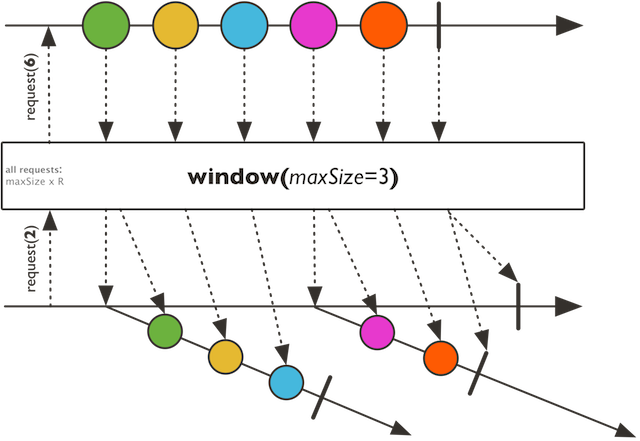

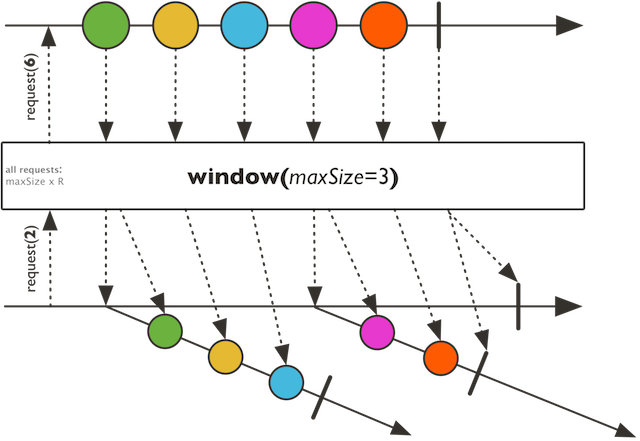

Collect incoming values into multiple Seq buckets that will be pushed into the returned Flux when the given max size is reached or onComplete is received.

the supplied Seq type

the maximum collected size

the collection to use for each data segment

a microbatched Flux of Seq

Collect incoming values into multiple Seq buckets that will be pushed into the returned Flux when the given max size is reached or onComplete is received.

Collect incoming values into a Seq that will be pushed into the returned Flux on complete only.

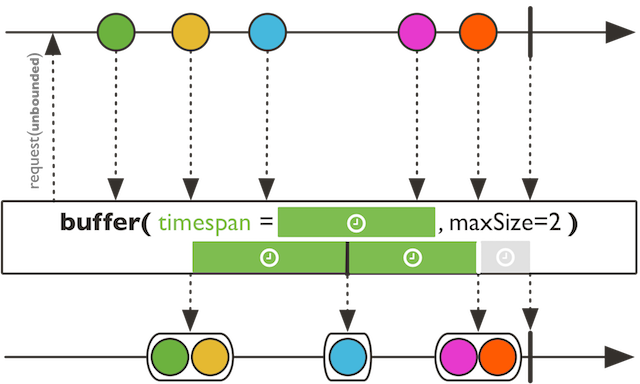

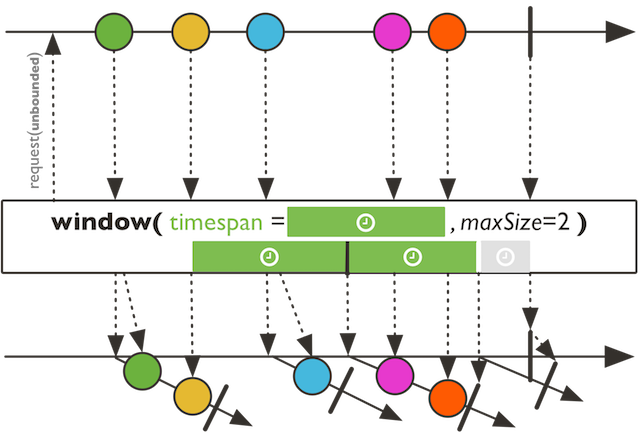

Collect incoming values into a Seq that will be pushed into the returned Flux every timespan OR maxSize items.

the supplied Seq type

the max collected size

the timeout to use to release a buffered list

the collection to use for each data segment

a microbatched Flux of Seq delimited by given size or a given period timeout

Collect incoming values into a Seq that will be pushed into the returned Flux every timespan OR maxSize items.

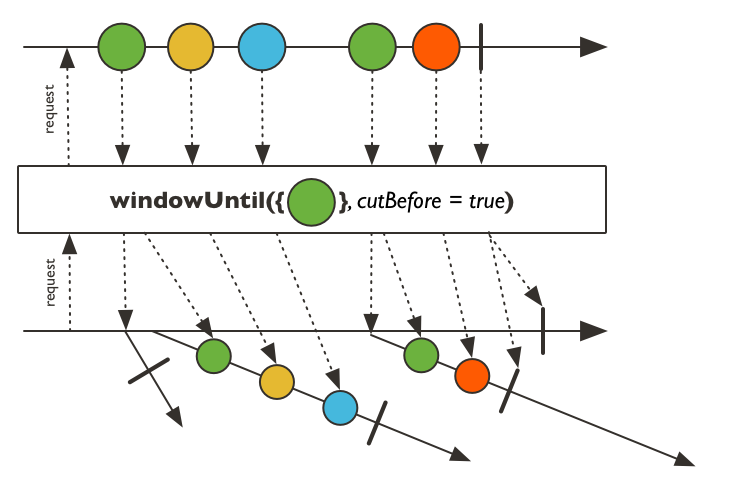

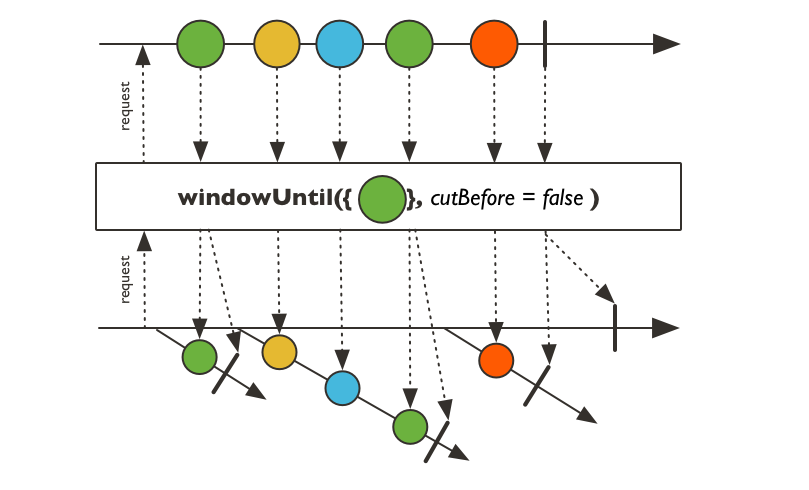

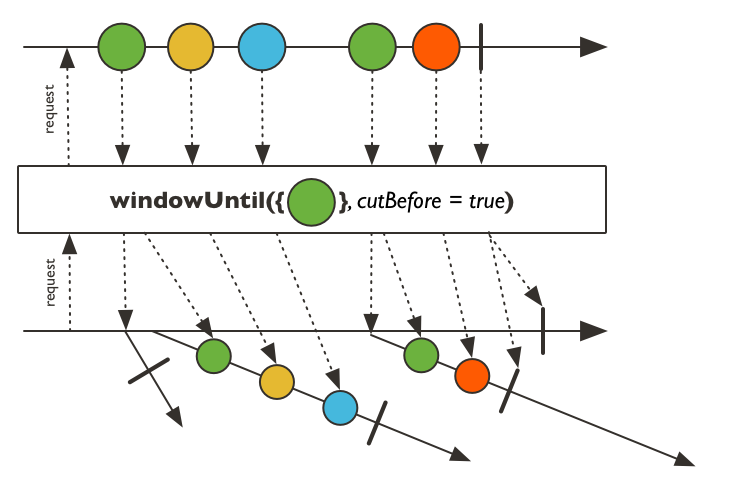

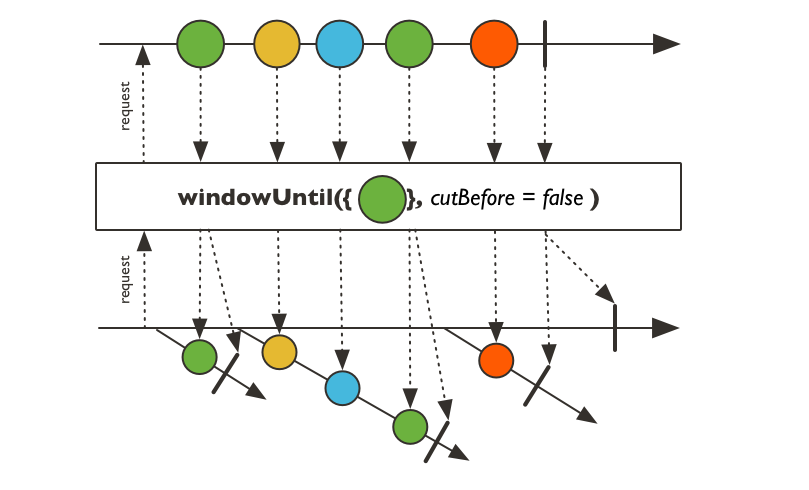

Collect incoming values into multiple Seq that will be pushed into the returned Flux each time the given predicate returns true. Which buffer receives the element that triggers the predicate (and thus closes a buffer) depends on the cutBefore parameter: set it to true to include the boundary element in the newly opened buffer, false to include it in the closed buffer (as in Flux.bufferUntil).

On completion, if the latest buffer is non-empty and has not been closed it is emitted. However, such a "partial" buffer isn't emitted in case of onError termination.

a predicate that triggers the next buffer when it becomes true.

set to true to include the triggering element in the new buffer rather than the old.

a microbatched Flux of Seq
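The effect of cutBefore can be illustrated on a plain collection. The sketch below is a model of the boundary rule described above, not the operator itself; `BufferUntilModel` and its `bufferUntil` helper are hypothetical names, and the model assumes that an empty buffer is never emitted.

```scala
object BufferUntilModel {
  // Plain-collection model of the boundary rule (assumption, not the Reactor operator).
  // cutBefore = false: the matching element is the last element of the closed buffer.
  // cutBefore = true:  the matching element is the first element of the new buffer.
  def bufferUntil[T](source: Seq[T], cutBefore: Boolean)(predicate: T => Boolean): List[List[T]] = {
    val (closed, open) = source.foldLeft((Vector.empty[List[T]], Vector.empty[T])) {
      case ((done, current), element) =>
        if (!predicate(element)) (done, current :+ element)
        else if (cutBefore) {
          // Emit what was collected so far (if anything) and start a new buffer.
          val emitted = if (current.isEmpty) done else done :+ current.toList
          (emitted, Vector(element))
        } else (done :+ (current :+ element).toList, Vector.empty[T])
    }
    // On completion, a non-empty partial buffer is emitted as well.
    (if (open.isEmpty) closed else closed :+ open.toList).toList
  }
}
```

For the source `1 to 7` and the predicate `_ % 3 == 0`, the model yields `[1, 2, 3], [4, 5, 6], [7]` with cutBefore set to false and `[1, 2], [3, 4, 5], [6, 7]` with cutBefore set to true.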

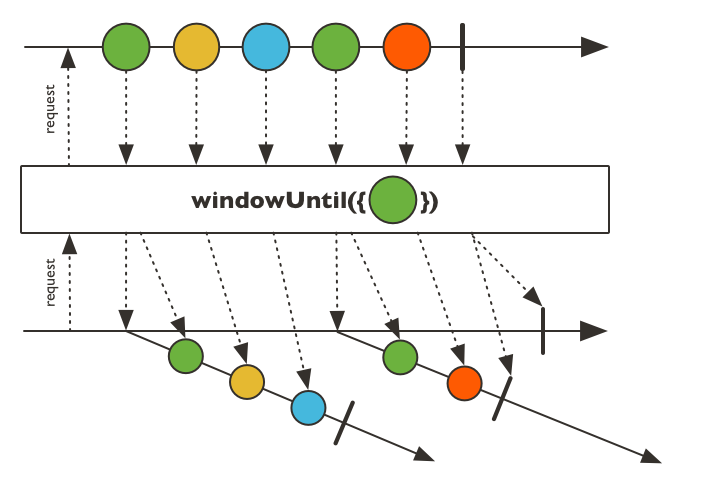

Collect incoming values into multiple Seq that will be pushed into the returned Flux each time the given predicate returns true. Note that the element that triggers the predicate to return true (and thus closes a buffer) is included as last element in the emitted buffer.

On completion, if the latest buffer is non-empty and has not been closed it is emitted. However, such a "partial" buffer isn't emitted in case of onError termination.

a predicate that triggers the next buffer when it becomes true.

a microbatched Flux of Seq

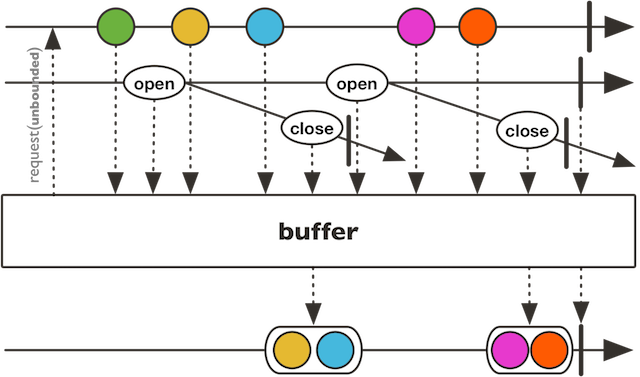

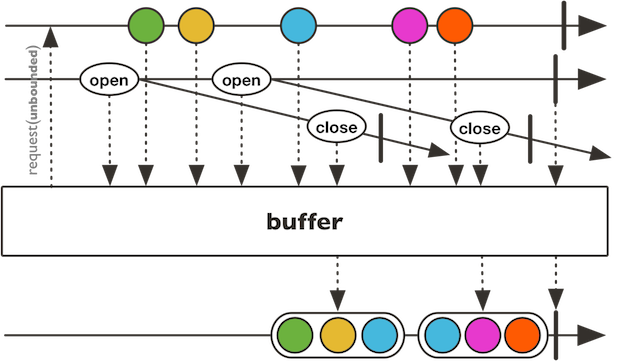

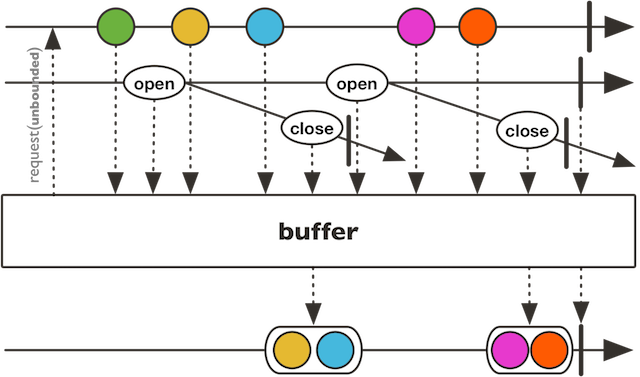

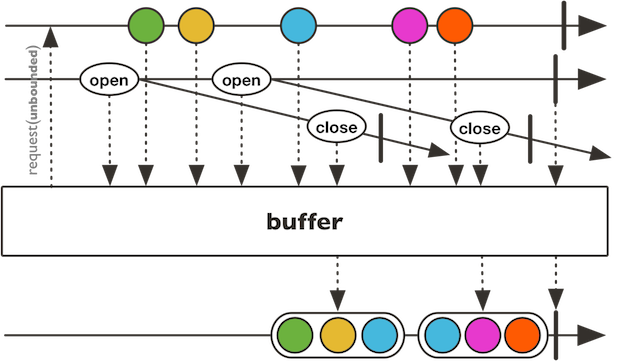

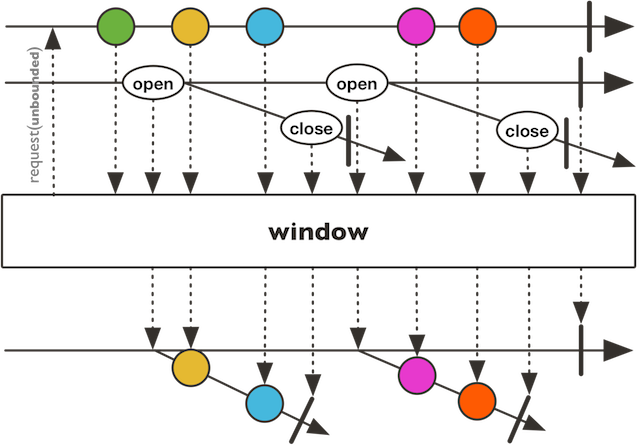

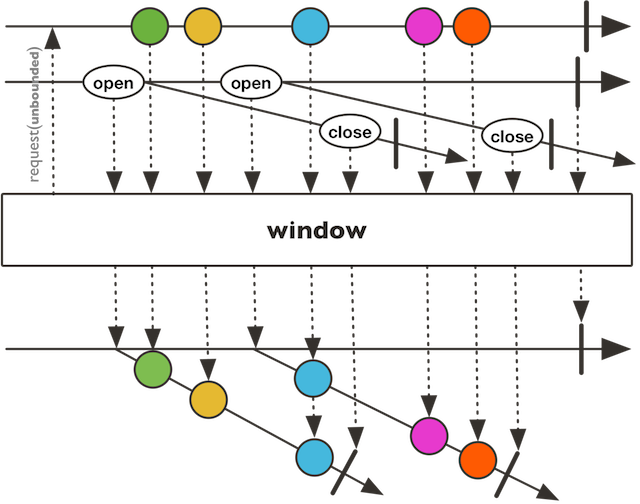

Collect incoming values into multiple Seq delimited by the given Publisher signals. Each Seq bucket will last until the mapped Publisher receiving the boundary signal emits, thus releasing the bucket to the returned Flux.

When Open signal is strictly not overlapping Close signal : dropping buffers

When Open signal is strictly more frequent than Close signal : overlapping buffers

When Open signal is exactly coordinated with Close signal : exact buffers

the element type of the bucket-opening sequence

the element type of the bucket-closing sequence

the supplied Seq type

a Publisher to subscribe to for creating new receiving bucket signals.

a Publisher factory provided the opening signal and returning a Publisher to subscribe to for emitting relative bucket.

the collection to use for each data segment

a microbatched Flux of Seq delimited by an opening Publisher and a relative closing Publisher

Collect incoming values into multiple Seq delimited by the given Publisher signals. Each Seq bucket will last until the mapped Publisher receiving the boundary signal emits, thus releasing the bucket to the returned Flux.

When Open signal is strictly not overlapping Close signal : dropping buffers

When Open signal is strictly more frequent than Close signal : overlapping buffers

When Open signal is exactly coordinated with Close signal : exact buffers

the element type of the bucket-opening sequence

the element type of the bucket-closing sequence

a Publisher to subscribe to for creating new receiving bucket signals.

a Publisher factory provided the opening signal and returning a Publisher to subscribe to for emitting relative bucket.

a microbatched Flux of Seq delimited by an opening Publisher and a relative closing Publisher

Collect incoming values into multiple Seq that will be pushed into the returned Flux. Each buffer continues aggregating values while the given predicate returns true, and a new buffer is created as soon as the predicate returns false. Note that the element that triggers the predicate to return false (and thus closes a buffer) is NOT included in any emitted buffer.

On completion, if the latest buffer is non-empty and has not been closed it is emitted. However, such a "partial" buffer isn't emitted in case of onError termination.

a predicate that triggers the next buffer when it becomes false.

a microbatched Flux of Seq
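A plain-collection sketch of this rule, under stated assumptions: `BufferWhileModel` and `bufferWhile` are hypothetical names, the code models the description above rather than the operator, and it assumes that empty buffers are not emitted.

```scala
object BufferWhileModel {
  // Plain-collection model (assumption, not the Reactor operator):
  // elements are collected while the predicate holds; an element that fails
  // the predicate closes the current buffer and is itself dropped.
  def bufferWhile[T](source: Seq[T])(predicate: T => Boolean): List[List[T]] = {
    val (closed, open) = source.foldLeft((Vector.empty[List[T]], Vector.empty[T])) {
      case ((done, current), element) =>
        if (predicate(element)) (done, current :+ element)
        else if (current.isEmpty) (done, current)      // nothing collected yet, element dropped
        else (done :+ current.toList, Vector.empty[T]) // close the buffer, element dropped
    }
    // On completion, a non-empty partial buffer is emitted as well.
    (if (open.isEmpty) closed else closed :+ open.toList).toList
  }
}
```

For example, `bufferWhile(List(1, 2, 0, 3, 0, 0, 4))(_ > 0)` yields `[1, 2], [3], [4]` in this model: the zeros act as separators and never appear in a buffer.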

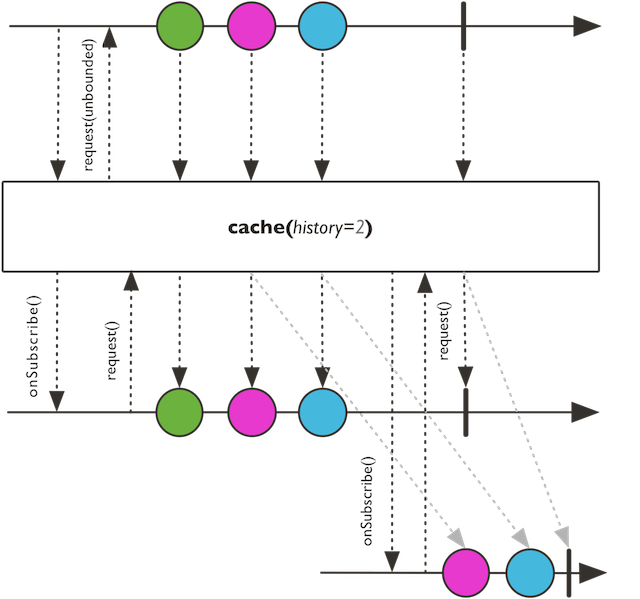

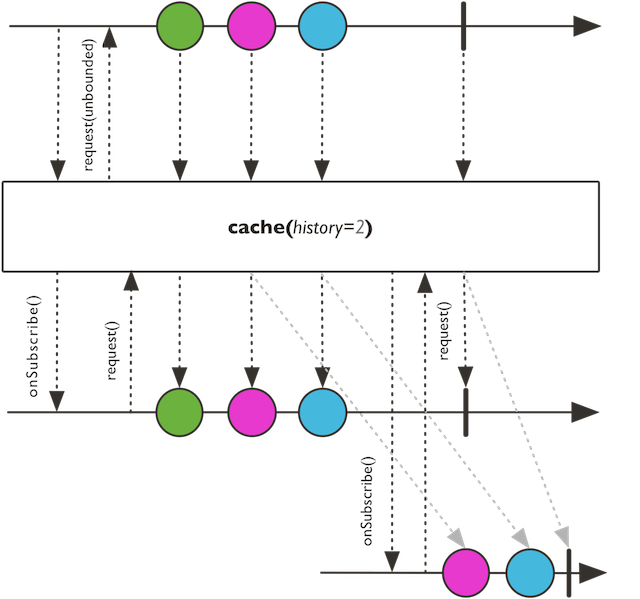

Turn this Flux into a hot source and cache last emitted signals for further Subscriber. Will retain up to the given history size with per-item expiry timeout.

number of events retained in history excluding complete and error

Time-to-live for each cached item.

a replaying Flux

Turn this Flux into a hot source and cache last emitted signals for further Subscriber.

Turn this Flux into a hot source and cache last emitted signals for further Subscriber. Will retain up to the given history size onNext signals. Completion and Error will also be replayed.

number of events retained in history excluding complete and error

a replaying Flux

Turn this Flux into a hot source and cache last emitted signals for further Subscriber.

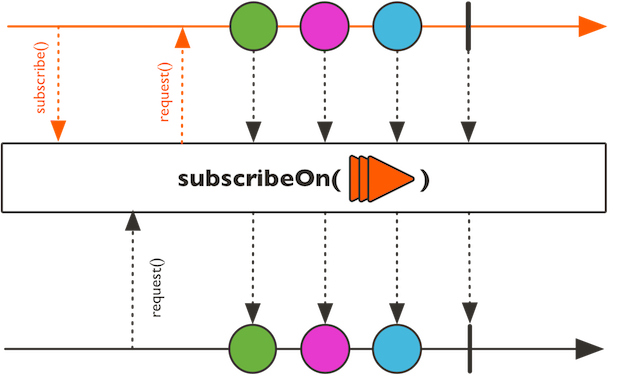

Prepare this Flux so that subscribers will cancel from it on a specified Scheduler.

Cast the current Flux produced type into a target produced type.

Activate assembly tracing for this particular Flux and give it a description that will be reflected in the assembly traceback in case of an error upstream of the checkpoint.

It should be placed towards the end of the reactive chain, as errors triggered downstream of it cannot be observed and augmented with assembly trace.

The description could for example be a meaningful name for the assembled flux or a wider correlation ID.

a description to include in the assembly traceback.

the assembly tracing Flux.

Activate assembly tracing for this particular Flux, in case of an error upstream of the checkpoint.

It should be placed towards the end of the reactive chain, as errors triggered downstream of it cannot be observed and augmented with assembly trace.

the assembly tracing Flux.

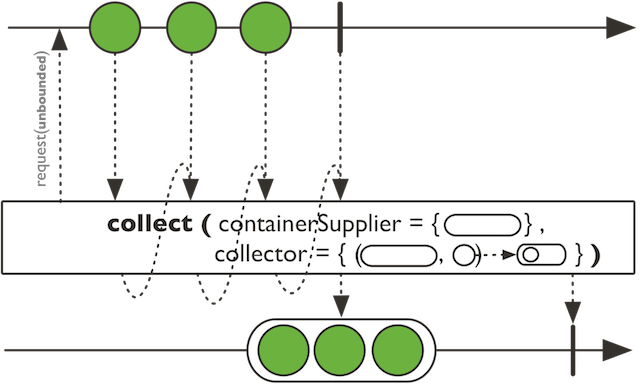

Collect the Flux sequence with the given collector and supplied container on subscribe. The collected result will be emitted when this sequence completes.

the Flux collected container type

the supplier of the container instance for each Subscriber

the consumer of both the container instance and the current value

a Mono sequence of the collected value on complete

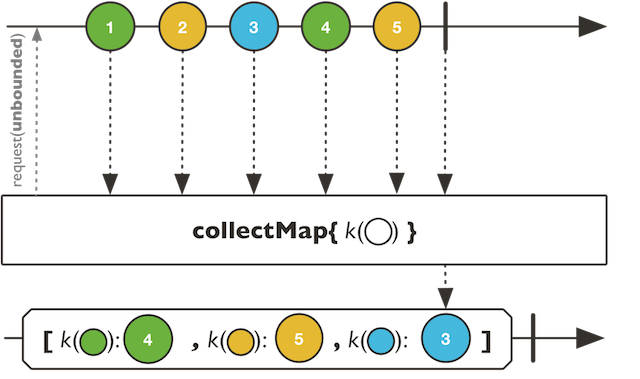

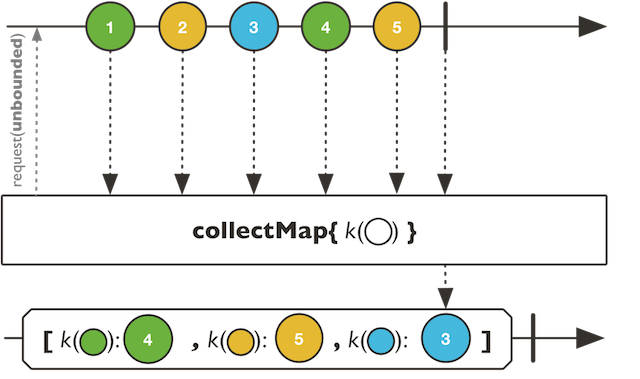

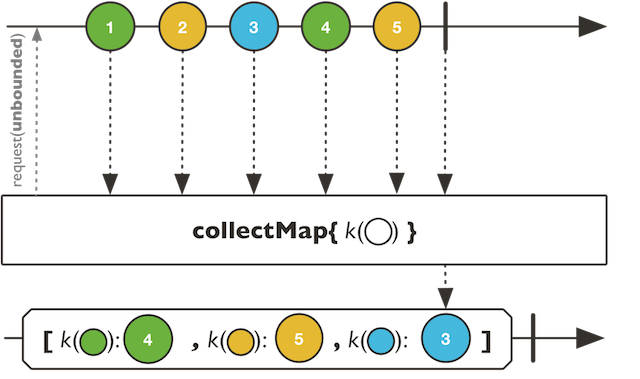

Convert all this Flux sequence into a supplied map where the key is extracted by the given function and the value will be the most recent extracted item for this key.

the key extracted from each value of this Flux instance

the value extracted from each value of this Flux instance

a Function1 to route items into a keyed Traversable

a Function1 to select the data to store from each item

a mutable.Map factory called for each Subscriber

Convert all this Flux sequence into a hashed map where the key is extracted by the given function and the value will be the most recent extracted item for this key.

the key extracted from each value of this Flux instance

the value extracted from each value of this Flux instance

a Function1 to route items into a keyed Traversable

a Function1 to select the data to store from each item

Convert all this Flux sequence into a hashed map where the key is extracted by the given Function1 and the value will be the most recent emitted item for this key.

the key extracted from each value of this Flux instance

a Function1 to route items into a keyed Traversable

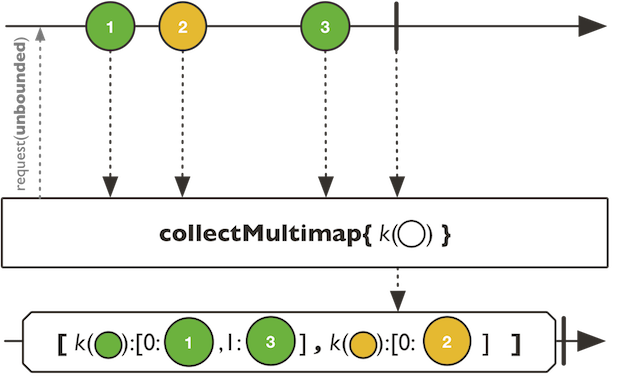

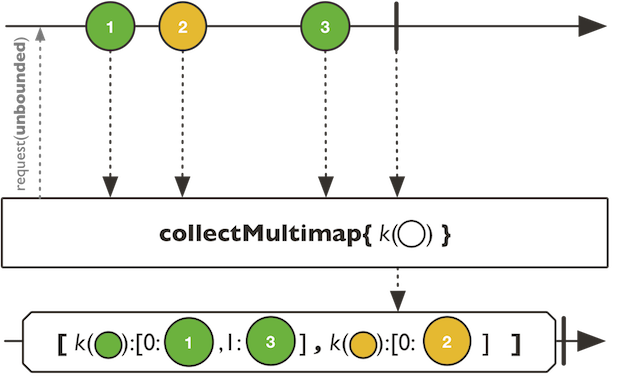

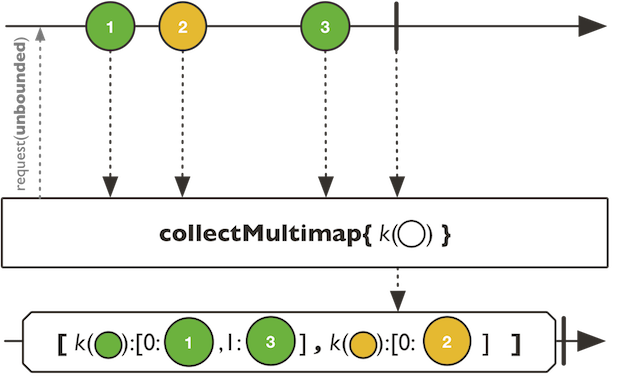

Convert this Flux sequence into a supplied map where the key is extracted by the given function and the value will be all the extracted items for this key.

the key extracted from each value of this Flux instance

the value extracted from each value of this Flux instance

a Function1 to route items into a keyed Traversable

a Function1 to select the data to store from each item

a Map factory called for each Subscriber

Convert this Flux sequence into a hashed map where the key is extracted by the given function and the value will be all the extracted items for this key.

the key extracted from each value of this Flux instance

the value extracted from each value of this Flux instance

a Function1 to route items into a keyed Traversable

a Function1 to select the data to store from each item

Convert this Flux sequence into a hashed map where the key is extracted by the given function and the value will be all the emitted items for this key.

the key extracted from each value of this Flux instance

a Function1 to route items into a keyed Traversable
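The difference between the single-value map ("most recent extracted item") and the multimap ("all the extracted items") can be sketched with folds over a plain collection. `CollectMapModel`, `collectMap` and `collectMultimap` below are hypothetical helper names used to model the descriptions above; they return immutable maps directly rather than a Mono.

```scala
object CollectMapModel {
  // Model of "the value will be the most recent extracted item for this key":
  // a later item with the same key overwrites the earlier one.
  def collectMap[T, K, V](source: Seq[T])(key: T => K, value: T => V): Map[K, V] =
    source.foldLeft(Map.empty[K, V])((acc, item) => acc.updated(key(item), value(item)))

  // Model of "the value will be all the extracted items for this key":
  // items with the same key are appended in emission order.
  def collectMultimap[T, K, V](source: Seq[T])(key: T => K, value: T => V): Map[K, List[V]] =
    source.foldLeft(Map.empty[K, List[V]]) { (acc, item) =>
      val k = key(item)
      acc.updated(k, acc.getOrElse(k, Nil) :+ value(item))
    }
}
```

Keying the words `apple`, `avocado`, `banana`, `blueberry`, `cherry` by first letter and storing their lengths gives `a -> 7, b -> 9, c -> 6` with `collectMap` and `a -> [5, 7], b -> [6, 9], c -> [6]` with `collectMultimap`.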

Accumulate this Flux sequence in a Seq that is emitted to the returned Mono on onComplete.

Accumulate this Flux sequence in a Seq, sorted using the given comparator, that is emitted to the returned Mono on onComplete.

Accumulate and sort this Flux sequence in a Seq that is emitted to the returned Mono on onComplete.

Defer the transformation of this Flux in order to generate a target Flux for each new Subscriber.

flux.compose(Mono::from).subscribe()

the item type in the returned Publisher

the Function1 to map this Flux into a target Publisher instance for each new subscriber

a new Flux

Flux.as for a loose conversion to an arbitrary type

Flux.transform for immediate transformation of Flux

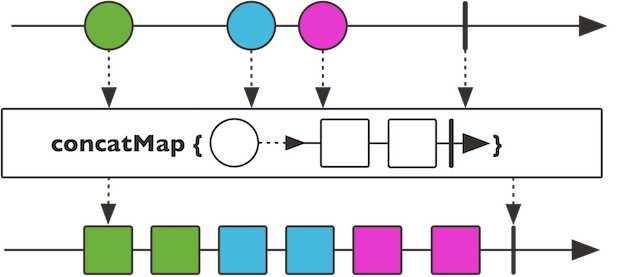

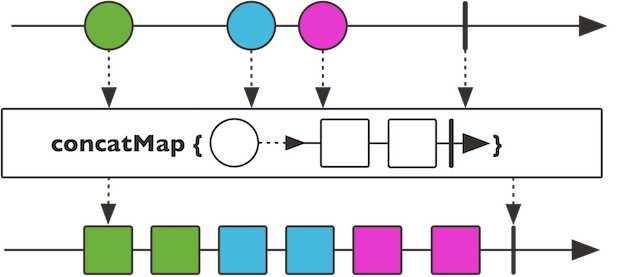

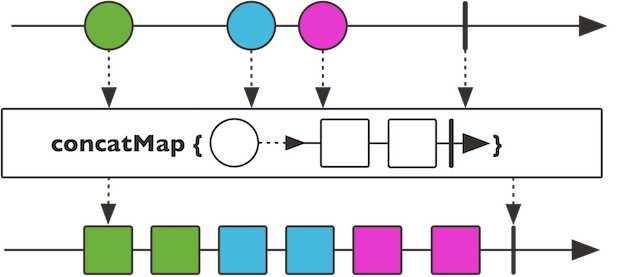

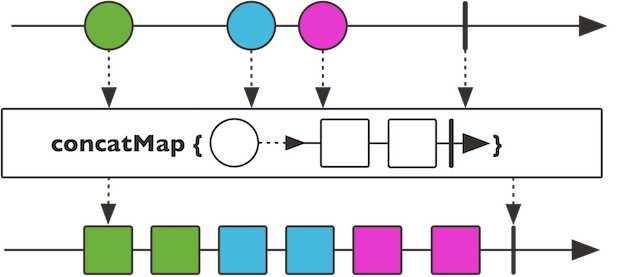

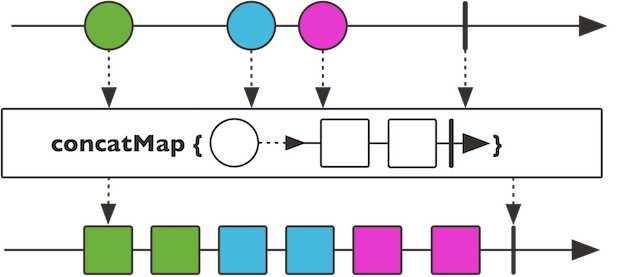

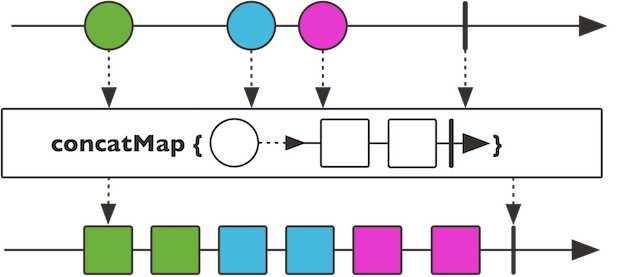

Bind dynamic sequences given this input sequence like Flux.flatMap, but preserve ordering and concatenate emissions instead of merging (no interleave). Errors will immediately short circuit current concat backlog.

the produced concatenated type

the function to transform this sequence of T into concatenated sequences of V

the inner source produced demand

a concatenated Flux

Bind dynamic sequences given this input sequence like Flux.flatMap, but preserve ordering and concatenate emissions instead of merging (no interleave). Errors will immediately short circuit current concat backlog.

the produced concatenated type

the function to transform this sequence of T into concatenated sequences of V

a concatenated Flux

Bind dynamic sequences given this input sequence like Flux.flatMap, but preserve ordering and concatenate emissions instead of merging (no interleave).

Errors will be delayed after the current concat backlog if delayUntilEnd is false or after all sources if delayUntilEnd is true.

the produced concatenated type

the function to transform this sequence of T into concatenated sequences of V

delay error until all sources have been consumed instead of after the current source

the inner source produced demand

a concatenated Flux

Bind dynamic sequences given this input sequence like Flux.flatMap, but preserve ordering and concatenate emissions instead of merging (no interleave).

Errors will be delayed until all concatenated sources terminate.

the produced concatenated type

the function to transform this sequence of T into concatenated sequences of V

the inner source produced demand

a concatenated Flux

Bind dynamic sequences given this input sequence like Flux.flatMap, but preserve ordering and concatenate emissions instead of merging (no interleave).

Errors will be delayed after the current concat backlog.

the produced concatenated type

the function to transform this sequence of T into concatenated sequences of V

a concatenated Flux

Bind Iterable sequences given this input sequence like Flux.flatMapIterable, but preserve ordering and concatenate emissions instead of merging (no interleave).

Errors will be delayed after the current concat backlog.

the produced concatenated type

the function to transform this sequence of T into concatenated sequences of R

the inner source produced demand

a concatenated Flux

Bind Iterable sequences given this input sequence like Flux.flatMapIterable, but preserve ordering and concatenate emissions instead of merging (no interleave).

Errors will be delayed after the current concat backlog.

the produced concatenated type

the function to transform this sequence of T into concatenated sequences of R

a concatenated Flux

Concatenate emissions of this Flux with the provided Publisher (no interleave).

Counts the number of values in this Flux.

Provide a default unique value if this sequence is completed without any data

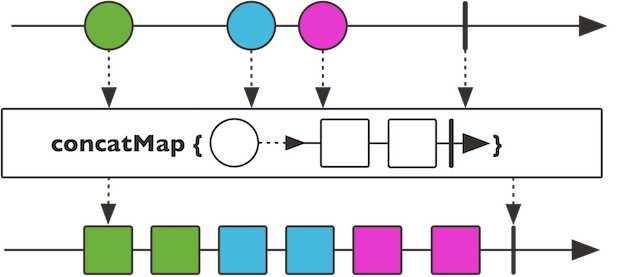

Delay each of this Flux elements (Subscriber.onNext signals) by a given duration, on a given Scheduler.

duration to delay each Subscriber.onNext signal

the Scheduler to use for delaying each signal

a delayed Flux

delaySubscription(Duration) to introduce a delay at the beginning of the sequence only

Delay each of this Flux elements (Subscriber.onNext signals) by a given duration.

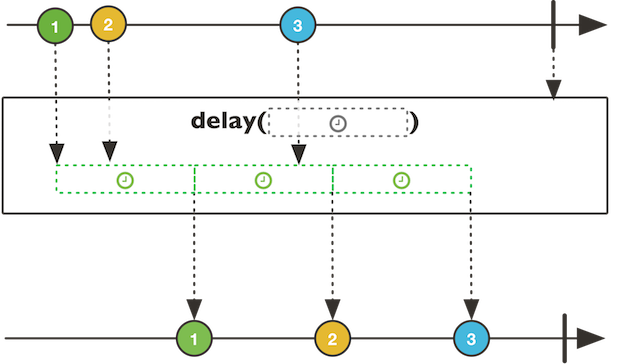

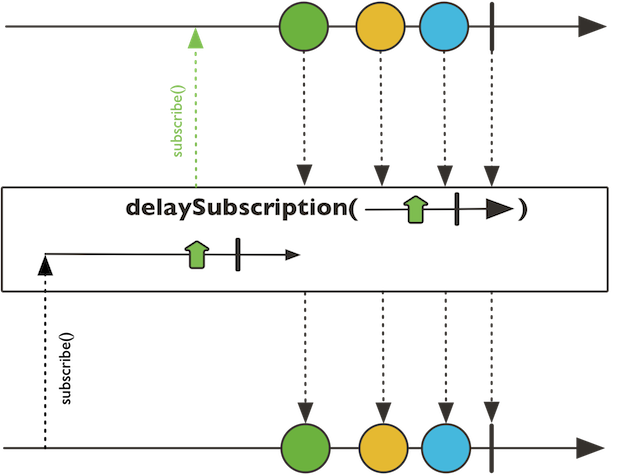

Delay the subscription to the main source until another Publisher signals a value or completes.

the other source type

a Publisher whose next or complete signal triggers the subscription to this Flux

a delayed Flux

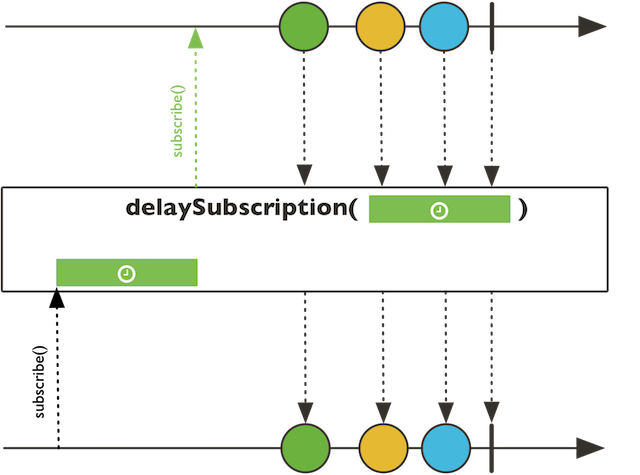

Delay the subscription to this Flux source until the given period elapses.

duration before subscribing this Flux

a time-capable Scheduler instance to run on

a delayed Flux

Delay the subscription to this Flux source until the given period elapses.

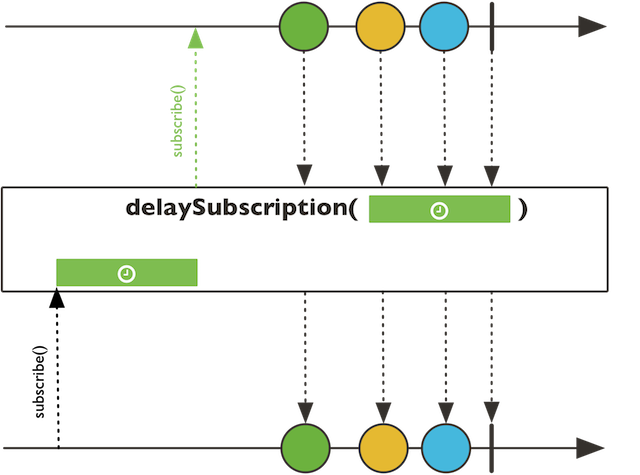

A "phantom-operator" working only if this Flux emits onNext, onError or onComplete reactor.core.publisher.Signal instances. The relative Subscriber callback will be invoked: an error reactor.core.publisher.Signal will trigger onError and a complete reactor.core.publisher.Signal will trigger onComplete.

the dematerialized type

a dematerialized Flux

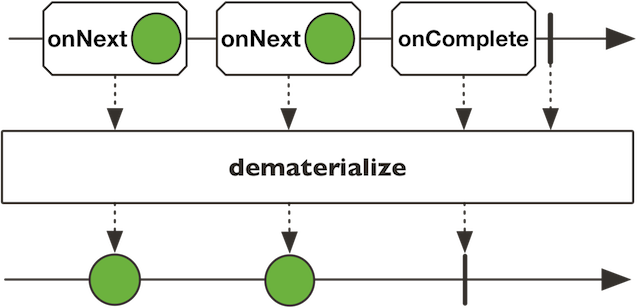

For each Subscriber, tracks this Flux values that have been seen and filters out duplicates given the extracted key.

the type of the key extracted from each value in this sequence

function to compute comparison key for each element

a filtering Flux with values having distinct keys

For each Subscriber, tracks this Flux values that have been seen and filters out duplicates.

Filter out subsequent repetitions of an element (that is, if they arrive right after one another), as compared by a key extracted through the user provided Function1 and then comparing keys with the supplied Function2.

the type of the key extracted from each value in this sequence

function to compute comparison key for each element

predicate used to compare keys.

a filtering Flux with only one occurrence in a row of each element of the same key for which the predicate returns true (yet element keys can repeat in the overall sequence)

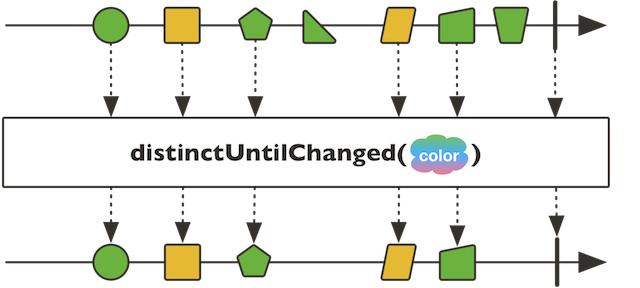

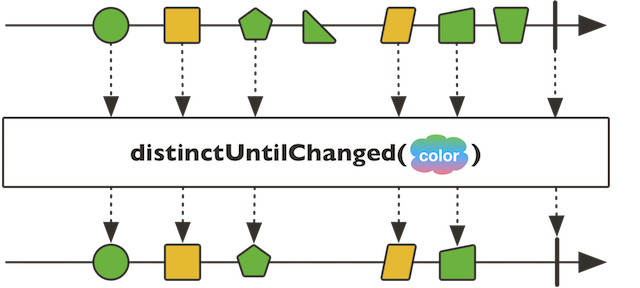

Filters out subsequent and repeated elements provided a matching extracted key.

the type of the key extracted from each value in this sequence

function to compute comparison key for each element

a filtering Flux with conflated repeated elements given a comparison key

Filters out subsequent and repeated elements.
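The two filtering styles described above differ in what they remember: the per-Subscriber duplicate filter tracks every key seen so far, while the "subsequent repetitions" filter only compares with the previous element. A minimal sketch on plain collections, where `DistinctModel` and its two helpers are hypothetical names modelling the descriptions rather than the operators:

```scala
object DistinctModel {
  // Model of the duplicate filter: every key seen so far is remembered,
  // so a key can pass only once in the whole sequence.
  def distinct[T, K](source: Seq[T])(key: T => K): List[T] = {
    val seen = scala.collection.mutable.Set.empty[K]
    source.filter(item => seen.add(key(item))).toList // add returns true for a new key
  }

  // Model of the repetition filter: only the previously kept element is
  // compared, so a key may reappear later in the sequence.
  def distinctUntilChanged[T, K](source: Seq[T])(key: T => K): List[T] =
    source.foldLeft(Vector.empty[T]) { (kept, item) =>
      if (kept.lastOption.exists(last => key(last) == key(item))) kept else kept :+ item
    }.toList
}
```

For the source `1, 1, 2, 2, 1, 3, 3` with the identity key, the first model keeps `1, 2, 3` and the second keeps `1, 2, 1, 3`.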

Triggered after the Flux terminates, either by completing downstream successfully or with an error.

Triggered when the Flux is cancelled.

Triggered when the Flux completes successfully.

Triggers side-effects when the Flux emits an item, fails with an error or completes successfully. All these events are represented as a Signal that is passed to the side-effect callback. Note that this is an advanced operator, typically used for monitoring of a Flux.

the mandatory callback to call on Subscriber.onNext, Subscriber.onError and Subscriber.onComplete

an observed Flux

Signal

Triggered when the Flux completes with an error matching the given exception.

Triggered when the Flux completes with an error matching the given exception type.

Triggered when the Flux completes with an error.

Triggered when the Flux emits an item.

Attach a Long consumer to this Flux that will observe any request to this Flux.

Triggered when the Flux is subscribed.

Triggered when the Flux terminates, either by completing successfully or with an error.

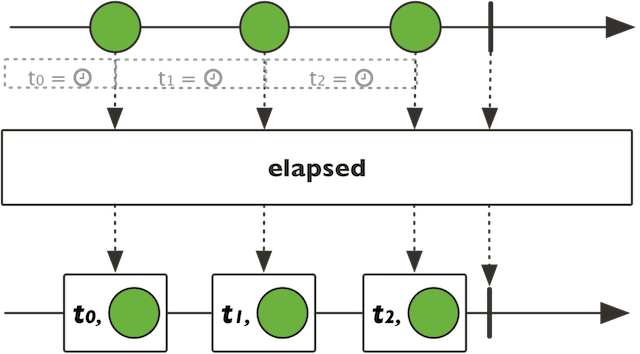

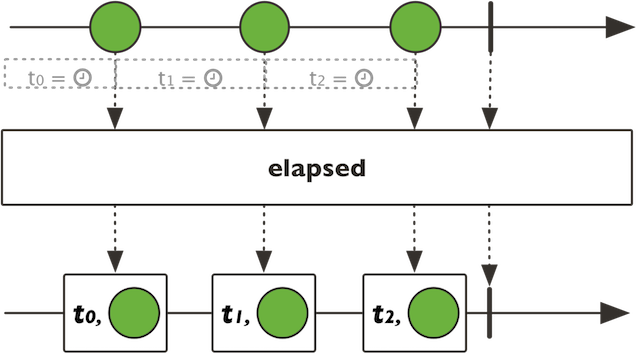

Map this Flux sequence into Tuple2 of T1 Long timemillis and T2 T associated data. The timemillis corresponds to the elapsed time between the subscription and the first next signal, or between two consecutive next signals.

a Scheduler instance to read time from

a transforming Flux that emits tuples of time elapsed in milliseconds and matching data

Map this Flux sequence into Tuple2 of T1 Long timemillis and T2 T associated data. The timemillis corresponds to the elapsed time between the subscription and the first next signal, or between two consecutive next signals.

a transforming Flux that emits tuples of time elapsed in milliseconds and matching data
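The timemillis rule can be sketched on a list of timestamped values. This is a hedged model, not the operator: `ElapsedModel` and `elapsed` are hypothetical names, and the timestamps are assumed to be given in milliseconds instead of being read from a Scheduler.

```scala
object ElapsedModel {
  // Model of the timemillis rule: the first value is paired with the time since
  // subscription, every later value with the time since the previous value.
  // `signals` holds (timestampMillis, value) pairs in emission order.
  def elapsed[T](subscribedAt: Long, signals: Seq[(Long, T)]): List[(Long, T)] = {
    val previous = subscribedAt +: signals.map(_._1) // reference point for each signal
    signals.zip(previous).map { case ((at, value), before) => (at - before, value) }.toList
  }
}
```

With a subscription at 1000 ms and values arriving at 1100, 1350 and 1400 ms, the model pairs the values with 100, 250 and 50 ms respectively.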

Emit only the element at the given index position, or signal the specified default value if the sequence is shorter.

Emit only the element at the given index position, or signal IndexOutOfBoundsException if the sequence is shorter.

Recursively expand elements into a graph and emit all the resulting elements using a breadth-first traversal strategy.

That is: emit the values from this Flux first, then expand each at a first level of recursion and emit all of the resulting values, then expand all of these at a second level and so on.

For example, given the hierarchical structure

A
- AA
  - aa1

B
- BB
  - bb1

Expands Flux.just(A, B) into

A B AA BB aa1 bb1

the Function1 applied at each level of recursion to expand values into a Publisher, producing a graph.

a breadth-first expanded Flux

Recursively expand elements into a graph and emit all the resulting elements using a breadth-first traversal strategy.

That is: emit the values from this Flux first, then expand each at a first level of recursion and emit all of the resulting values, then expand all of these at a second level and so on.

For example, given the hierarchical structure

A
- AA
  - aa1

B
- BB
  - bb1

Expands Flux.just(A, B) into

A B AA BB aa1 bb1

the Function1 applied at each level of recursion to expand values into a Publisher, producing a graph.

a capacity hint to prepare the inner queues to accommodate n elements per level of recursion.

a breadth-first expanded Flux

Recursively expand elements into a graph and emit all the resulting elements, in a depth-first traversal order.

That is: emit one value from this Flux, expand it and emit the first value at this first level of recursion, and so on. When no more recursion is possible, backtrack to the previous level and re-apply the strategy.

For example, given the hierarchical structure

A
- AA
  - aa1

B
- BB
  - bb1

Expands Flux.just(A, B) into

A AA aa1 B BB bb1

the Function1 applied at each level of recursion to expand values into a Publisher, producing a graph.

a Flux expanded depth-first

Recursively expand elements into a graph and emit all the resulting elements, in a depth-first traversal order.

That is: emit one value from this Flux, expand it and emit the first value at this first level of recursion, and so on. When no more recursion is possible, backtrack to the previous level and re-apply the strategy.

For example, given the hierarchical structure

A
- AA
  - aa1

B
- BB
  - bb1

Expands Flux.just(A, B) into

A AA aa1 B BB bb1

the Function1 applied at each level of recursion to expand values into a Publisher, producing a graph.

a capacity hint to prepare the inner queues to accommodate n elements per level of recursion.

a Flux expanded depth-first
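The two traversal orders can be compared on the example hierarchy with plain lists. The sketch below is a model only: `ExpandModel`, `children`, `expander`, `expand` and `expandDeep` are hypothetical names, the expander returns a List instead of a Publisher, and the structure is assumed to be a finite tree (a cyclic graph would never terminate).

```scala
object ExpandModel {
  // The example hierarchy, as a lookup from a node to its direct children.
  val children: Map[String, List[String]] = Map(
    "A" -> List("AA"), "AA" -> List("aa1"),
    "B" -> List("BB"), "BB" -> List("bb1")
  )
  val expander: String => List[String] = node => children.getOrElse(node, Nil)

  // Breadth-first model: emit a whole level, then expand every element of it.
  def expand[T](roots: List[T])(f: T => List[T]): List[T] = {
    @scala.annotation.tailrec
    def loop(level: List[T], acc: Vector[T]): Vector[T] =
      if (level.isEmpty) acc else loop(level.flatMap(f), acc ++ level)
    loop(roots, Vector.empty).toList
  }

  // Depth-first model: emit a node, then fully expand it before moving
  // on to its next sibling.
  def expandDeep[T](roots: List[T])(f: T => List[T]): List[T] =
    roots.flatMap(node => node :: expandDeep(f(node))(f))
}
```

Starting from `List("A", "B")`, `expand` yields `A B AA BB aa1 bb1` and `expandDeep` yields `A AA aa1 B BB bb1`.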

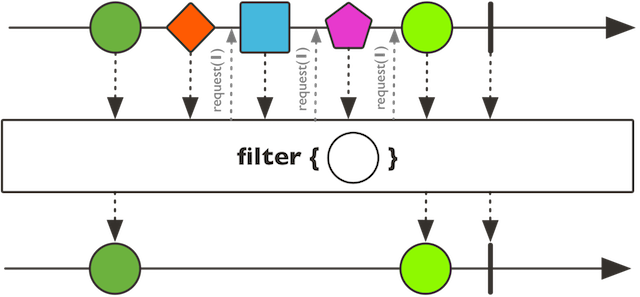

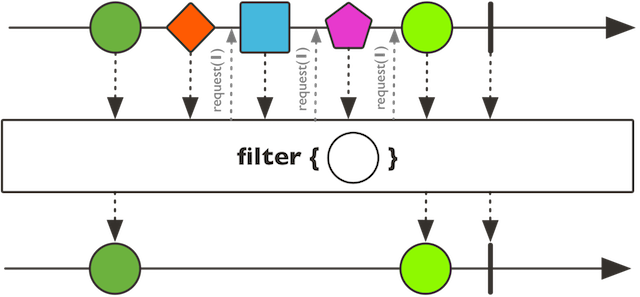

Evaluate each accepted value against the given predicate T => Boolean. If the predicate test succeeds, the value is passed into the new Flux. If the predicate test fails, the value is ignored and a request of 1 is emitted.

the Function1 predicate to test values against

a new Flux containing only values that pass the predicate test

Test each value emitted by this Flux asynchronously using a generated Publisher[Boolean] test. A value is replayed if the first item emitted by its corresponding test is true. It is dropped if its test is either empty or its first emitted value is false.

Note that only the first value of the test publisher is considered, and unless it is a Mono, test will be cancelled after receiving that first value. Test publishers are generated and subscribed to in sequence.

the function generating a Publisher of Boolean for each value, to filter the Flux with

the maximum expected number of values to hold pending a result of their respective asynchronous predicates, rounded to the next power of two. This is capped depending on the size of the heap and the JVM limits, so be careful with large values (although e.g. 65536 should still be fine). Also serves as the initial request size for the source.

a filtered Flux
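The keep/drop rule can be illustrated with a small synchronous model. This is a sketch under simplifying assumptions: `FilterWhenSketch` is a hypothetical name, each asynchronous test Publisher is replaced by a plain `Seq[Boolean]`, and buffering and cancellation are not modelled.

```scala
object FilterWhenSketch {
  // Keep a value only when the FIRST item of its test sequence is true.
  // An empty test, or one whose first item is false, drops the value.
  def filterWhen[T](source: Seq[T])(test: T => Seq[Boolean]): Seq[T] =
    source.filter(value => test(value).headOption.contains(true))
}
```

Only the head of each test sequence is inspected, which mirrors the note that later items of the test publisher are ignored.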

Test each value emitted by this Flux asynchronously using a generated Publisher[Boolean] test. A value is replayed if the first item emitted by its corresponding test is true. It is dropped if its test is either empty or its first emitted value is false.

Note that only the first value of the test publisher is considered, and unless it is a Mono, test will be cancelled after receiving that first value. Test publishers are generated and subscribed to in sequence.

the function generating a Publisher of Boolean for each value, to filter the Flux with

a filtered Flux

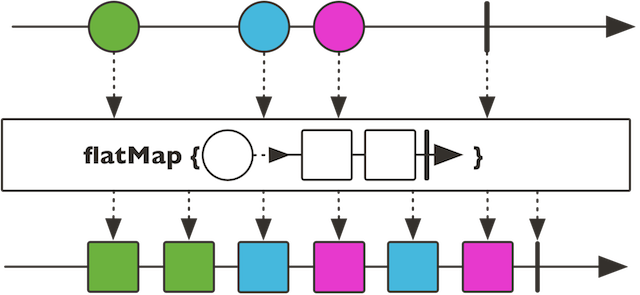

Transform the signals emitted by this Flux into Publishers, then flatten the emissions from those by merging them into a single Flux, so that they may interleave. An onError signal is transformed into a completion signal after its mapping callback has been applied.

the output Publisher type target

the Function1 to call on next data and returning a sequence to merge

the Function to call on error signal and returning a sequence to merge

the Function1 to call on complete signal and returning a sequence to merge

a new Flux

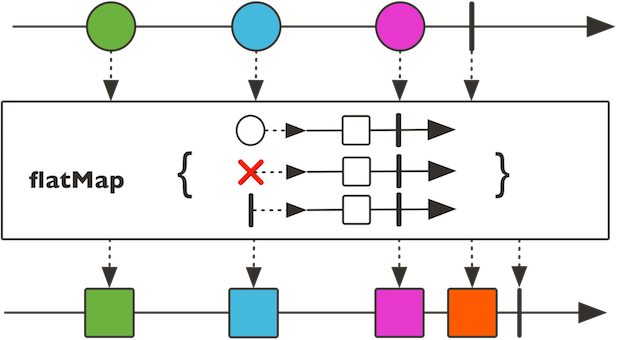

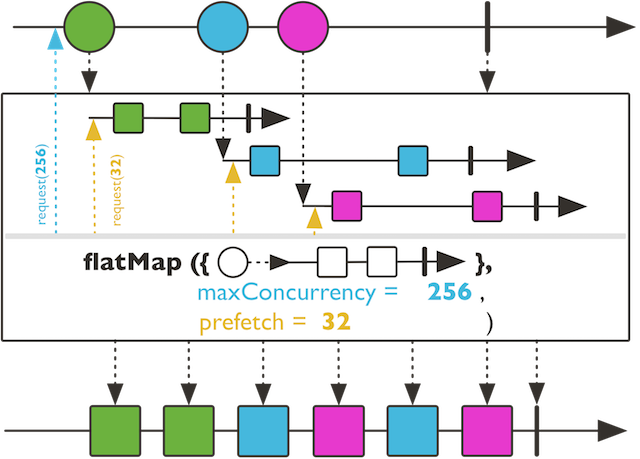

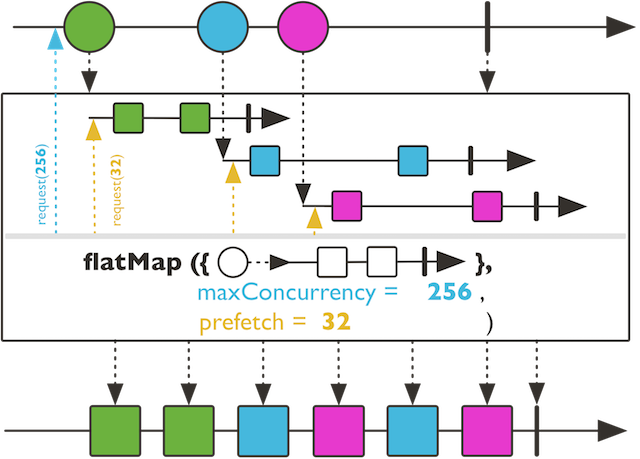

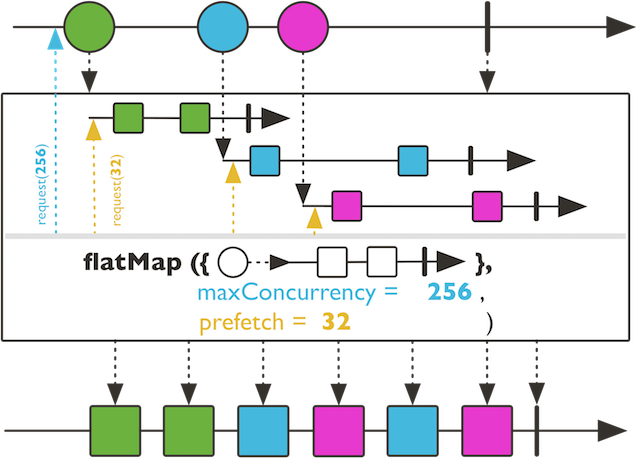

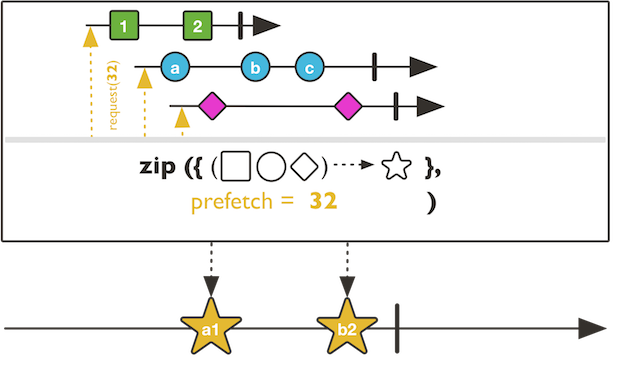

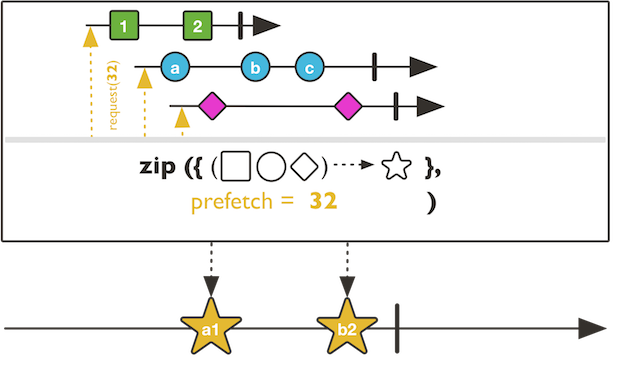

Transform the items emitted by this Flux into Publishers, then flatten the emissions from those by merging them into a single Flux, so that they may interleave. The concurrency argument controls how many inner Publishers can be merged in parallel. The prefetch argument sets the prefetch size for each merged Publisher.

the merged output sequence type

the Function1 to transform input sequence into N sequences Publisher

the maximum in-flight elements from this Flux sequence

the maximum in-flight elements from each inner Publisher sequence

a merged Flux

Transform the items emitted by this Flux into Publishers, then flatten the emissions from those by merging them into a single Flux, so that they may interleave. The concurrency argument controls how many inner Publishers can be merged in parallel.

the merged output sequence type

the Function1 to transform input sequence into N sequences Publisher

the maximum in-flight elements from this Flux sequence

a new Flux

Transform the items emitted by this Flux into Publishers, then flatten the emissions from those by merging them into a single Flux, so that they may interleave.

the merged output sequence type

the Function1 to transform input sequence into N sequences Publisher

a new Flux

Transform the items emitted by this Flux into Publishers, then flatten the emissions from those by merging them into a single Flux, so that they may interleave. The concurrency argument controls how many inner Publishers can be merged in parallel. The prefetch argument sets the prefetch size for each merged Publisher. This variant will delay any error until after the rest of the flatMap backlog has been processed.

the merged output sequence type

the Function1 to transform input sequence into N sequences Publisher

the maximum in-flight elements from this Flux sequence

the maximum in-flight elements from each inner Publisher sequence

a merged Flux

Transform the items emitted by this Flux into Iterable, then flatten the emissions from those by merging them into a single Flux. The prefetch argument sets the prefetch size for the merged Iterable.

the merged output sequence type

the Function1 to transform input sequence into N sequences Iterable

the maximum in-flight elements from each inner Iterable sequence

a merged Flux

Transform the items emitted by this Flux into Iterable, then flatten the elements from those by merging them into a single Flux. The prefetch argument sets the prefetch size for the merged Iterable.

the merged output sequence type

the Function1 to transform input sequence into N sequences Iterable

a merged Flux

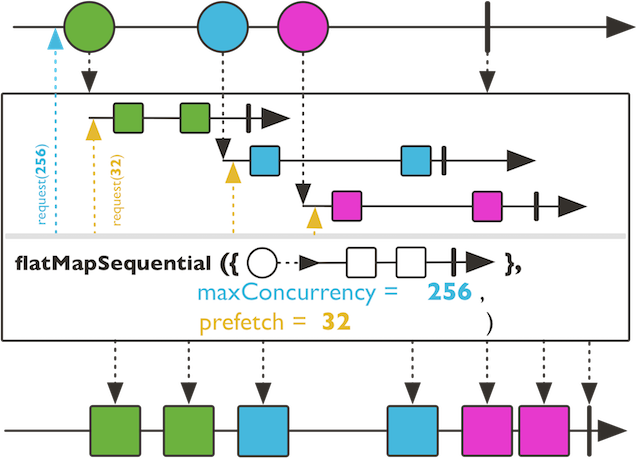

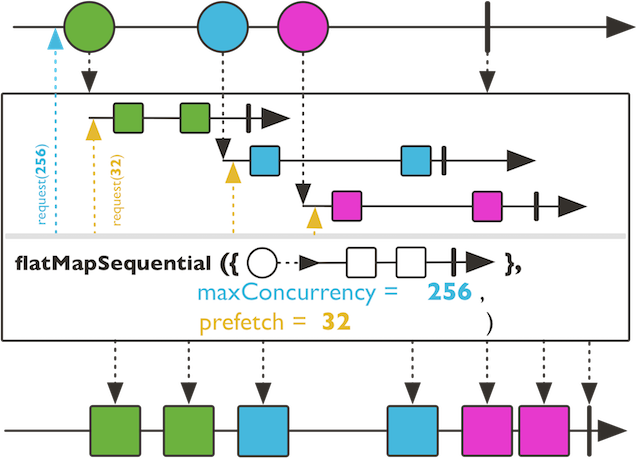

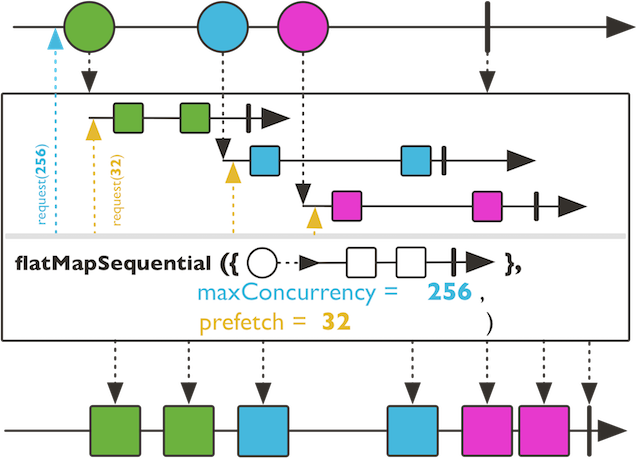

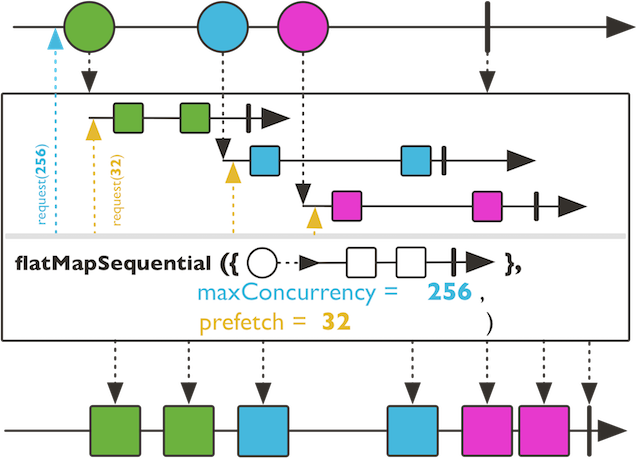

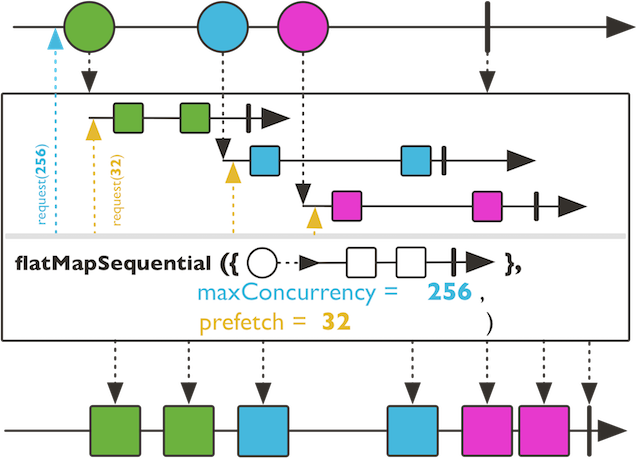

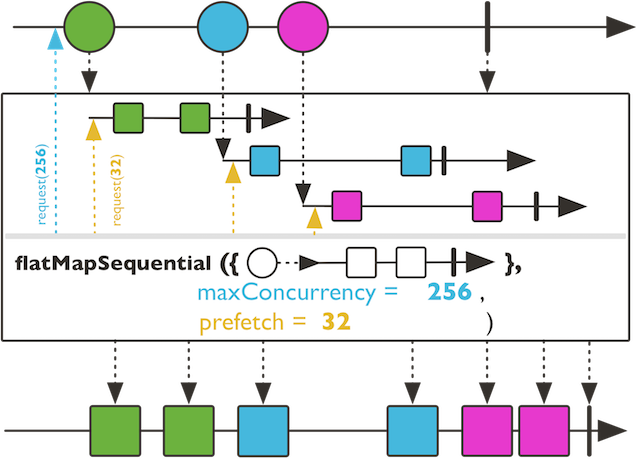

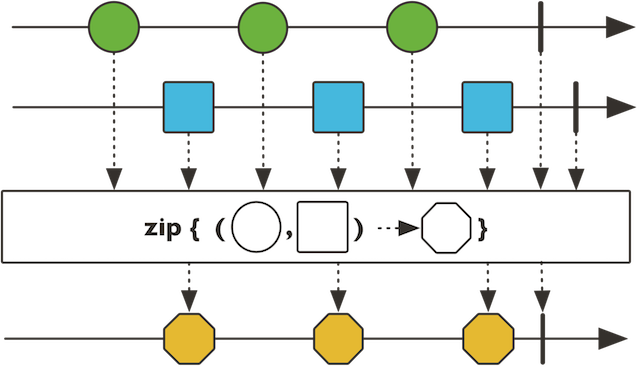

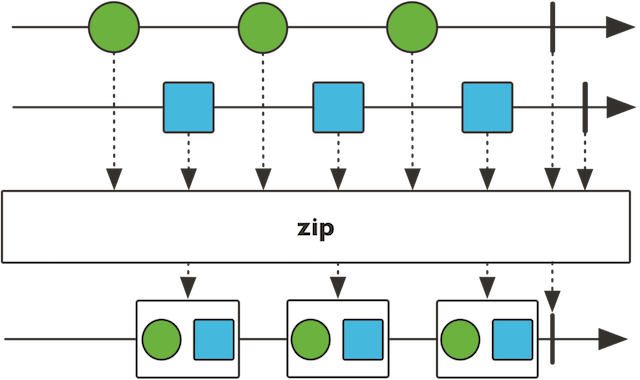

Transform the items emitted by this Flux into Publishers, then flatten the emissions from those by merging them into a single Flux, in order. Unlike concatMap, transformed inner Publishers are subscribed to eagerly. Unlike flatMap, their emitted elements are merged respecting the order of the original sequence. The concurrency argument controls how many inner Publishers can be merged in parallel. The prefetch argument sets the prefetch size for each merged Publisher.

the merged output sequence type

the Function1 to transform input sequence into N sequences Publisher

the maximum in-flight elements from this Flux sequence

the maximum in-flight elements from each inner Publisher sequence

a merged Flux

Transform the items emitted by this Flux into Publishers, then flatten the emissions from those by merging them into a single Flux, in order. Unlike concatMap, transformed inner Publishers are subscribed to eagerly. Unlike flatMap, their emitted elements are merged respecting the order of the original sequence. The concurrency argument controls how many inner Publishers can be merged in parallel.

the merged output sequence type

the Function1 to transform input sequence into N sequences Publisher

the maximum in-flight elements from this Flux sequence

a merged Flux

Transform the items emitted by this Flux into Publishers, then flatten the emissions from those by merging them into a single Flux, in order. Unlike concatMap, transformed inner Publishers are subscribed to eagerly. Unlike flatMap, their emitted elements are merged respecting the order of the original sequence.

the merged output sequence type

the Function1 to transform input sequence into N sequences Publisher

a merged Flux

Transform the items emitted by this Flux into Publishers, then flatten the emissions from those by merging them into a single Flux, in order. Unlike concatMap, transformed inner Publishers are subscribed to eagerly. Unlike flatMap, their emitted elements are merged respecting the order of the original sequence. The concurrency argument controls how many inner Publishers can be merged in parallel. The prefetch argument sets the prefetch size for each merged Publisher. This variant will delay any error until after the rest of the flatMap backlog has been processed.

the merged output sequence type

the Function1 to transform input sequence into N sequences Publisher

the maximum in-flight elements from this Flux sequence

the maximum in-flight elements from each inner Publisher sequence

a merged Flux, subscribing early but keeping the original ordering
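The combination of eager subscription and ordered output can be approximated with standard-library Futures. The following is an analogy rather than Reactor code: `SequentialMergeSketch`, `eagerInOrder` and `delayed` are hypothetical names, each inner Publisher is replaced by a `Future[List[B]]`, and concurrency limits, prefetch and error delaying are not modelled.

```scala
import scala.concurrent.{Await, Future}
import scala.concurrent.duration._

object SequentialMergeSketch {
  import scala.concurrent.ExecutionContext.Implicits.global

  // Hypothetical inner source: produces its values after a delay.
  def delayed[B](millis: Long)(values: B*): Future[List[B]] =
    Future { Thread.sleep(millis); values.toList }

  // All inner computations are started up front (eager, like flatMap),
  // but results are read back in source order (ordered, like concatMap).
  def eagerInOrder[A, B](source: List[A])(mapper: A => Future[List[B]]): List[B] = {
    val started = source.map(mapper) // every Future is already running here
    started.flatMap(inner => Await.result(inner, 5.seconds))
  }
}
```

Even when a later inner source finishes first, its values are only released after those of the sources that precede it in the original sequence.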

The prefetch configuration of the Flux

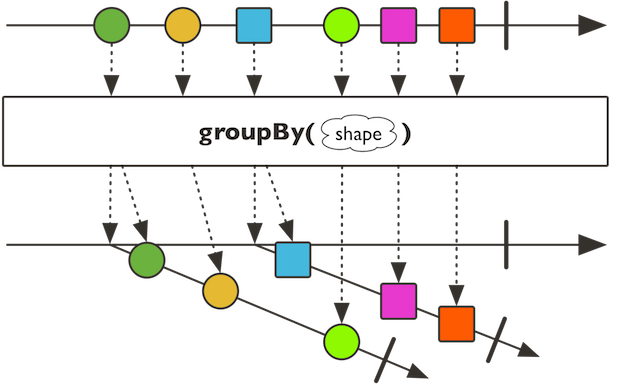

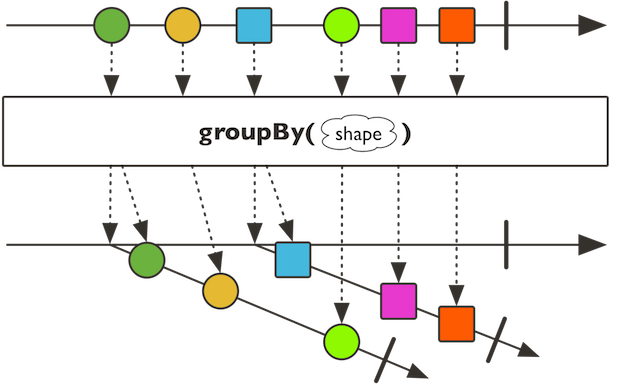

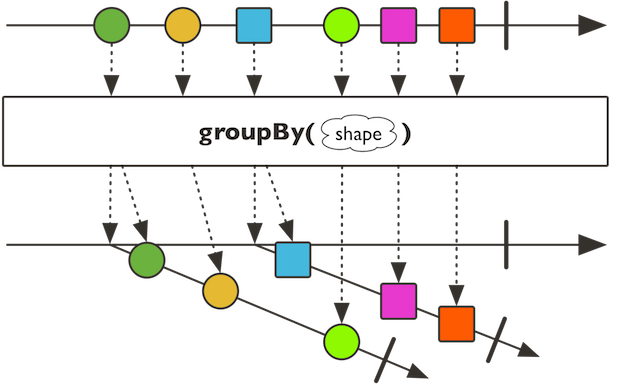

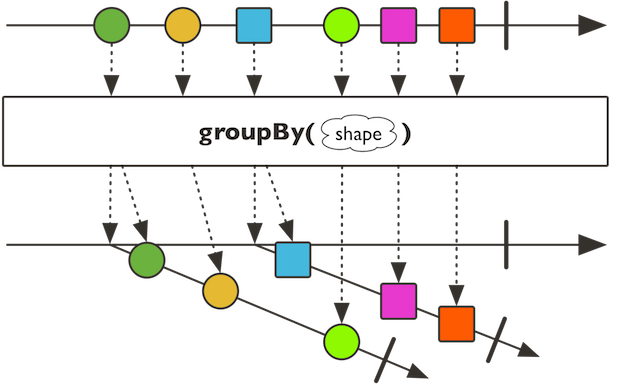

Re-route this sequence into dynamically created Flux for each unique key evaluated by the given key mapper. It will use the given value mapper to extract the element to route.

the key type extracted from each value of this sequence

the value type extracted from each value of this sequence

the key mapping function that evaluates each incoming element and returns its key.

the value mapping function that evaluates which data to extract for re-routing.

the number of values to prefetch from the source

a Flux of GroupedFlux grouped sequences

Re-route this sequence into dynamically created Flux for each unique key evaluated by the given key mapper. It will use the given value mapper to extract the element to route.

the key type extracted from each value of this sequence

the value type extracted from each value of this sequence

the key mapping function that evaluates each incoming element and returns its key.

the value mapping function that evaluates which data to extract for re-routing.

a Flux of GroupedFlux grouped sequences
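The roles of the key mapper and the value mapper can be shown on an ordinary collection. This sketch is eager and finite, unlike the streaming GroupedFlux sequences; `GroupBySketch` and `route` are hypothetical names used only for illustration.

```scala
object GroupBySketch {
  // The key mapper decides which group an element is routed to;
  // the value mapper decides what is emitted inside that group.
  // Arrival order is preserved within each group.
  def route[T, K, V](source: Seq[T])(keyMapper: T => K, valueMapper: T => V): Map[K, Seq[V]] =
    source.groupBy(keyMapper).map { case (key, members) => key -> members.map(valueMapper) }
}
```

For example, routing words by their first letter while extracting their length puts the lengths of "apple" and "avocado" in group 'a' and the length of "banana" in group 'b'.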

Re-route this sequence into dynamically created Flux for each unique key evaluated by the given key mapper.

the key type extracted from each value of this sequence

the key mapping Function1 that evaluates each incoming element and returns its key.

the number of values to prefetch from the source

a Flux of GroupedFlux grouped sequences

Re-route this sequence into dynamically created Flux for each unique key evaluated by the given key mapper.

the key type extracted from each value of this sequence

the key mapping Function1 that evaluates each incoming element and returns its key.

a Flux of GroupedFlux grouped sequences

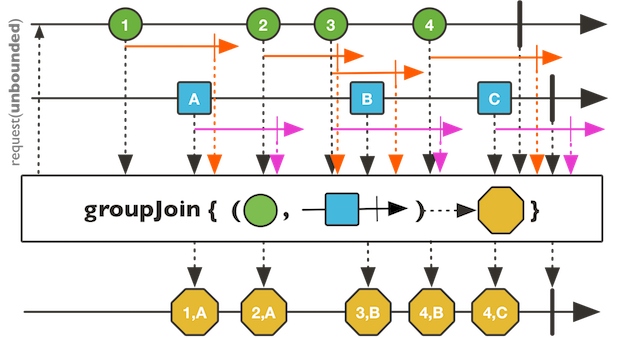

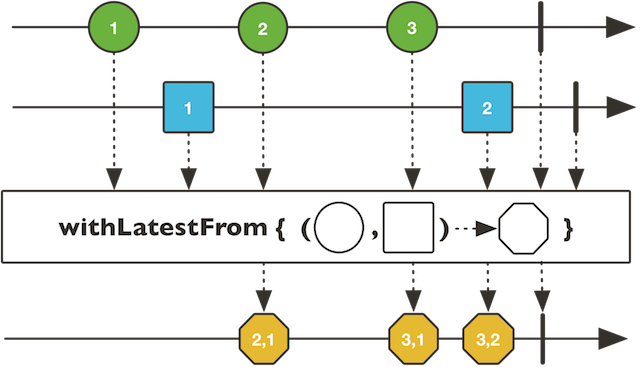

Returns a Flux that correlates two Publishers when they overlap in time and groups the results.

There are no guarantees in what order the items get combined when multiple items from one or both source Publishers overlap.

Unlike Flux.join, items from the right Publisher will be streamed into the right resultSelector argument Flux.

the type of the right Publisher

this Flux timeout type

the right Publisher timeout type

the combined result type

the other Publisher to correlate items from the source Publisher with

a function that returns a Publisher whose emissions indicate the duration of the values of the source Publisher

a function that returns a Publisher whose emissions indicate the duration of the values of the right Publisher

a function that takes an item emitted by each Publisher and returns the value to be emitted by the resulting Publisher

a joining Flux

Handle the items emitted by this Flux by calling a BiConsumer with the output sink for each onNext. For each call, at most one SynchronousSink.next may be performed, along with at most one SynchronousSink.error or SynchronousSink.complete.

the transformed type

the handling BiConsumer

a transformed Flux
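The contract of the handler can be sketched with a tiny stand-in for the sink. Everything here is hypothetical and simplified: `HandleSketch.Sink` only imitates the `next` and `complete` calls named above, errors are not modelled, and the source is a plain `Seq`.

```scala
object HandleSketch {
  // Hypothetical stand-in for SynchronousSink: accepts at most one value per input.
  final class Sink[R] {
    private var emitted: Option[R] = None
    private[HandleSketch] var completed = false

    def next(value: R): Unit = {
      require(emitted.isEmpty, "next may be called at most once per input")
      emitted = Some(value)
    }

    def complete(): Unit = completed = true

    // Hand over the pending value (if any) and reset for the next input.
    private[HandleSketch] def take(): Option[R] = {
      val pending = emitted
      emitted = None
      pending
    }
  }

  // Call the handler once per input; it may emit zero or one value,
  // and may complete the output early.
  def handle[T, R](source: Seq[T])(handler: (T, Sink[R]) => Unit): List[R] = {
    val sink = new Sink[R]
    val out = List.newBuilder[R]
    val inputs = source.iterator
    while (inputs.hasNext && !sink.completed) {
      handler(inputs.next(), sink)
      sink.take().foreach(value => out += value)
    }
    out.result()
  }
}
```

Because the handler may skip the `next` call or call `complete`, a single callback can act as a combined map, filter and early termination.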

Emit a single boolean true if any of the values of this Flux sequence match the constant.

The implementation uses short-circuit logic and completes with true if the constant matches a value.

constant compared to incoming signals

a new Mono with true if any value satisfies a predicate and false otherwise

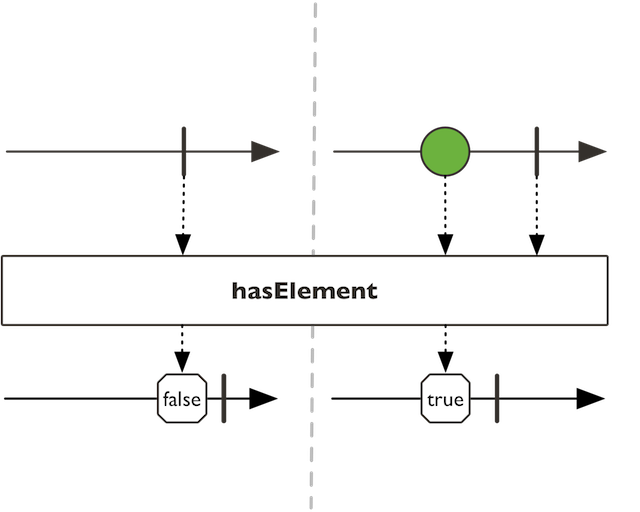

Emit a single boolean true if this Flux sequence has at least one element.

Hides the identities of this Flux and its Subscription as well.

Ignores onNext signals (dropping them) and only reacts on termination.

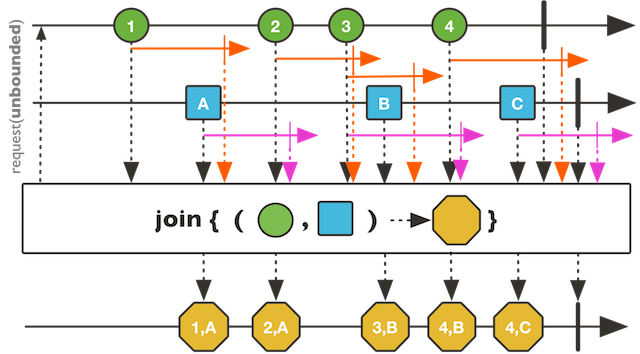

Returns a Flux that correlates two Publishers when they overlap in time and groups the results.

There are no guarantees in what order the items get combined when multiple items from one or both source Publishers overlap.

the type of the right Publisher

this Flux timeout type

the right Publisher timeout type

the combined result type

the other Publisher to correlate items from the source Publisher with

a function that returns a Publisher whose emissions indicate the duration of the values of the source Publisher

a function that returns a Publisher whose emissions indicate the duration of the values of the right Publisher

a function that takes an item emitted by each Publisher and returns the value to be emitted by the resulting Publisher

a joining Flux

Return defined identifier

defined identifier

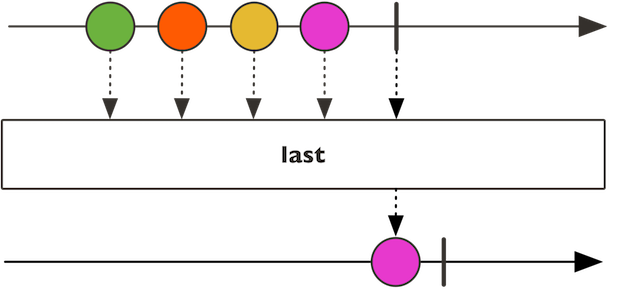

Signal the last element observed before complete signal or emit the defaultValue if empty. For a passive version use Flux.takeLast

Signal the last element observed before complete signal or emit NoSuchElementException error if the source was empty. For a passive version use Flux.takeLast

a limited Flux

Ensure that backpressure signals from downstream subscribers are capped at the provided prefetchRate when propagated upstream, effectively rate limiting the upstream Publisher.

Typically used for scenarios where consumer(s) request a large amount of data (e.g. Long.MaxValue) but the data source behaves better or can be optimized with smaller requests (e.g. database paging). All data is still processed.

Equivalent to flux.publishOn(Schedulers.immediate(), prefetchRate).subscribe()

the limit to apply to downstream's backpressure

a Flux limiting downstream's backpressure

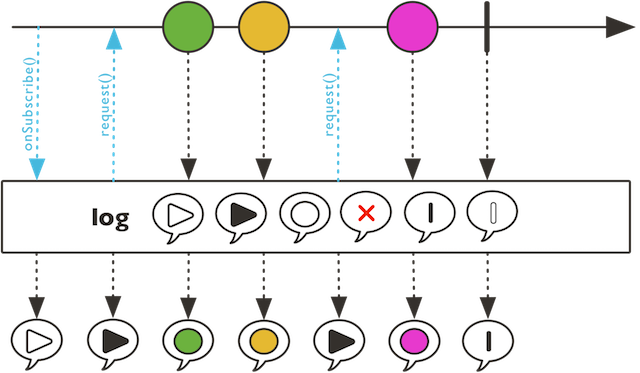

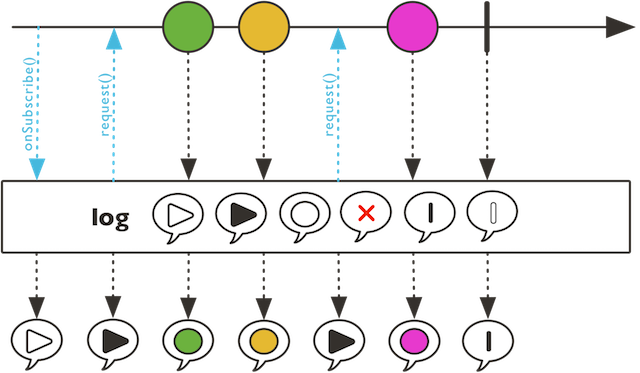

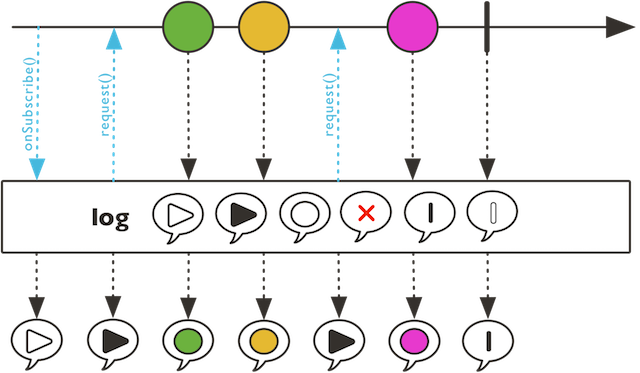

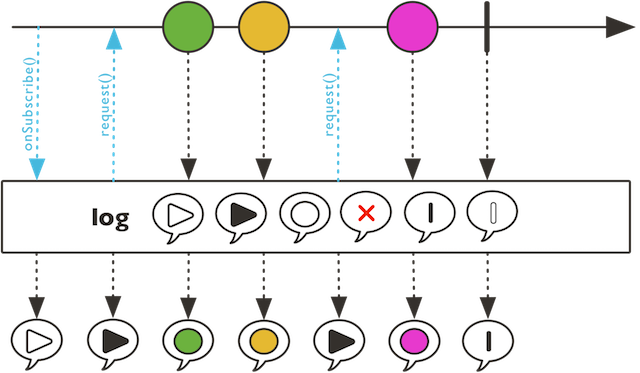

Observe Reactive Streams signals matching the passed filter options and use Logger support to handle trace implementation. Default will use the passed Level and java.util.logging. If SLF4J is available, it will be used instead.

Options allow fine grained filtering of the traced signal, for instance to only capture onNext and onError:

flux.log("category", Level.INFO, SignalType.ON_NEXT, SignalType.ON_ERROR)

@param category to be mapped into logger configuration (e.g. org.springframework.reactor). If category ends with "." like "reactor.", a generated operator suffix will complete, e.g. "reactor.Flux.Map".

@param level the Level to enforce for this tracing Flux (only FINEST, FINE, INFO, WARNING and SEVERE are taken into account)

@param showOperatorLine capture the current stack to display operator class/line number.

@param options a vararg SignalType option to filter log messages

@return a new unaltered Flux

Observe Reactive Streams signals matching the passed filter options and use Logger support to handle trace implementation. Default will use the passed Level and java.util.logging. If SLF4J is available, it will be used instead.

Options allow fine grained filtering of the traced signal, for instance to only capture onNext and onError:

flux.log("category", Level.INFO, SignalType.ON_NEXT, SignalType.ON_ERROR)

@param category to be mapped into logger configuration (e.g. org.springframework.reactor). If category ends with "." like "reactor.", a generated operator suffix will complete, e.g. "reactor.Flux.Map".

@param level the Level to enforce for this tracing Flux (only FINEST, FINE, INFO, WARNING and SEVERE are taken into account)

@param options a vararg SignalType option to filter log messages

@return a new unaltered Flux

Observe all Reactive Streams signals and use Logger support to handle trace implementation. Default will use Level.INFO and java.util.logging. If SLF4J is available, it will be used instead.

to be mapped into logger configuration (e.g. org.springframework.reactor). If category ends with "." like "reactor.", a generated operator suffix will complete, e.g. "reactor.Flux.Map".

a new unaltered Flux

Observe all Reactive Streams signals and use Logger support to handle trace implementation. Default will use Level.INFO and java.util.logging. If SLF4J is available, it will be used instead.

The default log category will be "reactor.*", a generated operator suffix will complete, e.g. "reactor.Flux.Map".

a new unaltered Flux

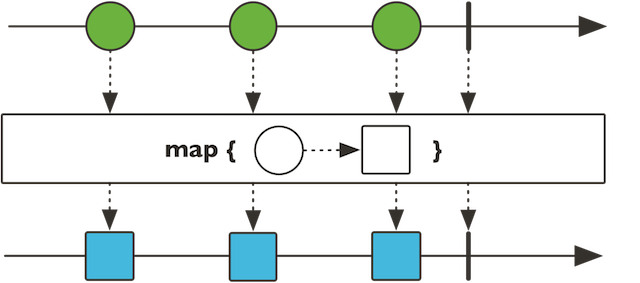

Transform the items emitted by this Flux by applying a function to each item.

the transformed type

the transforming Function1

a transformed Flux

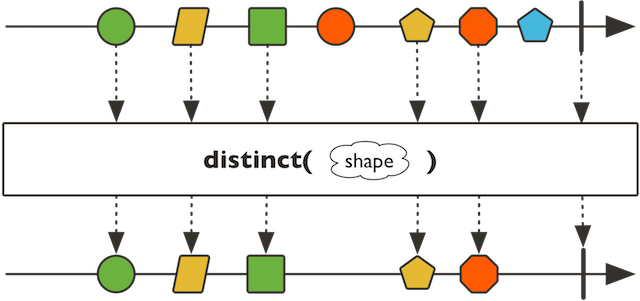

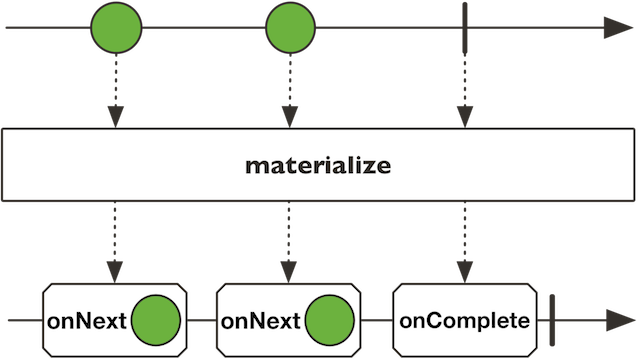

Transform the incoming onNext, onError and onComplete signals into Signal. Since the error is materialized as a Signal, the propagation will be stopped and onComplete will be emitted. Complete signal will first emit a Signal.complete and then effectively complete the flux.

a Flux of materialized Signal
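The mapping from signals to materialized elements can be sketched with a small algebraic data type. This is a plain-Scala model, not the library's Signal class: `MaterializeSketch` and its `OnNext`, `OnError` and `OnComplete` cases are hypothetical, and the source is represented as a list of `Try` values in which the first `Failure` stands for the onError signal.

```scala
import scala.util.{Failure, Success, Try}

object MaterializeSketch {
  sealed trait Signal[+T]
  final case class OnNext[T](value: T) extends Signal[T]
  final case class OnError(cause: Throwable) extends Signal[Nothing]
  case object OnComplete extends Signal[Nothing]

  // Values become OnNext elements. The first failure becomes an OnError
  // element and ends the output; otherwise a final OnComplete element is added.
  def materialize[T](events: List[Try[T]]): List[Signal[T]] = {
    val (beforeError, rest) = events.span(_.isSuccess)
    val values: List[Signal[T]] = beforeError.collect { case Success(v) => OnNext(v) }
    rest.headOption match {
      case Some(Failure(cause)) => values :+ OnError(cause)
      case _                    => values :+ OnComplete
    }
  }
}
```

In both cases the resulting sequence itself terminates normally, since the terminal signal has been turned into an ordinary element.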

Merge emissions of this Flux with the provided Publisher, so that they may interleave.

Give a name to this sequence, which can be retrieved using reactor.core.scala.Scannable.name() as long as this is the first reachable reactor.core.scala.Scannable.parents().

a name for the sequence

the same sequence, but bearing a name

Emit only the first item emitted by this Flux.

Evaluate each accepted value against the given Class type. If the predicate test succeeds, the value is passed into the new Flux. If the predicate test fails, the value is ignored and a request of 1 is emitted.

the Class type to test values against

a new Flux reduced to items converted to the matched type

Request an unbounded demand and push to the returned Flux, or park the observed elements if not enough demand is requested downstream, within a maxSize limit. Over that limit, the overflow strategy is applied (see BufferOverflowStrategy).

A Consumer is immediately invoked when there is an overflow, receiving the value that was discarded because of the overflow (which can be different from the latest element emitted by the source in case of a DROP_LATEST strategy).

Note that for the ERROR strategy, the overflow error will be delayed after the current backlog is consumed. The consumer is still invoked immediately.

maximum buffer backlog size before overflow callback is called

callback to invoke on overflow

strategy to apply to overflowing elements

a buffering Flux

Request an unbounded demand and push to the returned Flux, or park the observed elements if not enough demand is requested downstream, within a maxSize limit. Over that limit, the overflow strategy is applied (see BufferOverflowStrategy).

Note that for the ERROR strategy, the overflow error will be delayed after the current backlog is consumed.

maximum buffer backlog size before overflow strategy is applied

strategy to apply to overflowing elements

a buffering Flux
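The effect of an overflow strategy on a bounded backlog can be modelled with a small queue. This is a sketch, not the operator itself: `OverflowBufferSketch`, `BoundedBuffer`, `DropLatest` and `DropOldest` are hypothetical names (the latter assumed to mirror a drop-oldest strategy), demand is reduced to an explicit `drain()` call, and the ERROR strategy is left out.

```scala
import scala.collection.mutable

object OverflowBufferSketch {
  sealed trait Strategy
  case object DropLatest extends Strategy // discard the incoming element
  case object DropOldest extends Strategy // discard the oldest parked element

  final class BoundedBuffer[T](maxSize: Int, strategy: Strategy, onOverflow: T => Unit) {
    private val queue = mutable.Queue.empty[T]

    // Park the value while there is room; otherwise apply the strategy and
    // immediately hand the discarded value to the overflow callback.
    def offer(value: T): Unit =
      if (queue.size < maxSize) {
        queue.enqueue(value)
      } else strategy match {
        case DropLatest =>
          onOverflow(value)
        case DropOldest =>
          onOverflow(queue.dequeue())
          queue.enqueue(value)
      }

    // Simulate downstream demand arriving: release everything parked so far.
    def drain(): List[T] = {
      val parked = queue.toList
      queue.clear()
      parked
    }
  }
}
```

With a limit of two and four offered values, the drop-latest variant keeps the first two values and reports the last two to the callback, while the drop-oldest variant keeps the last two and reports the first two.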

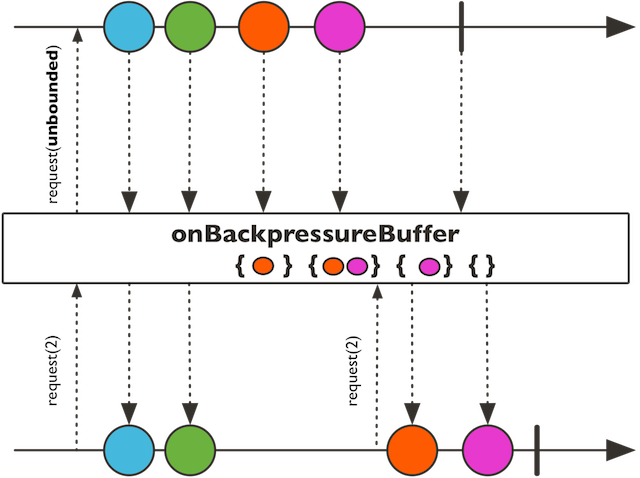

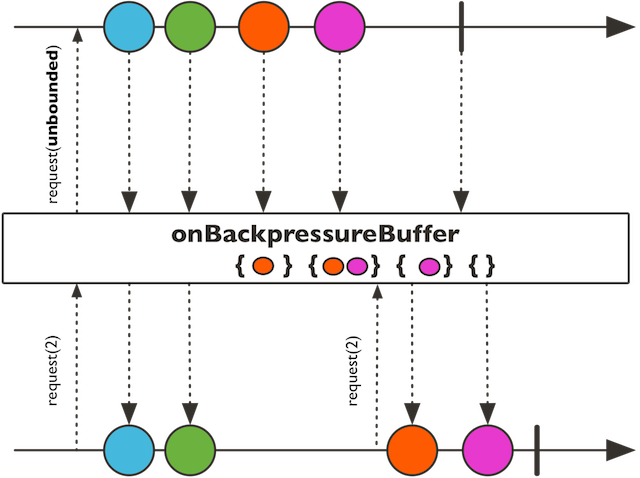

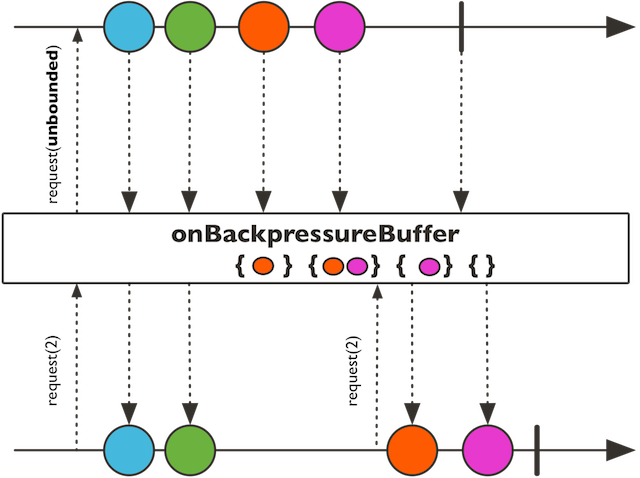

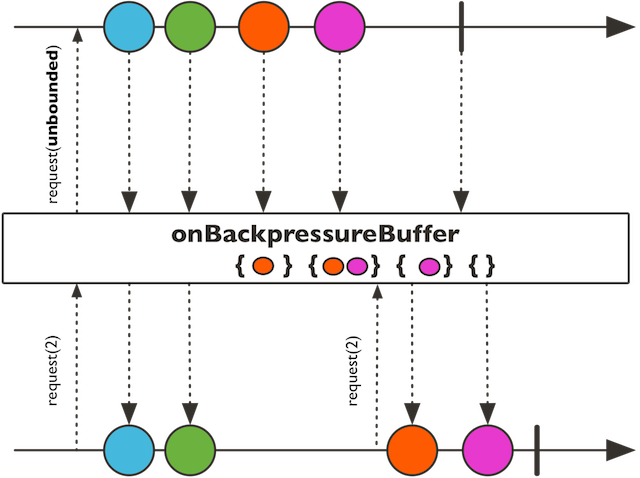

Request an unbounded demand and push to the returned Flux, or park the observed elements if not enough demand is requested downstream. The overflow error will be delayed until after the current backlog is consumed. However, the onOverflow callback will be invoked immediately.

maximum buffer backlog size before overflow callback is called

callback to invoke on overflow

a buffering Flux

Request an unbounded demand and push to the returned Flux, or park the observed elements if not enough demand is requested downstream. Errors will be immediately emitted on overflow regardless of the pending buffer.

maximum buffer backlog size before immediate error

a buffering Flux

Request an unbounded demand and push to the returned Flux, or park the observed elements if not enough demand is requested downstream.

Request an unbounded demand and push to the returned Flux, or drop the observed elements and notify the dropping consumer with them if not enough demand is requested downstream.

the Consumer called when a value gets dropped due to lack of downstream requests

a dropping Flux

Request an unbounded demand and push to the returned Flux, or drop the observed elements if not enough demand is requested downstream.

Request an unbounded demand and push to the returned Flux, or emit onError from reactor.core.Exceptions.failWithOverflow if not enough demand is requested downstream.

Request an unbounded demand and push to the returned Flux, or only keep the most recent observed item if not enough demand is requested downstream.

Transform the error emitted by this Flux by applying a function if the error matches the given predicate, otherwise let the error flow.

Transform the error emitted by this Flux by applying a function if the error matches the given type, otherwise let the error flow.

![maperror marble diagram](https://raw.githubusercontent.com/reactor/reactor-core/v3.1.0.RC1/src/docs/marble/maperror.png)

the error type

the class of the exception type to react to

the error transforming Function1

a transformed Flux

Transform the error emitted by this Flux by applying a function.

Subscribe to a returned fallback publisher when an error matching the given type occurs.

Subscribe to a returned fallback publisher when any error occurs.

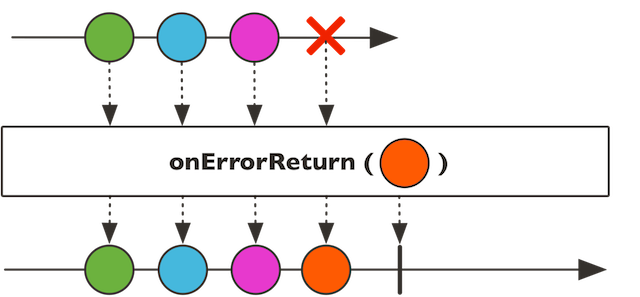

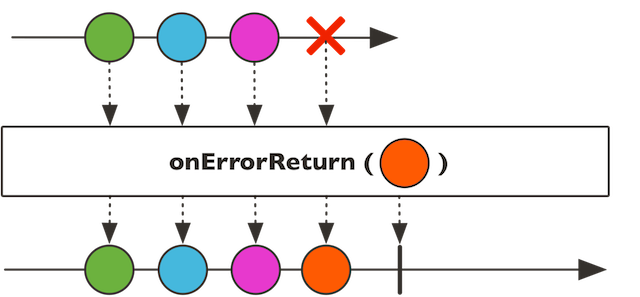

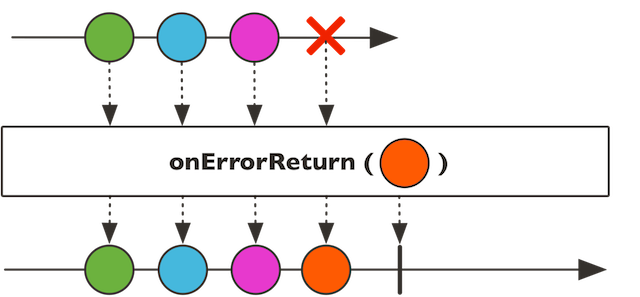

Fallback to the given value if an error matching the given predicate is observed on this Flux

the error predicate to match

alternate value on fallback

a new Flux

Fallback to the given value if an error of a given type is observed on this Flux

the error type

the error type to match

alternate value on fallback

a new Flux

Fallback to the given value if an error is observed on this Flux

alternate value on fallback

a new Flux

Detaches both the child Subscriber and the Subscription on termination or cancellation.

Pick the first Publisher between this Flux and another publisher to emit any signal (onNext/onError/onComplete) and replay all signals from that Publisher, effectively behaving like the fastest of these competing sources.

the Publisher to race with

the fastest sequence

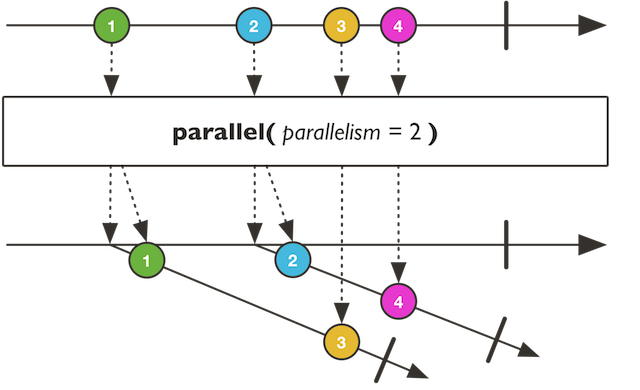

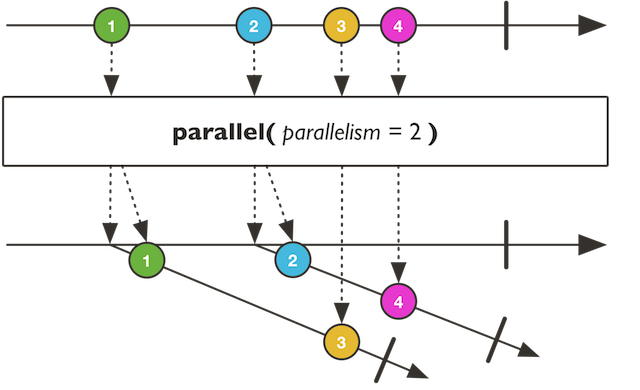

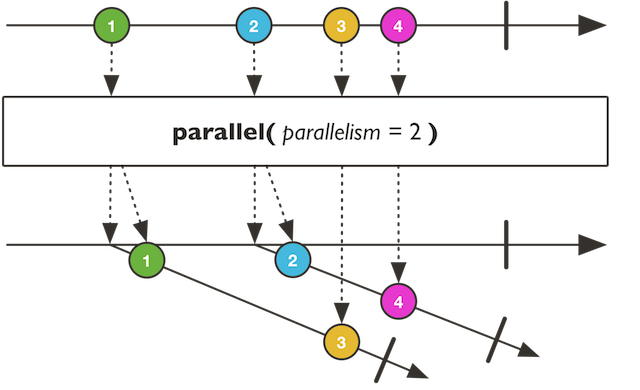

Prepare to consume this Flux on parallelism number of 'rails' in round-robin fashion and use custom prefetch amount and queue for dealing with the source Flux's values.

the number of parallel rails

the number of values to prefetch from the source

a new ParallelFlux instance

Prepare to consume this Flux on parallelism number of 'rails' in round-robin fashion.

the number of parallel rails

a new ParallelFlux instance

Prepare to consume this Flux on a number of 'rails' matching the number of CPUs, in round-robin fashion.

a new ParallelFlux instance
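Round-robin distribution onto rails can be pictured with a plain collection. This is only a model of the assignment rule, with hypothetical names (`RoundRobinSketch`, `rails`); prefetching, queues and the actual parallel execution of each rail are left out.

```scala
object RoundRobinSketch {
  // Element i of the source goes to rail (i % parallelism),
  // so consecutive elements land on consecutive rails.
  def rails[T](source: Seq[T], parallelism: Int): Vector[Vector[T]] = {
    require(parallelism > 0, "parallelism must be positive")
    source.zipWithIndex.foldLeft(Vector.fill(parallelism)(Vector.empty[T])) {
      case (acc, (value, index)) =>
        val rail = index % parallelism
        acc.updated(rail, acc(rail) :+ value)
    }
  }
}
```

With three rails, the values 1 to 7 are split into (1, 4, 7), (2, 5) and (3, 6).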

Shares a sequence for the duration of a function that may transform it and consume it as many times as necessary without causing multiple subscriptions to the upstream.

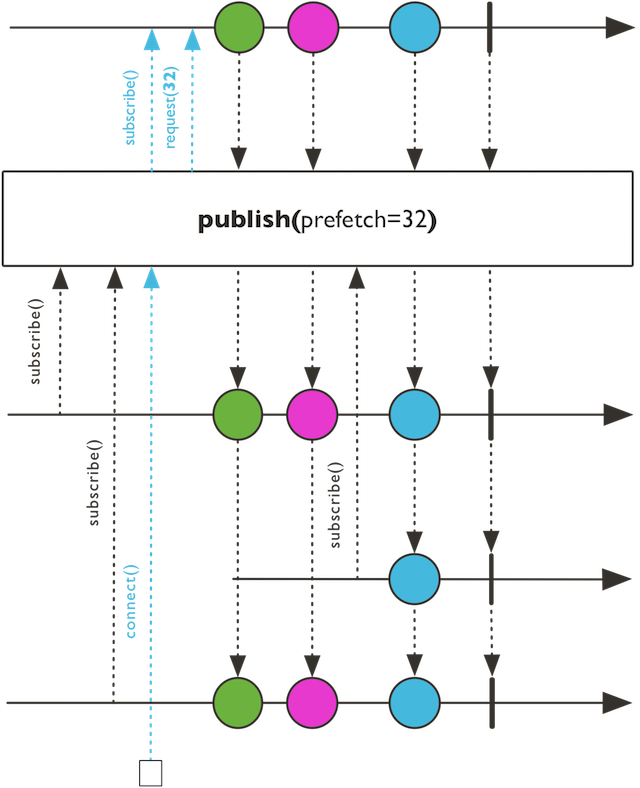

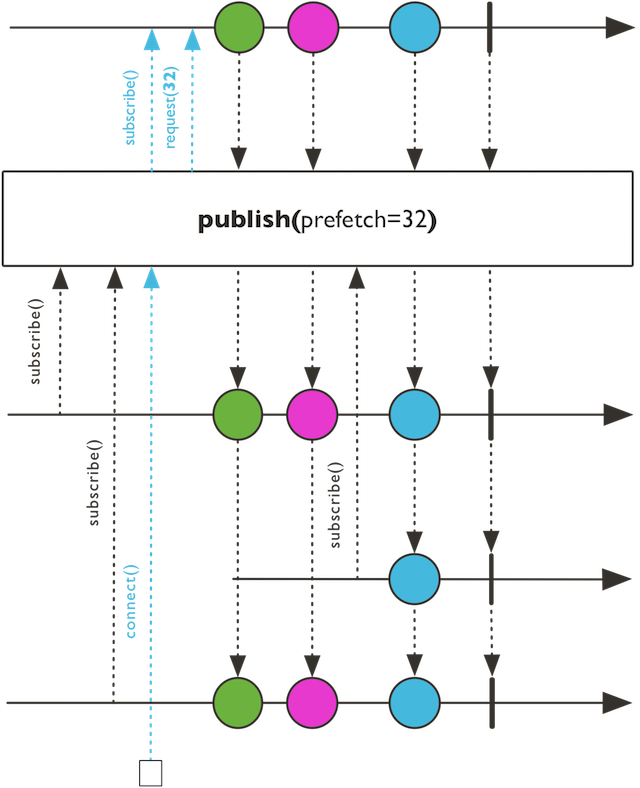

Prepare a ConnectableFlux which shares this Flux sequence and dispatches values to subscribers in a backpressure-aware manner. This will effectively turn any type of sequence into a hot sequence.

Backpressure will be coordinated on Subscription.request and if any Subscriber is missing demand (requested = 0), multicast will pause pushing/pulling.

bounded requested demand

a new ConnectableFlux

Prepare a ConnectableFlux which shares this Flux sequence and dispatches values to subscribers in a backpressure-aware manner. Prefetch will default to reactor.util.concurrent.Queues.SMALL_BUFFER_SIZE. This will effectively turn any type of sequence into a hot sequence.

Backpressure will be coordinated on Subscription.request and if any Subscriber is missing demand (requested = 0), multicast will pause pushing/pulling.

a new ConnectableFlux

Prepare a Mono which shares this Flux sequence and dispatches the first observed item to subscribers in a backpressure-aware manner.

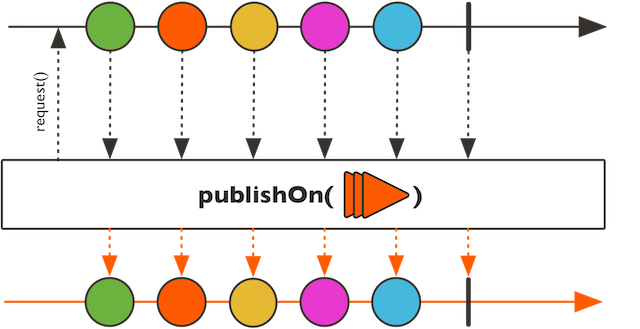

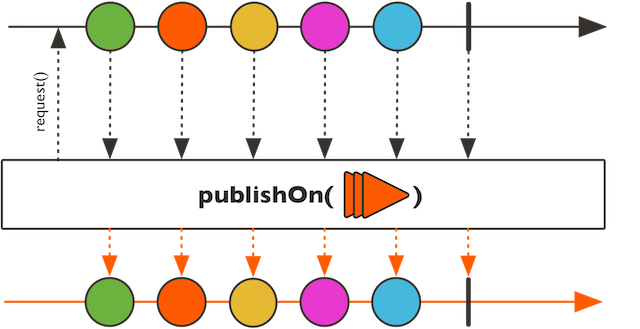

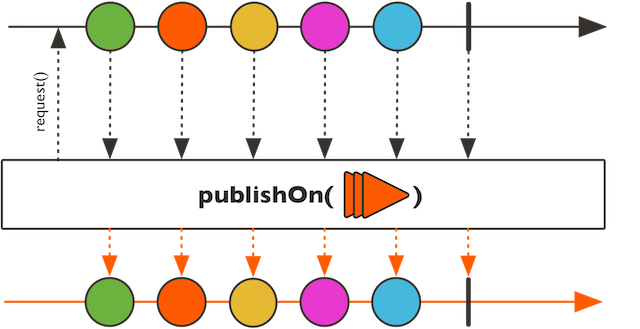

Run onNext, onComplete and onError on a supplied Scheduler reactor.core.scheduler.Scheduler.Worker.

Typically used for fast publisher, slow consumer(s) scenarios.

flux.publishOn(Schedulers.single()).subscribe()

a checked reactor.core.scheduler.Scheduler.Worker factory

should the buffer be consumed before forwarding any error

the asynchronous boundary capacity

a Flux producing asynchronously

Run onNext, onComplete and onError on a supplied Scheduler reactor.core.scheduler.Scheduler.Worker.

Typically used for fast publisher, slow consumer(s) scenarios.

flux.publishOn(Schedulers.single()).subscribe()

a checked reactor.core.scheduler.Scheduler.Worker factory

the asynchronous boundary capacity

a Flux producing asynchronously

Run onNext, onComplete and onError on a supplied Scheduler reactor.core.scheduler.Scheduler.Worker.

Typically used for fast publisher, slow consumer(s) scenarios.

flux.publishOn(Schedulers.single()).subscribe()

a checked reactor.core.scheduler.Scheduler.Worker factory

a Flux producing asynchronously

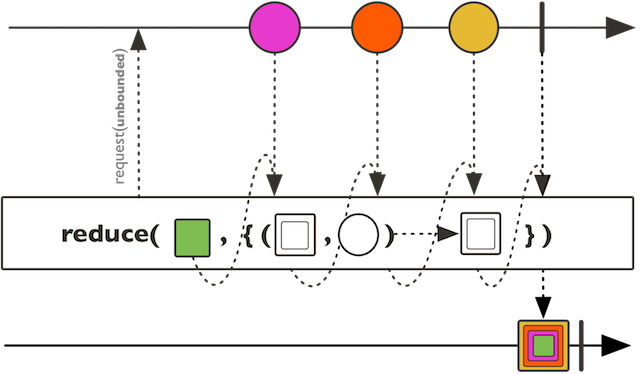

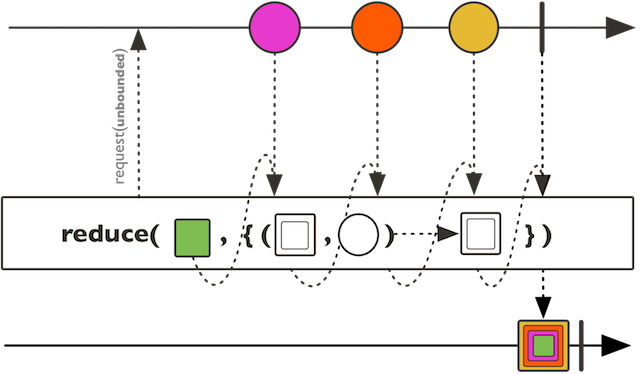

Accumulate the values from this Flux sequence into an object matching an initial value type.

The accumulator receives the previous result (or the initial value on the first call) and the current item.

the type of the initial and reduced object

the initial left argument to pass to the reducing BiFunction

the reducing BiFunction

a reduced Flux
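The argument pairs passed to the accumulator can be traced with a fold over an ordinary collection. This is a sketch using hypothetical names (`ReduceSketch`, `reduceTraced`); it records each call so the role of the initial value and of the previous result is visible.

```scala
object ReduceSketch {
  // Fold the source from the initial value, recording every
  // (previous result, current item) pair given to the accumulator.
  def reduceTraced[A, T](source: Seq[T], initial: A)(accumulator: (A, T) => A): (A, List[(A, T)]) = {
    val calls = List.newBuilder[(A, T)]
    val result = source.foldLeft(initial) { (previous, item) =>
      calls += ((previous, item))
      accumulator(previous, item)
    }
    (result, calls.result())
  }
}
```

Summing 1, 2, 3 from an initial value of 10 calls the accumulator with (10, 1), then (11, 2), then (13, 3), and yields 16.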

Aggregate the values from this Flux sequence into an object of the same type as the emitted items.

Accumulate the values from this Flux sequence into an object matching an initial value type.

The accumulator receives the previous result (or the initial value on the first call) and the current item.

the type of the initial and reduced object

the initial left argument supplied on subscription to the reducing BiFunction

the reducing BiFunction

a reduced Flux

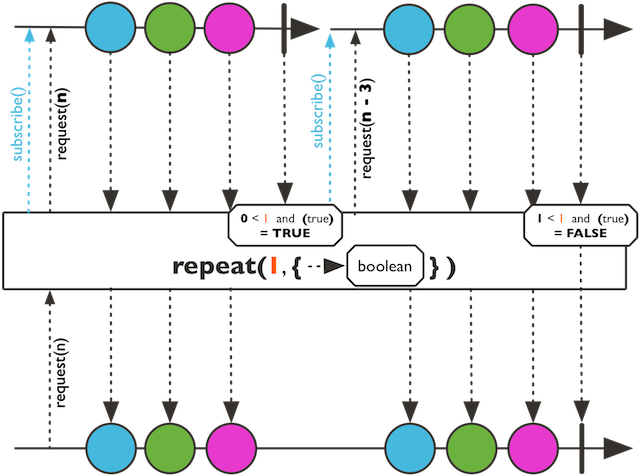

Repeatedly subscribe to the source if the predicate returns true after completion of the previous subscription. The given maximum number of repeats limits how many times the source is re-subscribed.

the number of times to re-subscribe on complete

the boolean to evaluate on onComplete

an eventually repeated Flux on onComplete up to number of repeat specified OR matching predicate

Repeatedly subscribe to the source if the predicate returns true after completion of the previous subscription.

Repeatedly subscribe to the source upon completion of the previous subscription.

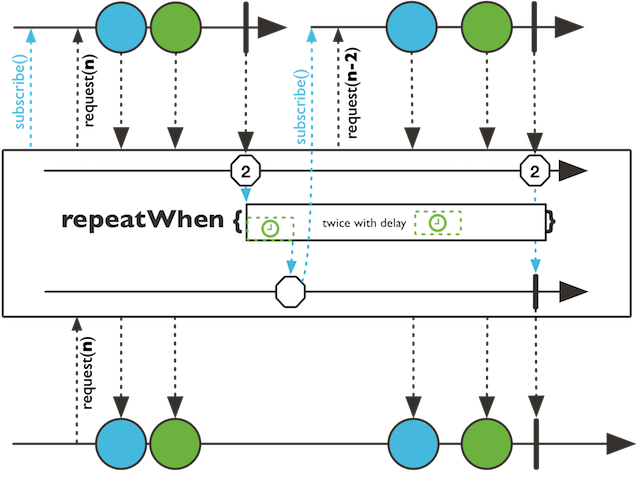

Repeatedly subscribe to this Flux when a companion sequence signals a number of emitted elements in response to the flux completion signal.

If the companion sequence signals when this Flux is active, the repeat attempt is suppressed and any terminal signal will terminate this Flux with the same signal immediately.

the Function1 providing a Flux signalling an exclusive number of emitted elements on onComplete and returning a Publisher companion.

an eventually repeated Flux on onComplete when the companion Publisher produces an onNext signal

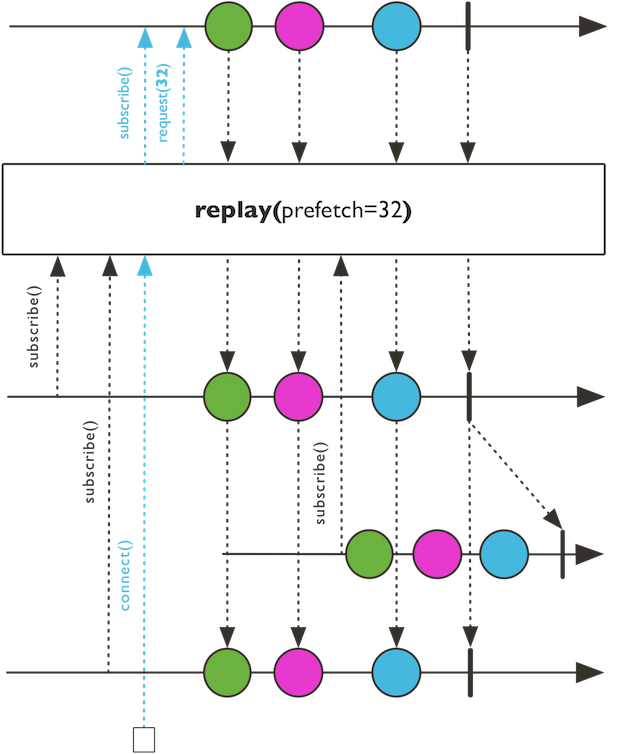

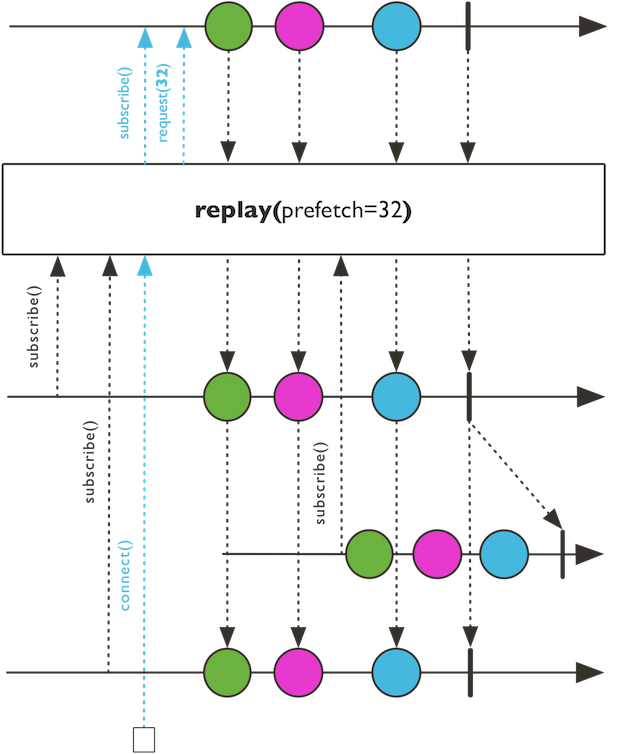

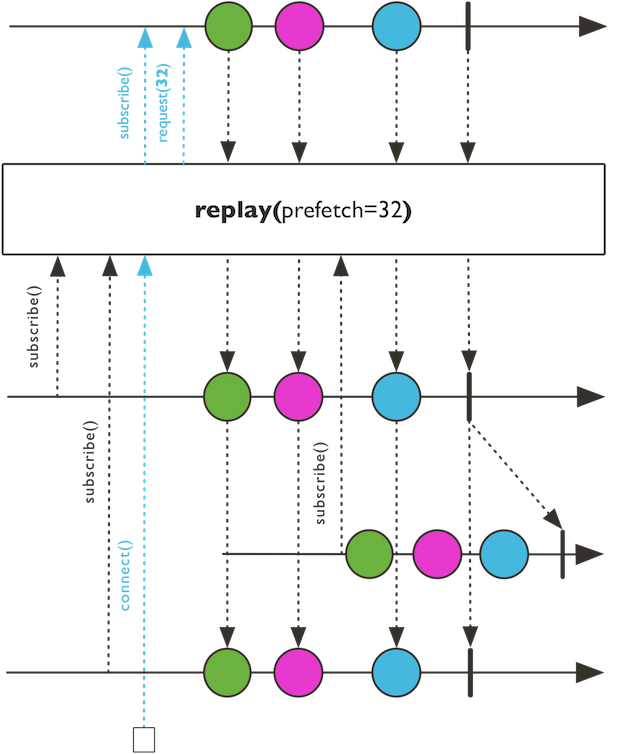

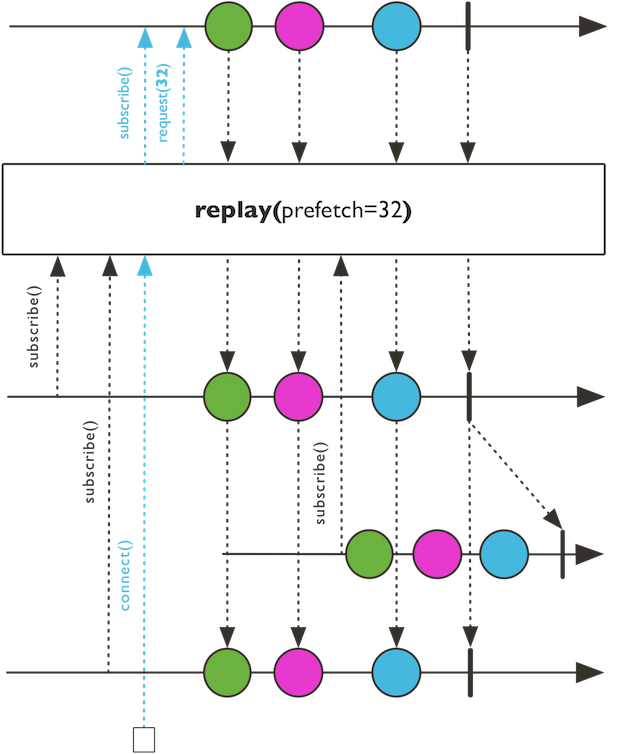

Turn this Flux into a connectable hot source and cache the last emitted signals for later Subscribers. Retains up to the given history size of onNext signals, each subject to the given per-item ttl. Completion and Error will also be replayed.

number of events retained in history excluding complete and error

Per-item timeout duration

a replaying ConnectableFlux

Turn this Flux into a connectable hot source and cache the last emitted signals for later Subscribers. Retains each onNext signal until the given per-item expiry timeout elapses. Completion and Error will also be replayed.

Per-item timeout duration

a replaying ConnectableFlux

Turn this Flux into a connectable hot source and cache the last emitted signals for later Subscribers. Retains up to the given history size of onNext signals. Completion and Error will also be replayed.

number of events retained in history excluding complete and error

a replaying ConnectableFlux

Turn this Flux into a hot source and cache the last emitted signals for later Subscribers. Retains an unbounded number of onNext signals. Completion and Error will also be replayed.

a replaying ConnectableFlux

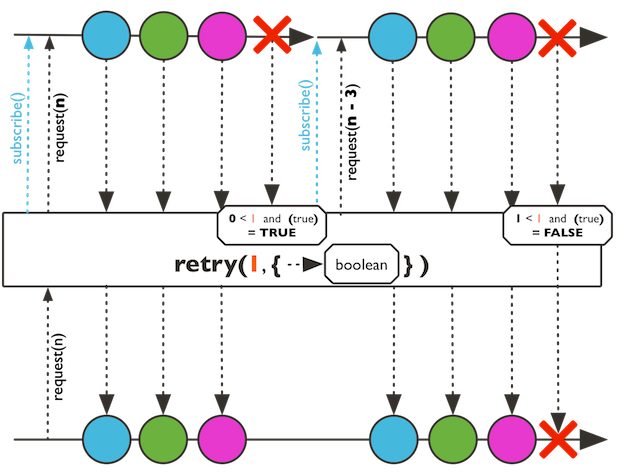

Re-subscribes to this Flux sequence up to the specified number of retries if it signals any error and the given Predicate matches; otherwise pushes the error downstream.

the number of times to tolerate an error

the predicate to evaluate if retry should occur based on a given error signal

a re-subscribing Flux on onError up to the specified number of retries and if the predicate matches.
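The bounded, predicate-guarded retry rule can be sketched without Reactor by re-running a failing computation. This is an illustrative model only: `RetrySketch`, `retryModel`, `numRetries` and `retryMatcher` are hypothetical names, and a re-executed function stands in for a re-subscription.

```scala
import scala.annotation.tailrec
import scala.util.{Failure, Try}

// Plain Scala model of "retry up to N times while the predicate matches"; not Reactor code.
object RetrySketch {
  // Re-run `attempt` while it fails, the predicate accepts the error,
  // and retries remain. Any other outcome is returned as-is.
  @tailrec
  def retryModel[T](numRetries: Long, retryMatcher: Throwable => Boolean)(attempt: () => T): Try[T] =
    Try(attempt()) match {
      case Failure(e) if numRetries > 0 && retryMatcher(e) =>
        retryModel(numRetries - 1, retryMatcher)(attempt)
      case other => other
    }

  def main(args: Array[String]): Unit = {
    var calls = 0
    // Fails twice with a matching error, then succeeds on the third call.
    val result = retryModel(3, _.isInstanceOf[IllegalStateException]) { () =>
      calls += 1
      if (calls < 3) throw new IllegalStateException("transient") else "ok"
    }
    println(s"$result after $calls calls")
  }
}
```

An error rejected by the predicate, or one raised after the retries are exhausted, is returned as the failure, which corresponds to pushing the error downstream.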

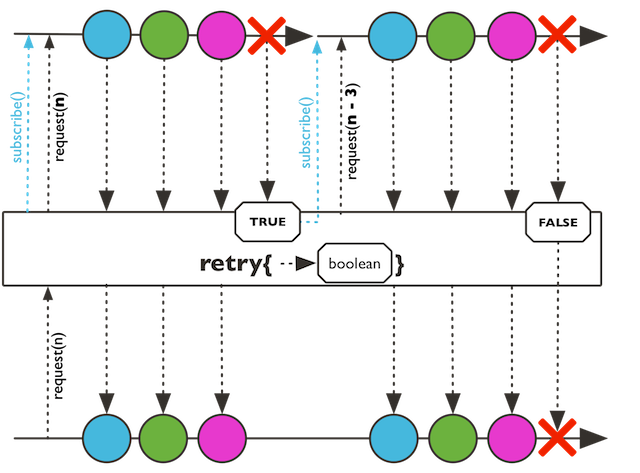

Re-subscribes to this Flux sequence if it signals any error and the given Predicate matches; otherwise pushes the error downstream.

the predicate to evaluate if retry should occur based on a given error signal

a re-subscribing Flux on onError if the predicate matches.

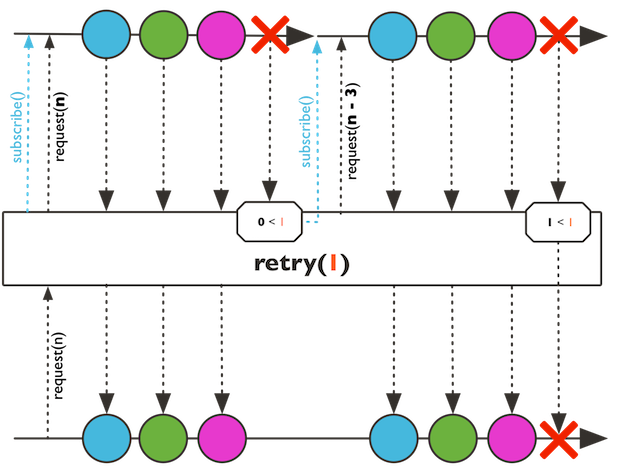

Re-subscribes to this Flux sequence if it signals any error, either indefinitely or a fixed number of times.

A times value of Long.MAX_VALUE is treated as infinite retry.

the number of times to tolerate an error

a re-subscribing Flux on onError up to the specified number of retries.

Re-subscribes to this Flux sequence indefinitely if it signals any error.

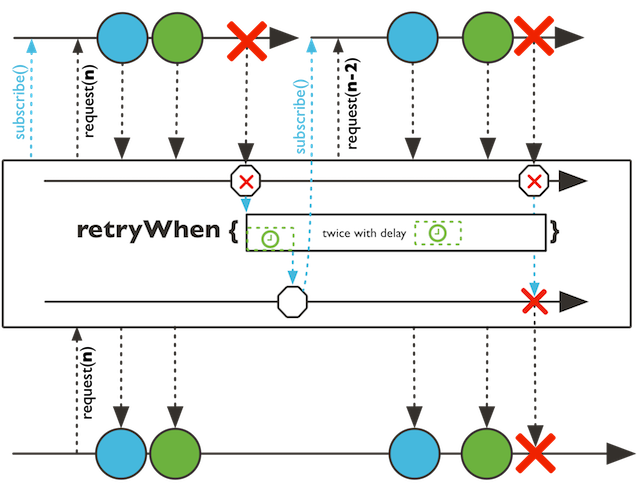

Retries this Flux when a companion sequence signals an item in response to this Flux error signal.

If the companion sequence signals when the Flux is active, the retry attempt is suppressed and any terminal signal will terminate the Flux source with the same signal immediately.

the Function1 providing a Flux signalling any error from the source sequence and returning a Publisher companion.

a re-subscribing Flux on onError when the companion Publisher produces an onNext signal

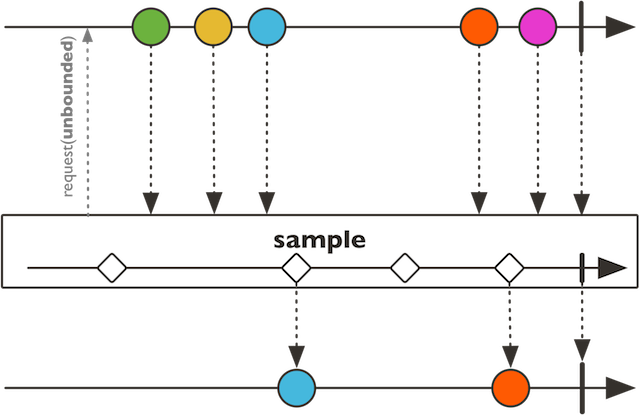

Sample this Flux and emit its latest value whenever the sampler Publisher signals a value.

Termination of either Publisher will result in termination for the Subscriber as well.

Both Publishers will run in unbounded mode because backpressure would interfere with the sampling precision.

the type of the sampler sequence

the sampler Publisher

a sampled Flux by last item observed when the sampler Publisher signals

Emit latest value for every given period of time.

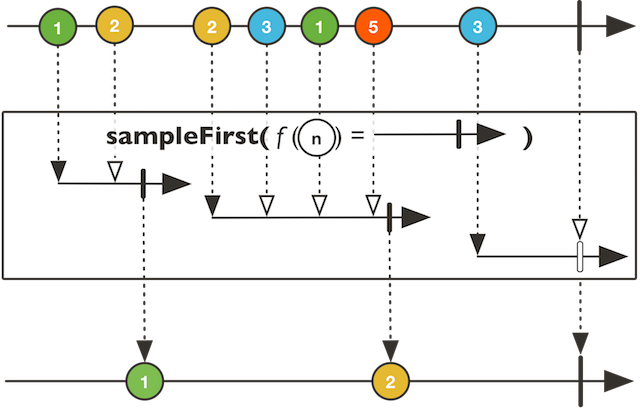

Take a value from this Flux then use the duration provided by a generated Publisher to skip other values until that sampler Publisher signals.

the companion reified type

select a Publisher companion to signal onNext or onComplete to stop excluding other values from this sequence

a sampled Flux by last item observed when the sampler signals

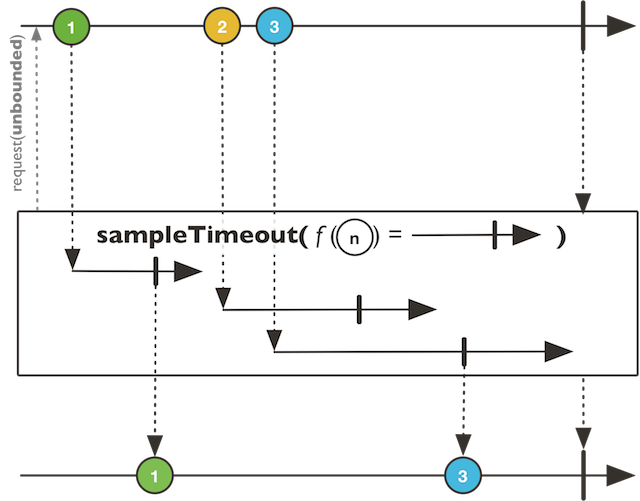

Take a value from this Flux then use the duration provided to skip other values.

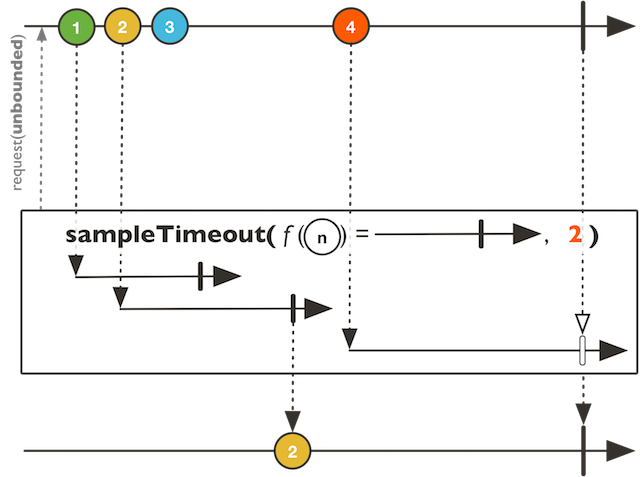

Emit the last value from this Flux only if there were no newer values emitted during the time window provided by a publisher for that particular last value.

The provided maxConcurrency will keep a bounded maximum of concurrent timeouts and drop any new items until at least one timeout terminates.

the throttling type

select a Publisher companion to signal onNext or onComplete to stop checking other values from this sequence and emit the selecting item

the maximum number of concurrent timeouts

a sampled Flux by last single item observed before a companion Publisher emits

Emit the last value from this Flux only if there were no new values emitted during the time window provided by a publisher for that particular last value.

the companion reified type

select a Publisher companion to signal onNext or onComplete to stop checking other values from this sequence and emit the selecting item

a sampled Flux by last single item observed before a companion Publisher emits

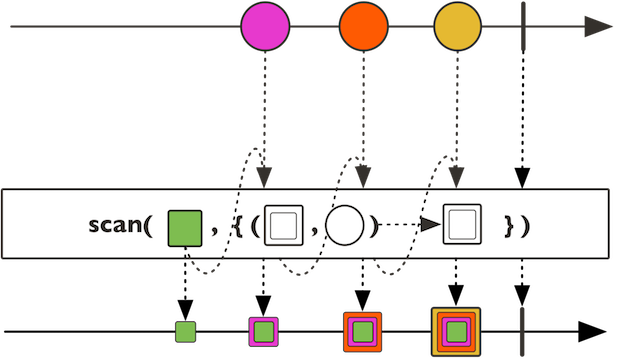

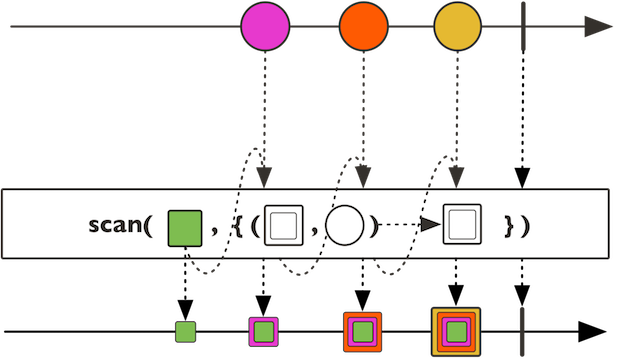

Aggregate the values of this Flux with the help of an accumulator BiFunction and emit the intermediate results.

The accumulation works as follows:

result[0] = initialValue;

result[1] = accumulator(result[0], source[0])

result[2] = accumulator(result[1], source[1])

result[3] = accumulator(result[2], source[2])

...

the accumulated type

the initial argument to pass to the reduce function

the accumulating BiFunction

an accumulating Flux starting with initial state
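The recurrence above matches what `scanLeft` does on a standard Scala collection, so it can be checked without Reactor. This is a sketch of the semantics only: `ScanWithInitialSketch` and `scanModel` are hypothetical names, and a `Seq` stands in for the emitted values.

```scala
// Plain-collections model of scan with an initial value; not Reactor code.
object ScanWithInitialSketch {
  // result[0] = initial, result[n + 1] = accumulator(result[n], source[n])
  def scanModel[A, T](source: Seq[T], initial: A)(accumulator: (A, T) => A): Seq[A] =
    source.scanLeft(initial)(accumulator)

  def main(args: Array[String]): Unit = {
    // Running sum: the initial value is the first result, followed by each intermediate total.
    println(scanModel(Seq(1, 2, 3), 0)(_ + _))
  }
}
```

The result always has one more element than the source, because the initial value is emitted first.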

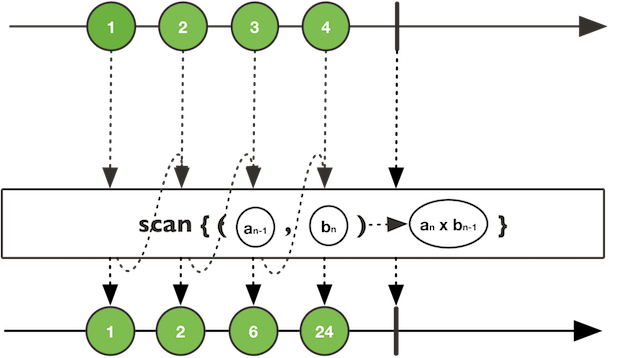

Accumulate the values of this Flux with an accumulator BiFunction and return the intermediate results of this function.

Unlike the scan variant that takes an initial value and a BiFunction, this operator doesn't take an initial value but treats the first Flux value as the initial value.

The accumulation works as follows:

result[0] = source[0]

result[1] = accumulator(result[0], source[1])

result[2] = accumulator(result[1], source[2])

...

the accumulating BiFunction

an accumulating Flux
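A collections-based sketch of the seedless variant follows. It assumes that the first source value is used as the seed and is itself emitted unchanged as the first result, which is an assumption to verify against the library version in use. `ScanSketch` and `scanModel` are hypothetical names, and no Reactor types are involved.

```scala
// Plain-collections model of scan without an initial value; not Reactor code.
object ScanSketch {
  // Assumption: the first element seeds the accumulation and is emitted as-is.
  def scanModel[T](source: Seq[T])(accumulator: (T, T) => T): Seq[T] =
    if (source.isEmpty) Seq.empty
    else source.tail.scanLeft(source.head)(accumulator)

  def main(args: Array[String]): Unit = {
    // Running maximum over the source values.
    println(scanModel(Seq(3, 1, 4, 1, 5))((a, b) => math.max(a, b)))
  }
}
```

Under that assumption the result has the same length as the source, and an empty source produces an empty result.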

Aggregate the values of this Flux with the help of an accumulator BiFunction and emit the intermediate results.

The accumulation works as follows:

result[0] = initialValue;

result[1] = accumulator(result[0], source[0])

result[2] = accumulator(result[1], source[1])

result[3] = accumulator(result[2], source[2])

...

the accumulated type

the initial supplier to init the first value to pass to the reduce function

the accumulating BiFunction

an accumulating Flux starting with initial state

Returns a new Flux that multicasts (shares) the original Flux. As long as there is at least one Subscriber, this Flux will be subscribed and emitting data. When all subscribers have cancelled, it will cancel the source Flux.

This is an alias for Flux.publish followed by ConnectableFlux.refCount.

a Flux that upon first subscribe causes the source Flux to subscribe once only; late subscribers might therefore miss items.

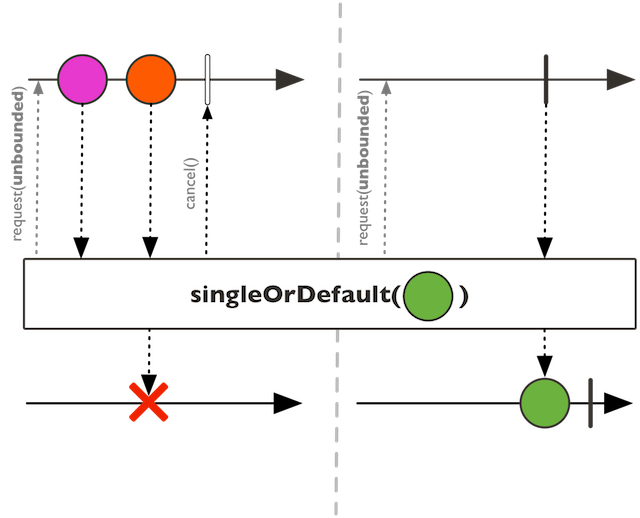

Expect and emit a single item from this Flux source or signal NoSuchElementException (or a default value) for empty source, IndexOutOfBoundsException for a multi-item source.

a single fallback item if this Flux is empty

a Mono with the eventual single item or a supplied default value
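The three outcomes described for a single-item expectation (exactly one item, empty, more than one item) can be modelled on a plain `Seq`. This is a sketch of the rule only: `SingleSketch` and `singleModel` are hypothetical names, and an `Either` stands in for the value or error signal.

```scala
// Plain-collections model of the single-item rule; not Reactor code.
object SingleSketch {
  def singleModel[T](source: Seq[T], default: Option[T] = None): Either[Throwable, T] =
    source match {
      // Exactly one item: emit it.
      case Seq(only) => Right(only)
      // Empty source: fall back to the default, else NoSuchElementException.
      case Seq() => default.toRight(new NoSuchElementException("empty source"))
      // More than one item: IndexOutOfBoundsException.
      case _ => Left(new IndexOutOfBoundsException("more than one item"))
    }

  def main(args: Array[String]): Unit = {
    println(singleModel(Seq(7)))
    println(singleModel(Seq.empty[Int], Some(0)))
    println(singleModel(Seq(1, 2)))
  }
}
```

Note that the default value only applies to the empty case; a multi-item source is an error regardless.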

Expect and emit a single item from this Flux source or signal NoSuchElementException (or a default generated value) for empty source, IndexOutOfBoundsException for a multi-item source.

Expect and emit zero or one item from this Flux source, or signal IndexOutOfBoundsException for a multi-item source.

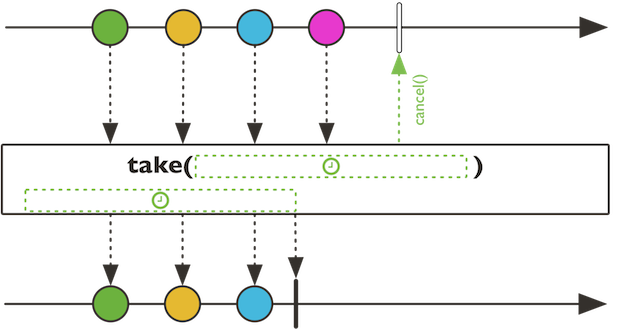

Skip elements from this Flux for the given time period.

Skip the specified number of elements from the start of this Flux.

Skip the last specified number of elements from this Flux.

Skips values from this Flux until a Predicate returns true for the value.

Skip values from this Flux until a specified Publisher signals an onNext or onComplete.

Skips values from this Flux while a Predicate returns true for the value.
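The count-based and predicate-based skip variants have close analogues on standard Scala collections, which makes their differences easy to compare. This is a sketch of the semantics only: `SkipSketch` and its member names are hypothetical, and it assumes the value that first satisfies the "until" predicate is itself kept.

```scala
// Plain-collections model of the skip variants; not Reactor code.
object SkipSketch {
  val source: Seq[Int] = Seq(1, 2, 3, 4, 5)

  // Skip a number of elements from the start.
  val skipFirstTwo: Seq[Int] = source.drop(2)
  // Skip a number of elements from the end.
  val skipLastTwo: Seq[Int] = source.dropRight(2)
  // Skip until the predicate returns true (assumed: the matching value is kept).
  val skipUntilAtLeastThree: Seq[Int] = source.dropWhile(n => !(n >= 3))
  // Skip while the predicate returns true.
  val skipWhileOdd: Seq[Int] = source.dropWhile(_ % 2 == 1)

  def main(args: Array[String]): Unit =
    Seq(skipFirstTwo, skipLastTwo, skipUntilAtLeastThree, skipWhileOdd).foreach(println)
}
```

Skipping from the end requires holding back that many elements, since the end of the sequence is only known on completion.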

Returns a Flux that sorts the events emitted by the source Flux according to the given Ordering function.

Note that calling sorted with long, non-terminating or infinite sources might cause OutOfMemoryError.

a function that compares two items emitted by this Flux that indicates their sort order

a sorting Flux
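Sorting with an explicit Ordering can be illustrated on a plain collection. This is a sketch of the semantics only: `SortSketch` and `byLength` are hypothetical names. Every element has to be collected before the first sorted one can be produced, which is why an unbounded source risks OutOfMemoryError.

```scala
// Plain-collections model of sorting with an explicit Ordering; not Reactor code.
object SortSketch {
  // Compare strings by length rather than alphabetically.
  val byLength: Ordering[String] = Ordering.by[String, Int](_.length)

  def sortModel[T](source: Seq[T])(ordering: Ordering[T]): Seq[T] =
    source.sorted(ordering)

  def main(args: Array[String]): Unit =
    println(sortModel(Seq("ccc", "a", "bb"))(byLength))
}
```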

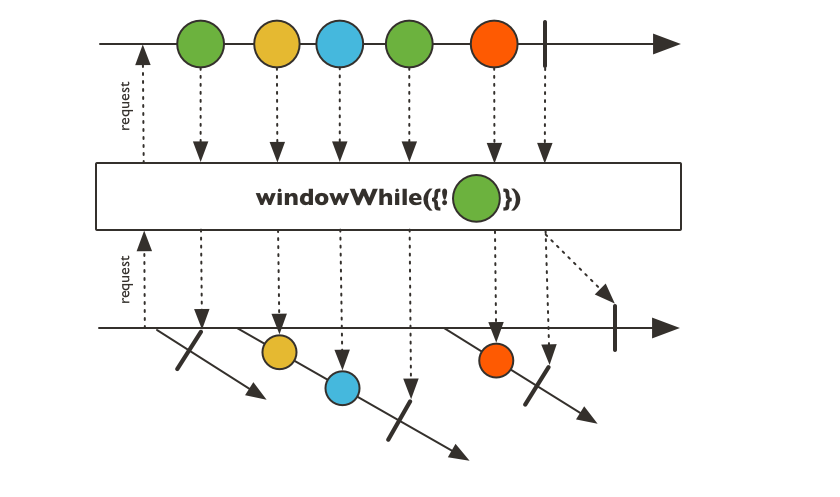

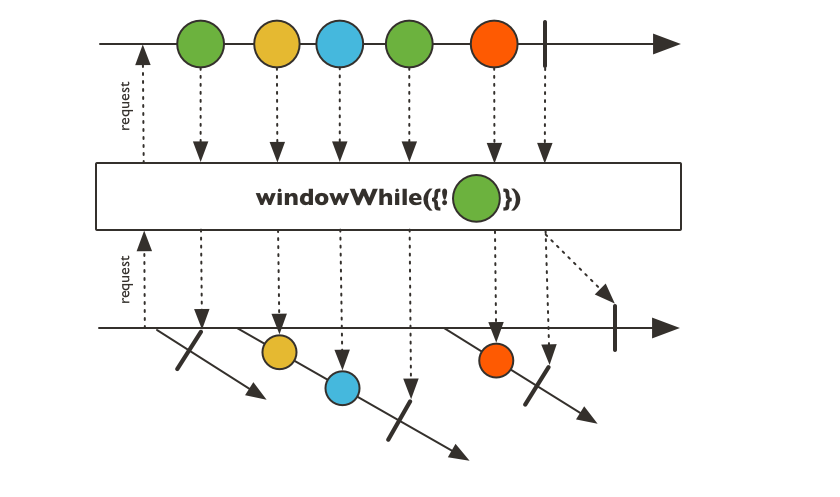

Returns a Flux that sorts the events emitted by the source Flux. Each item emitted by the Flux must implement Comparable with respect to all other items in the sequence.

Note that calling sort with long, non-terminating or infinite sources might cause OutOfMemoryError. Use sequence splitting like Flux.windowWhen to sort batches in that case.

a sorting Flux

Prepend the given Publisher sequence before this Flux sequence.

Prepend the given values before this Flux sequence.

Prepend the given Iterable before this Flux sequence.

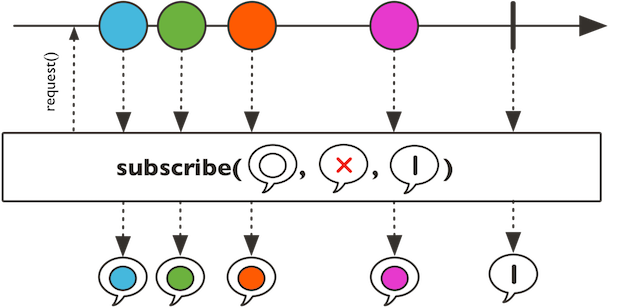

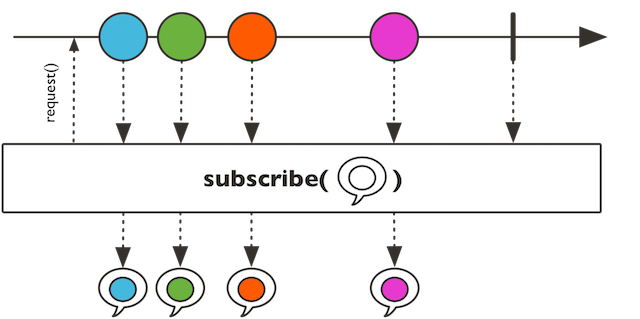

Subscribe consumers to this Flux that will consume the whole sequence. The provided subscriptionConsumer requests the adequate amount of data; if no such consumer is provided, unbounded demand (Long.MAX_VALUE) is requested.

For a passive version that observes and forwards incoming data, see Flux.doOnNext, Flux.doOnError, Flux.doOnComplete and Flux.doOnSubscribe.

For a version that gives you more control over backpressure and the request, see Flux.subscribe with a reactor.core.publisher.BaseSubscriber.

the consumer to invoke on each value

the consumer to invoke on error signal

the consumer to invoke on complete signal

the consumer to invoke on subscribe signal, to be used for the initial request, or null for max request

a new Disposable to dispose the Subscription

Subscribe consumers to this Flux that will consume the whole sequence. Unbounded demand (Long.MAX_VALUE) is requested.

For a passive version that observes and forwards incoming data, see Flux.doOnNext, Flux.doOnError and Flux.doOnComplete.

For a version that gives you more control over backpressure and the request, see Flux.subscribe with a reactor.core.publisher.BaseSubscriber.

the consumer to invoke on each value

the consumer to invoke on error signal

the consumer to invoke on complete signal

a new Disposable to dispose the Subscription

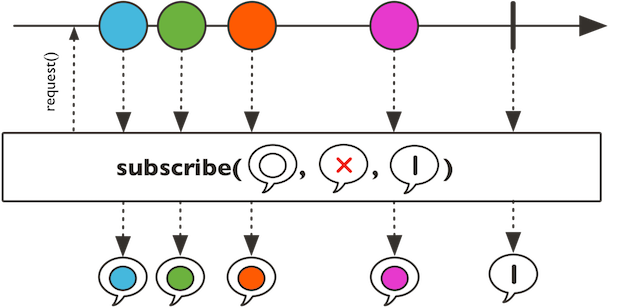

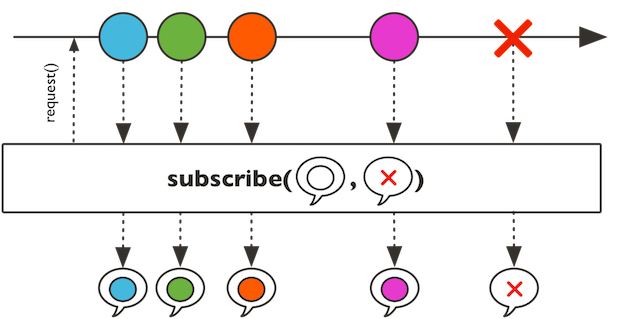

Subscribe consumers to this Flux that will consume the whole sequence. Unbounded demand (Long.MAX_VALUE) is requested.

For a passive version that observes and forwards incoming data, see Flux.doOnNext and Flux.doOnError.

For a version that gives you more control over backpressure and the request, see Flux.subscribe with a reactor.core.publisher.BaseSubscriber.

the consumer to invoke on each next signal

the consumer to invoke on error signal

a new Disposable to dispose the Subscription

Subscribe a consumer to this Flux that will consume the whole sequence. Unbounded demand is requested.

For a passive version that observes and forwards incoming data, see Flux.doOnNext.

For a version that gives you more control over backpressure and the request, see Flux.subscribe with a reactor.core.publisher.BaseSubscriber.

the consumer to invoke on each value

a new Disposable to dispose the Subscription

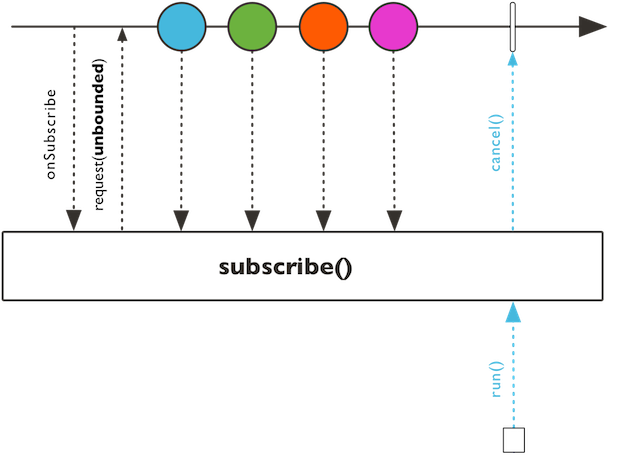

Start the chain and request unbounded demand.

a Disposable task to execute to dispose and cancel the underlying Subscription