FluxProcessor

Alias for SFlux.concatWith

Emit a single boolean true if all values of this sequence match the given predicate.

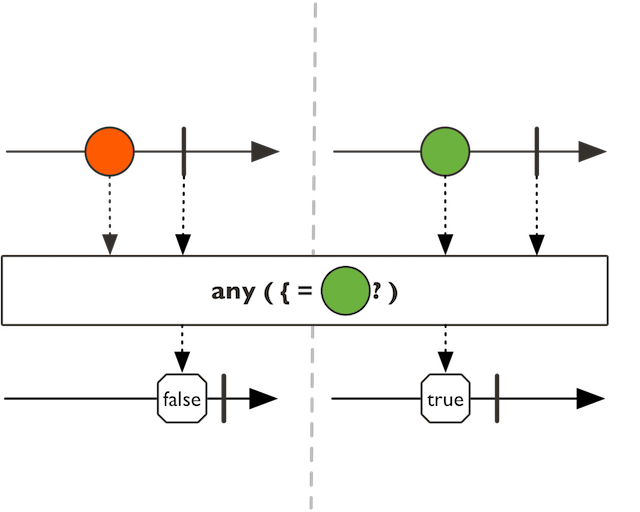

Emit a single boolean true if any of the values of this SFlux sequence match the predicate.

The implementation uses short-circuit logic and completes with true if the predicate matches a value.

predicate tested upon values

a new SFlux with true if any value satisfies a predicate and false otherwise
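
The short-circuit behaviour described above can be illustrated with plain Scala collections. This is only a sketch of the semantics, not the operator's implementation: `AnySketch` and `anyCounting` are hypothetical names, and a `List` stands in for the reactive sequence.

```scala
object AnySketch {
  // Returns the `any` result together with the number of values the
  // predicate was actually tested against (hypothetical helper).
  def anyCounting[T](source: List[T])(predicate: T => Boolean): (Boolean, Int) = {
    var tested = 0
    // `exists` stops at the first match, mirroring the short-circuit logic.
    val matched = source.exists { value =>
      tested += 1
      predicate(value)
    }
    (matched, tested)
  }

  def main(args: Array[String]): Unit = {
    println(anyCounting(List(1, 2, 3, 4, 5))(_ > 2)) // (true,3)
    println(anyCounting(List(1, 2))(_ > 2))          // (false,2)
  }
}
```

In the first call the values 4 and 5 are never tested, because the result is already known after 3.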

Immediately apply the given transformation to this SFlux in order to generate a target type.

flux.as(Mono::from).subscribe()

the returned type

the Function1 to immediately map this SFlux into a target type instance.

an instance of P

SFlux.compose for a bounded conversion to Publisher

Blocks until the upstream signals its first value or completes.

the Some value or None

Blocks until the upstream completes and returns the last emitted value.

max duration timeout to wait for.

the last value or None

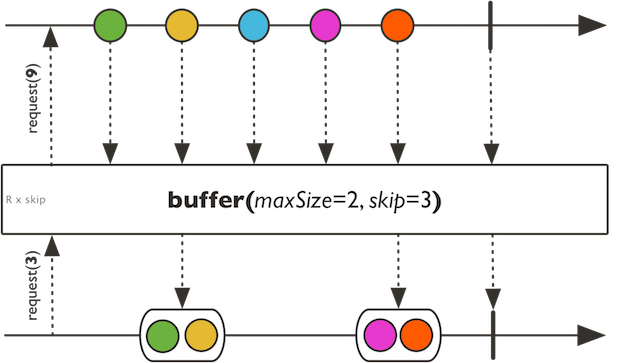

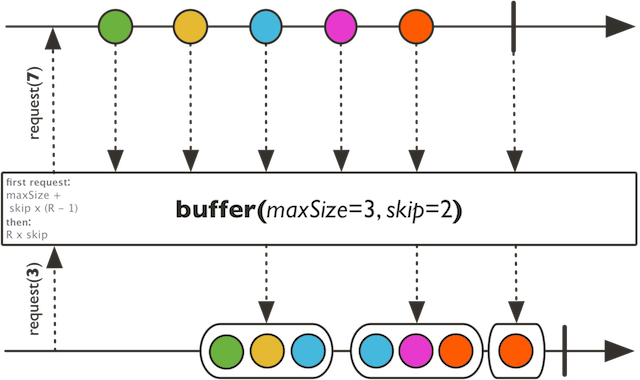

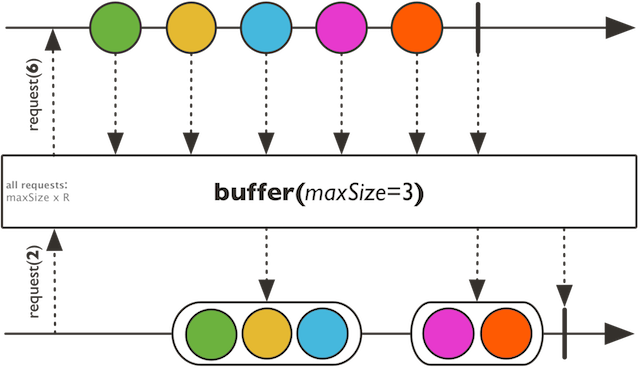

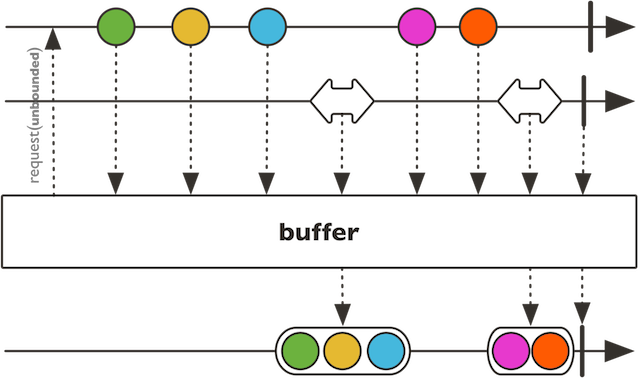

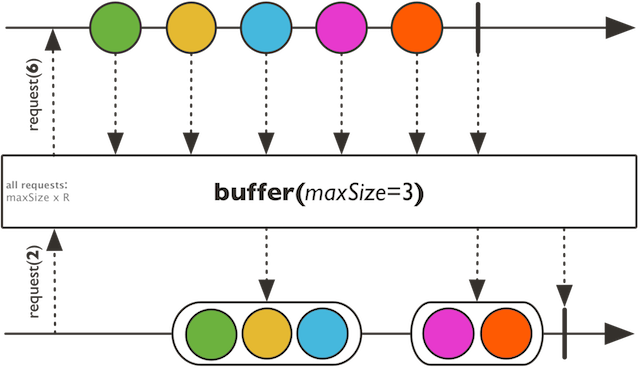

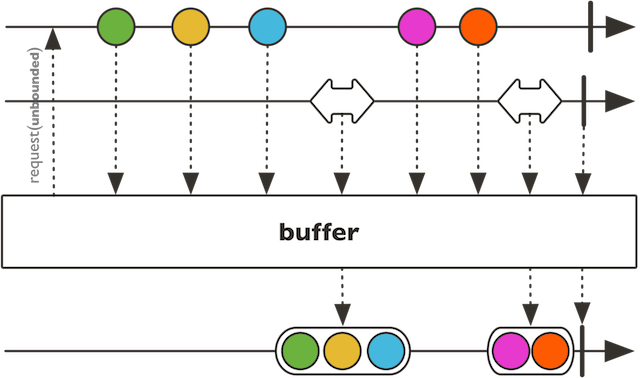

Collect incoming values into multiple Seq that will be pushed into the returned SFlux when the given max size is reached or onComplete is received. A new container Seq will be created every given skip count.

When Skip > Max Size : dropping buffers

When Skip < Max Size : overlapping buffers

When Skip == Max Size : exact buffers

the supplied Seq type

the max collected size

the collection to use for each data segment

the number of items to skip before creating a new bucket

a microbatched SFlux of possibly overlapped or gapped Seq
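
The three skip/max-size cases can be modelled on a finite collection. The following is a rough sketch under the assumption that a new bucket opens every `skip` elements and holds at most `maxSize` of them; `BufferSkipSketch` and its `buffer` method are hypothetical and do not reproduce the operator's streaming or backpressure behaviour.

```scala
object BufferSkipSketch {
  // Opens a bucket at every `skip`-th index and fills it with up to
  // `maxSize` elements; trailing partial buckets model the onComplete flush.
  def buffer[T](source: Seq[T], maxSize: Int, skip: Int): Seq[Seq[T]] = {
    require(maxSize > 0 && skip > 0, "maxSize and skip must be positive")
    (0 until source.length by skip).map(start => source.slice(start, start + maxSize))
  }

  def main(args: Array[String]): Unit = {
    val source = List(1, 2, 3, 4, 5, 6)
    println(buffer(source, maxSize = 2, skip = 3)) // dropping: 3 and 6 are lost
    println(buffer(source, maxSize = 3, skip = 2)) // overlapping: 3 and 5 appear twice
    println(buffer(source, maxSize = 2, skip = 2)) // exact: every element appears once
  }
}
```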

Collect incoming values into multiple Seq delimited by the given Publisher signals.

the supplied Seq type

the other Publisher to subscribe to for emitting and recycling receiving bucket

the collection to use for each data segment

a microbatched SFlux of Seq delimited by a Publisher

Return the processor buffer capacity if any or Int.MaxValue

processor buffer capacity if any or Int.MaxValue

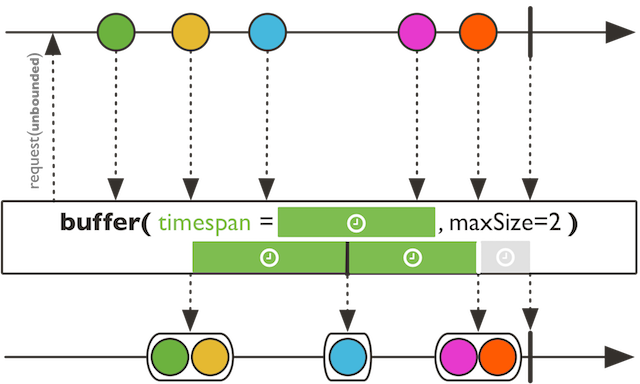

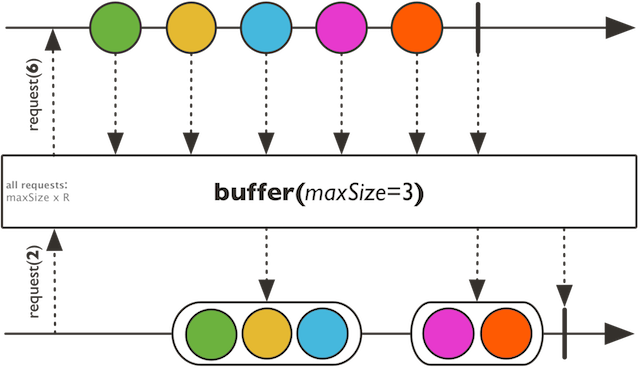

Collect incoming values into a Seq that will be pushed into the returned SFlux every timespan OR maxSize items.

the supplied Seq type

the max collected size

the timeout to use to release a buffered list

the collection to use for each data segment

a microbatched SFlux of Seq delimited by given size or a given period timeout

Collect incoming values into multiple Seq that will be pushed into the returned SFlux each time the given predicate returns true. Note that the buffer into which the element that triggers the predicate to return true (and thus closes a buffer) is included depends on the cutBefore parameter: set it to true to include the boundary element in the newly opened buffer, false to include it in the closed buffer (as in SFlux.bufferUntil).

On completion, if the latest buffer is non-empty and has not been closed it is emitted. However, such a "partial" buffer isn't emitted in case of onError termination.

a predicate that triggers the next buffer when it becomes true.

set to true to include the triggering element in the new buffer rather than the old.

a microbatched SFlux of Seq
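
The effect of cutBefore can be sketched with a fold over a plain collection. This is an illustrative model only: `BufferUntilSketch` is a hypothetical name, and skipping an empty buffer when the very first element triggers the predicate is an assumption of the sketch.

```scala
object BufferUntilSketch {
  def bufferUntil[T](source: Seq[T], cutBefore: Boolean)(predicate: T => Boolean): Seq[Seq[T]] = {
    val (closed, open) = source.foldLeft((Vector.empty[Vector[T]], Vector.empty[T])) {
      case ((done, current), elem) =>
        if (!predicate(elem)) (done, current :+ elem)
        // cutBefore = true: the boundary element starts the new buffer.
        else if (cutBefore) (if (current.isEmpty) done else done :+ current, Vector(elem))
        // cutBefore = false: the boundary element ends the closed buffer.
        else (done :+ (current :+ elem), Vector.empty[T])
    }
    // On completion a non-empty, still-open buffer is emitted as well.
    if (open.nonEmpty) closed :+ open else closed
  }

  def main(args: Array[String]): Unit = {
    val source = List(1, 2, 3, 4, 5, 6, 7)
    println(bufferUntil(source, cutBefore = false)(_ % 3 == 0)) // Vector(Vector(1, 2, 3), Vector(4, 5, 6), Vector(7))
    println(bufferUntil(source, cutBefore = true)(_ % 3 == 0))  // Vector(Vector(1, 2), Vector(3, 4, 5), Vector(6, 7))
  }
}
```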

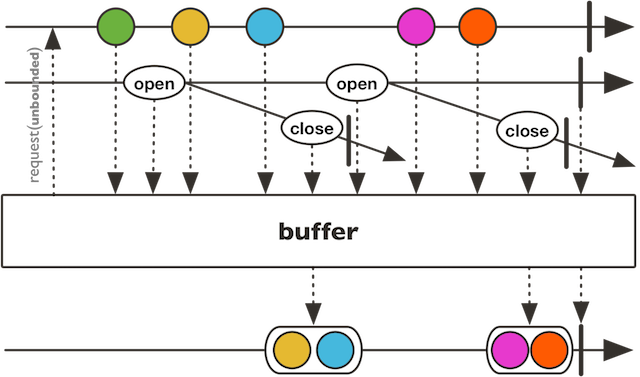

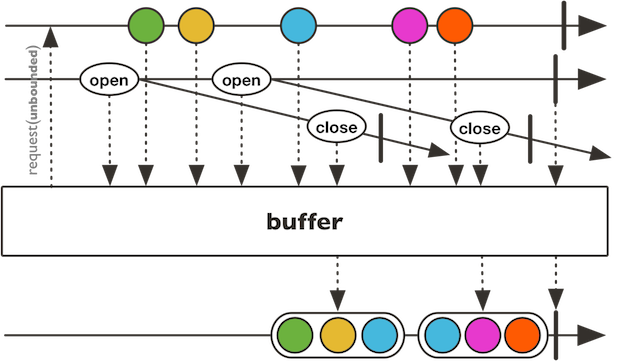

Collect incoming values into multiple Seq delimited by the given Publisher signals. Each Seq bucket will last until the mapped Publisher receiving the boundary signal emits, thus releasing the bucket to the returned SFlux.

When Open signal is strictly not overlapping Close signal : dropping buffers

When Open signal is strictly more frequent than Close signal : overlapping buffers

When Open signal is exactly coordinated with Close signal : exact buffers

the element type of the bucket-opening sequence

the element type of the bucket-closing sequence

the supplied Seq type

a Publisher to subscribe to for creating new receiving bucket signals.

a Publisher factory provided the opening signal and returning a Publisher to subscribe to for emitting relative bucket.

the collection to use for each data segment

a microbatched SFlux of Seq delimited by an opening Publisher and a relative closing Publisher

Collect incoming values into multiple Seq that will be pushed into the returned SFlux. Each buffer continues aggregating values while the given predicate returns true, and a new buffer is created as soon as the predicate returns false. Note that the element that triggers the predicate to return false (and thus closes a buffer) is NOT included in any emitted buffer.

On completion, if the latest buffer is non-empty and has not been closed it is emitted. However, such a "partial" buffer isn't emitted in case of onError termination.

a predicate that triggers the next buffer when it becomes false.

a microbatched SFlux of Seq
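
A small model of this behaviour on a plain collection may help. `BufferWhileSketch` is a hypothetical name, and the sketch assumes that a rejected element arriving while the current buffer is empty produces no empty buffer.

```scala
object BufferWhileSketch {
  def bufferWhile[T](source: Seq[T])(predicate: T => Boolean): Seq[Seq[T]] = {
    val (closed, open) = source.foldLeft((Vector.empty[Vector[T]], Vector.empty[T])) {
      case ((done, current), elem) =>
        if (predicate(elem)) (done, current :+ elem)
        // The rejected element closes the buffer and is itself discarded.
        else if (current.nonEmpty) (done :+ current, Vector.empty[T])
        else (done, current)
    }
    // On completion a non-empty, still-open buffer is emitted as well.
    if (open.nonEmpty) closed :+ open else closed
  }

  def main(args: Array[String]): Unit = {
    // Negative values act as separators and never show up in a buffer.
    println(bufferWhile(List(1, 2, -1, 3, 4, -1, -1, 5))(_ > 0)) // Vector(Vector(1, 2), Vector(3, 4), Vector(5))
  }
}
```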

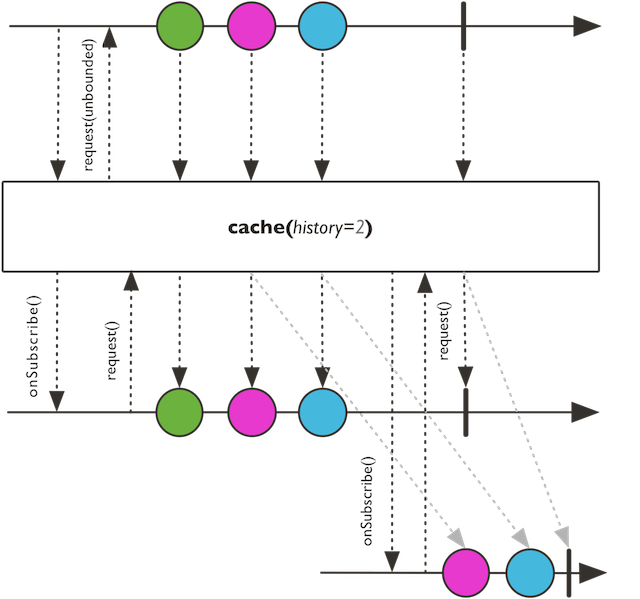

Turn this SFlux into a hot source and cache the last emitted signals for later Subscribers. It will retain up to the given history size, with a per-item expiry timeout.

number of events retained in history excluding complete and error

Time-to-live for each cached item.

a replaying SFlux

Prepare this SFlux so that subscribers will cancel from it on a specified Scheduler.

Cast the current SFlux produced type into a target produced type.

Activate assembly tracing or the lighter assembly marking depending on the forceStackTrace option.

By setting the forceStackTrace parameter to true, activate assembly tracing for this particular SFlux and give it a description that will be reflected in the assembly traceback in case of an error upstream of the checkpoint. Note that unlike SFlux.checkpoint(Option[String]), this will incur the cost of an exception stack trace creation. The description could for example be a meaningful name for the assembled flux or a wider correlation ID, since the stack trace will always provide enough information to locate where this SFlux was assembled.

By setting forceStackTrace to false, behaves like SFlux.checkpoint(Option[String]) and is subject to the same caveat in choosing the description.

It should be placed towards the end of the reactive chain, as errors triggered downstream of it cannot be observed and augmented with an assembly marker.

a description (must be unique enough if forceStackTrace is set to false).

false to make a light checkpoint without a stacktrace, true to use a stack trace.

the assembly marked SFlux.

Concatenate emissions of this SFlux with the provided Publisher (no interleave).

Provide a default unique value if this sequence is completed without any data

Delay each of this SFlux's elements (Subscriber#onNext signals) by a given duration. Signals are delayed and continue on a user-specified Scheduler, but empty sequences or immediate error signals are not delayed.

period to delay each Subscriber#onNext signal

a time-capable Scheduler instance to delay each signal on

a delayed SFlux

Return the number of active Subscriber or -1 if untracked.

the number of active Subscriber or -1 if untracked

Current error if any, default to None

Divide this sequence into dynamically created SFlux (or groups) for each unique key, as produced by the provided keyMapper. Source elements are also mapped to a different value using the valueMapper. Note that groupBy works best with a low cardinality of groups, so choose your keyMapper function accordingly.

The groups need to be drained and consumed downstream for groupBy to work correctly. Notably, when the criteria produce a large number of groups, it can lead to hanging if the groups are not suitably consumed downstream (e.g. due to a flatMap with a maxConcurrency parameter that is set too low).

the key type extracted from each value of this sequence

the value type extracted from each value of this sequence

the key mapping function that evaluates an incoming data and returns a key.

the value mapping function that evaluates which data to extract for re-routing.

the number of values to prefetch from the source

a SFlux of SGroupedFlux grouped sequences
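
The roles of keyMapper and valueMapper can be sketched with the standard collections. This models only the grouping result on a finite input, not the streaming groups or the prefetch behaviour; `GroupBySketch` is a hypothetical name.

```scala
object GroupBySketch {
  // keyMapper picks the group; valueMapper decides what each group receives.
  def groupBy[T, K, V](source: Seq[T])(keyMapper: T => K, valueMapper: T => V): Map[K, Seq[V]] =
    source.groupBy(keyMapper).map { case (key, values) => key -> values.map(valueMapper) }

  def main(args: Array[String]): Unit = {
    val words = List("apple", "avocado", "banana")
    // Group by first letter (low cardinality), keep only each word's length.
    println(groupBy(words)(_.head, _.length))
  }
}
```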

Handle the items emitted by this SFlux by calling a biconsumer with the output sink for each onNext. At most one SynchronousSink.next(Anyref) call must be performed and/or 0 or 1 SynchronousSink.error(Throwable) or SynchronousSink.complete().

the transformed type

the handling Function2

a transformed SFlux
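
The "at most one next, optionally a terminal signal" contract can be sketched as a combined map and filter. Everything here is hypothetical: `Sink` is a minimal stand-in for SynchronousSink (error handling is omitted), and a `Seq` stands in for the sequence.

```scala
object HandleSketch {
  // Hypothetical stand-in for SynchronousSink; not the library type.
  final class Sink[R] {
    var emitted: Option[R] = None
    var completed: Boolean = false
    def next(value: R): Unit = {
      require(emitted.isEmpty, "at most one next call per handler invocation")
      emitted = Some(value)
    }
    def complete(): Unit = { completed = true }
  }

  def handle[T, R](source: Seq[T])(handler: (T, Sink[R]) => Unit): Seq[R] = {
    val out = Vector.newBuilder[R]
    val values = source.iterator
    var done = false
    while (!done && values.hasNext) {
      val sink = new Sink[R] // a fresh sink per onNext
      handler(values.next(), sink)
      sink.emitted.foreach(value => out += value)
      done = sink.completed // complete() stops further processing
    }
    out.result()
  }

  def main(args: Array[String]): Unit = {
    val result = handle[Int, String](List(1, 2, 3, 4, 5, 6)) { (i, sink) =>
      if (i == 5) sink.complete()                // terminate early
      else if (i % 2 == 0) sink.next(s"even $i") // map; odd values are dropped
    }
    println(result) // Vector(even 2, even 4)
  }
}
```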

Return true if terminated with onComplete

true if terminated with onComplete

Return true if any Subscriber is actively subscribed

true if any Subscriber is actively subscribed

Return true if terminated with onError

true if terminated with onError

Return true if this FluxProcessor supports multithread producing

true if this FluxProcessor supports multithread producing

Has this upstream finished or "completed" / "failed" ?

has this upstream finished or "completed" / "failed" ?

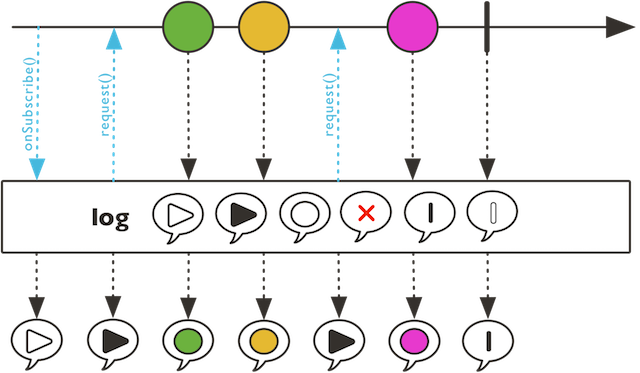

Observe all Reactive Streams signals and use Logger support to handle trace implementation. Default will use Level.INFO and java.util.logging. If SLF4J is available, it will be used instead.

The default log category will be "reactor.*", completed by a generated operator suffix, e.g. "reactor.Flux.Map".

a new unaltered SFlux

Transform the items emitted by this SFlux by applying a synchronous function to each item.

the transformed type

the synchronous transforming Function1

a transformed SFlux

Activate metrics for this sequence, provided there is an instrumentation facade on the classpath (otherwise this method is a pure no-op).

Metrics are gathered on Subscriber events, and it is recommended to also name (and optionally tag) the sequence.

an instrumented SFlux

Check this Scannable and its Scannable.parents() for a name and return the first one that is reachable.

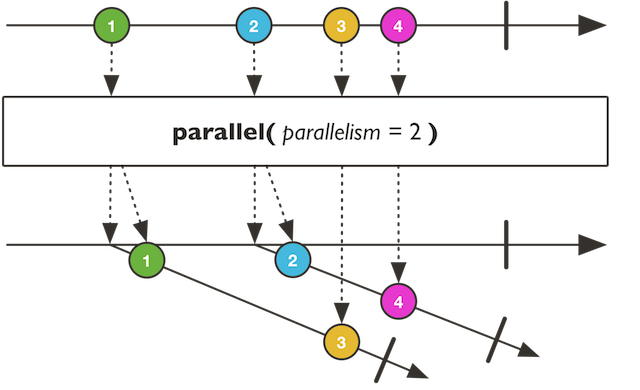

Prepare to consume this SFlux on a number of 'rails' matching the number of CPU cores, in round-robin fashion.

the number of parallel rails

the number of values to prefetch from the source

a new SParallelFlux instance
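
Round-robin distribution onto rails can be pictured with a plain collection. This sketch shows only which element lands on which rail; it says nothing about threads, schedulers or prefetch, and `RailsSketch` and `toRails` are hypothetical names.

```scala
object RailsSketch {
  // Element i goes to rail (i % parallelism), i.e. round-robin.
  def toRails[T](source: Seq[T], parallelism: Int): Vector[Vector[T]] = {
    require(parallelism > 0, "parallelism must be positive")
    val indexed = source.zipWithIndex
    Vector.tabulate(parallelism) { rail =>
      indexed.collect { case (value, index) if index % parallelism == rail => value }.toVector
    }
  }

  def main(args: Array[String]): Unit = {
    println(toRails(List(1, 2, 3, 4, 5, 6, 7), parallelism = 3))
    // Vector(Vector(1, 4, 7), Vector(2, 5), Vector(3, 6))
  }
}
```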

Return a Stream navigating the org.reactivestreams.Subscription chain (upward).

a Stream navigating the org.reactivestreams.Subscription chain (upward)

Multiply all elements within this SFlux, given that the element type is Numeric.

Prepare a ConnectableSFlux which shares this SFlux sequence and dispatches values to subscribers in a backpressure-aware manner. This will effectively turn any type of sequence into a hot sequence.

Backpressure will be coordinated on org.reactivestreams.Subscription.request and if any Subscriber is missing demand (requested = 0), multicast will pause pushing/pulling.

bounded requested demand

a new ConnectableSFlux

Prepare a SMono which shares this SFlux sequence and dispatches the first observed item to subscribers in a backpressure-aware manner.

Retries this SFlux in response to signals emitted by a companion Publisher.

The companion is generated by the provided Retry instance, see Retry#max(long), Retry#maxInARow(long) and Retry#backoff(long, Duration) for readily available strategy builders.

The operator generates a base for the companion, a SFlux of reactor.util.retry.Retry.RetrySignal, each of which gives metadata about a retryable failure whenever this SFlux signals an error. The final companion should be derived from that base companion and emit data in response to incoming onNext (although it can emit fewer elements, or delay the emissions).

Terminal signals in the companion terminate the sequence with the same signal, so emitting a Subscriber#onError(Throwable) will fail the resulting SFlux with that same error.

Note that the Retry.RetrySignal state can be transient and change between each source onError or onNext. If processed with a delay, this could lead to the represented state being out of sync with the state at which the retry was evaluated. Map it to Retry.RetrySignal#copy() right away to mediate this.

Note that if the companion Publisher created by the whenFactory emits reactor.util.context.Context as trigger objects, these reactor.util.context.Context will be merged with the previous Context:

    Retry customStrategy = Retry.fromFunction(companion -> companion.handle((retrySignal, sink) -> {
        Context ctx = sink.currentContext();
        int rl = ctx.getOrDefault("retriesLeft", 0);
        if (rl > 0) {
            sink.next(Context.of(
                "retriesLeft", rl - 1,
                "lastError", retrySignal.failure()
            ));
        } else {
            sink.error(Exceptions.retryExhausted("retries exhausted", retrySignal.failure()));
        }
    }));
    Flux<T> retried = originalFlux.retryWhen(customStrategy);

a SFlux that retries on onError when a companion Publisher produces an onNext signal

Retry.backoff(long, Duration)

Retry.maxInARow(long)

Retry.max(long)

Sample this SFlux by periodically emitting an item corresponding to this SFlux's latest emitted value within the periodic time window. Note that if some elements are emitted quicker than the timespan just before source completion, the last of these elements will be emitted along with the onComplete signal.

the duration of the window after which to emit the latest observed item

a SFlux sampled to the last item seen over each periodic window

Repeatedly take a value from this SFlux then skip the values that follow within a given duration.

Introspect a component's specific state attribute, returning an associated value specific to that component, or the default value associated with the key, or null if the attribute doesn't make sense for that particular component and has no sensible default.

an Attr to resolve for the component.

a value associated to the key or None if unmatched or unresolved

Reduce this SFlux values with an accumulator Function2 and also emit the intermediate results of this function.

The accumulation works as follows:

result[0] = initialValue;

result[1] = accumulator(result[0], source[0])

result[2] = accumulator(result[1], source[1])

result[3] = accumulator(result[2], source[2])

...

the accumulated type

the initial value

an accumulating SFlux starting with initial state
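
On a finite collection the same recurrence is what the standard library's `scanLeft` computes, which makes for a compact illustration. `ScanSketch` is a hypothetical name, and the sketch ignores the asynchronous nature of the real operator.

```scala
object ScanSketch {
  // result(0) = initial; result(i + 1) = accumulator(result(i), source(i))
  def scan[T, A](source: Seq[T], initial: A)(accumulator: (A, T) => A): Seq[A] =
    source.scanLeft(initial)(accumulator)

  def main(args: Array[String]): Unit = {
    println(scan(List(1, 2, 3), 0)(_ + _)) // List(0, 1, 3, 6)
  }
}
```

Note that the output holds one more element than the input, because the initial value is emitted first.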

Reduce this SFlux values with an accumulator Function2 and also emit the intermediate results of this function.

Unlike the scan variant that takes an initial value, this operator treats the first SFlux value as the initial value.

The accumulation works as follows:

result[0] = source[0]

result[1] = accumulator(result[0], source[1])

result[2] = accumulator(result[1], source[2])

result[3] = accumulator(result[2], source[3])

...

the accumulating Function2

an accumulating SFlux
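
The seedless recurrence can be sketched the same way, using the first element as the seed. `ScanNoSeedSketch` is a hypothetical name, and returning an empty result for an empty input is an assumption of this model.

```scala
object ScanNoSeedSketch {
  // result(0) = source(0); result(i) = accumulator(result(i - 1), source(i))
  def scan[T](source: Seq[T])(accumulator: (T, T) => T): Seq[T] =
    if (source.isEmpty) Seq.empty[T]
    else source.tail.scanLeft(source.head)(accumulator)

  def main(args: Array[String]): Unit = {
    println(scan(List(1, 2, 3, 4))(_ + _)) // List(1, 3, 6, 10)
  }
}
```

Here the output has exactly as many elements as the input.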

Introspect a component's specific state attribute. If there's no specific value in the component for that key, fall back to returning the provided non-null default.

an Attr to resolve for the component.

a fallback value if the key resolves to null

a value associated to the key or the provided default if unmatched or unresolved

This method is used internally by components to define their key-value mappings in a single place. Although it is ignoring the generic type of the Attr key, implementors should take care to return values of the correct type, and return None if no specific value is available.

For public consumption of attributes, prefer using Scannable.scan(Attr), which will return a typed value and fall back to the key's default if the component didn't define any mapping.

an Attr to resolve for the component.

the value associated to the key for that specific component, or null if none.

Reduce this SFlux values with an accumulator Function2 and also emit the intermediate results of this function.

The accumulation works as follows:

result[0] = initialValue;

result[1] = accumulator(result[0], source[0])

result[2] = accumulator(result[1], source[1])

result[3] = accumulator(result[2], source[2])

...

the accumulated type

the initial value computation

the accumulating Function2

an accumulating SFlux starting with initial state

Create a FluxProcessor that safely gates multi-threaded producers.

a serializing FluxProcessor

Returns the serialization strategy. If true, FluxProcessor.sink() will always be serialized. Otherwise the sink is serialized only if FluxSink.onRequest is invoked.

true to serialize any sink, false to delay serialization till onRequest

Create a FluxSink that safely gates multi-threaded producer Subscriber.onNext.

The returned FluxSink will not apply any FluxSink.OverflowStrategy and overflowing FluxSink.next will behave in two possible ways depending on the Processor:

the overflow strategy, see FluxSink.OverflowStrategy for the available strategies

a serializing FluxSink

Create a FluxSink that safely gates multi-threaded producer Subscriber.onNext.

The returned FluxSink will not apply any FluxSink.OverflowStrategy and overflowing FluxSink.next will behave in two possible ways depending on the Processor:

a serializing FluxSink

Prepend the given Publisher sequence to this SFlux sequence.

Return a meaningful String representation of this Scannable in its chain of Scannable.parents and Scannable.actuals.

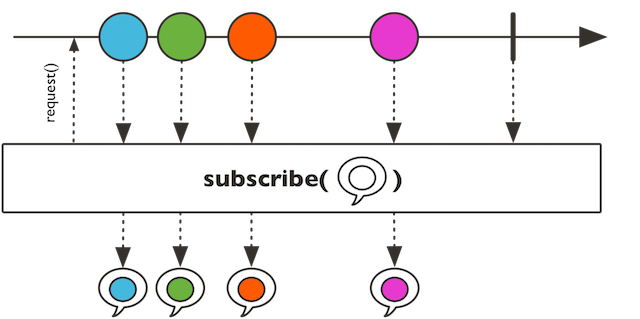

Subscribe to this SFlux and request unbounded demand.

This version doesn't specify any consumption behavior for the events from the chain, especially no error handling, so other variants should usually be preferred.

a new Disposable that can be used to cancel the underlying org.reactivestreams.Subscription

Subscribe a consumer to this SFlux that will consume the whole sequence. It will request an unbounded demand.

For a passive version that observes and forwards incoming data, see SFlux.doOnNext.

For a version that gives you more control over backpressure and the request, see SFlux.subscribe with a reactor.core.publisher.BaseSubscriber.

the consumer to invoke on each value

the consumer to invoke on error signal

the consumer to invoke on complete signal

a new Disposable to dispose the org.reactivestreams.Subscription

Provide an alternative if this sequence is completed without any data

Visit this Scannable and its Scannable.parents() and stream all the observed tags

Alias for skip(1)

(Since version reactor-scala-extensions 0.5.0) will be removed, use transformDeferred() instead

Use foldWith instead

Use retryWhen(Retry)

A base processor that exposes SFlux API for org.reactivestreams.Processor.

Implementors include reactor.core.publisher.UnicastProcessor, reactor.core.publisher.EmitterProcessor, reactor.core.publisher.ReplayProcessor, reactor.core.publisher.WorkQueueProcessor and reactor.core.publisher.TopicProcessor.

the input value type

the output value type