tf

Method for combining sparse embeddings.

Constant padding mode.

The op pads input with zeros according to the paddings you specify. paddings is an integer tensor with shape [n, 2], where n is the rank of input. For each dimension D of input, paddings(D, 0) indicates how many zeros to add before the contents of input in that dimension, and paddings(D, 1) indicates how many zeros to add after the contents of input in that dimension.

The padded size of each dimension D of the output is equal to paddings(D, 0) + input.shape(D) + paddings(D, 1).

For example:

    // 'input' = [[1, 2, 3], [4, 5, 6]]
    // 'paddings' = [[1, 1], [2, 2]]
    tf.pad(input, paddings, tf.ConstantPadding(0)) ==>
      [[0, 0, 0, 0, 0, 0, 0],
       [0, 0, 1, 2, 3, 0, 0],
       [0, 0, 4, 5, 6, 0, 0],
       [0, 0, 0, 0, 0, 0, 0]]
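The padding rule above can be sketched in plain Scala, without any TensorFlow dependency. This is only an illustration for the 2-D case; the object name `ConstantPadSketch` and the tuple encoding of `paddings` are assumptions made for the example, not part of the API.

```scala
object ConstantPadSketch {
  // Pads a 2-D matrix with `value`. paddings(d) = (before, after) for dimension d.
  def pad(
      input: Vector[Vector[Int]],
      paddings: Vector[(Int, Int)],
      value: Int = 0
  ): Vector[Vector[Int]] = {
    val (top, bottom) = paddings(0)
    val (left, right) = paddings(1)
    val inputWidth = input.headOption.map(_.size).getOrElse(0)
    // A full-width row made only of padding values.
    val blankRow = Vector.fill(left + inputWidth + right)(value)
    val paddedRows =
      input.map(row => Vector.fill(left)(value) ++ row ++ Vector.fill(right)(value))
    Vector.fill(top)(blankRow) ++ paddedRows ++ Vector.fill(bottom)(blankRow)
  }
}
```

Calling `ConstantPadSketch.pad(Vector(Vector(1, 2, 3), Vector(4, 5, 6)), Vector((1, 1), (2, 2)))` reproduces the 4 x 7 matrix from the example.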

Padding mode.

Partitioning strategy for the embeddings map.

Ids are assigned to partitions in a contiguous manner. In this case, 13 ids are split across 5 partitions as: [[0, 1, 2], [3, 4, 5], [6, 7, 8], [9, 10], [11, 12]].

Combines sparse embeddings by using a weighted sum divided by the total weight.

Each id is assigned to partition p = id % parameters.numPartitions. For instance, 13 ids are split across 5 partitions as: [[0, 5, 10], [1, 6, 11], [2, 7, 12], [3, 8], [4, 9]].
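The contiguous and the modulo assignment can be compared with a small plain-Scala sketch. It assumes that, in the contiguous case, the first `numIds % numPartitions` partitions each receive one extra id, which matches the 13-ids example; `PartitionSketch` and its method names are hypothetical.

```scala
object PartitionSketch {
  // Contiguous assignment: the first (numIds % numPartitions) partitions hold one extra id.
  def divPartition(id: Int, numIds: Int, numPartitions: Int): Int = {
    val base = numIds / numPartitions
    val extras = numIds % numPartitions
    if (id < extras * (base + 1)) id / (base + 1) else (id - extras) / base
  }

  // Modulo assignment: p = id % numPartitions.
  def modPartition(id: Int, numPartitions: Int): Int = id % numPartitions

  // Groups the ids 0 until numIds by the partition that `assign` picks for them.
  def split(numIds: Int, numPartitions: Int)(assign: Int => Int): Vector[Vector[Int]] =
    Vector.tabulate(numPartitions)(p => (0 until numIds).filter(assign(_) == p).toVector)
}
```

For example, `PartitionSketch.split(13, 5)(PartitionSketch.modPartition(_, 5))` groups ids 0, 5 and 10 into the first partition.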

Reflective padding mode.

The op pads input with mirrored values according to the paddings you specify. paddings is an integer tensor with shape [n, 2], where n is the rank of input. For each dimension D of input, paddings(D, 0) indicates how many values to add before the contents of input in that dimension, and paddings(D, 1) indicates how many values to add after the contents of input in that dimension. Both paddings(D, 0) and paddings(D, 1) must be no greater than input.shape(D) - 1.

The padded size of each dimension D of the output is equal to paddings(D, 0) + input.shape(D) + paddings(D, 1).

For example:

    // 'input' = [[1, 2, 3], [4, 5, 6]]
    // 'paddings' = [[1, 1], [2, 2]]
    tf.pad(input, paddings, tf.ReflectivePadding) ==>
      [[6, 5, 4, 5, 6, 5, 4],
       [3, 2, 1, 2, 3, 2, 1],
       [6, 5, 4, 5, 6, 5, 4],
       [3, 2, 1, 2, 3, 2, 1]]
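Reflective padding amounts to an index mapping that mirrors around the first and last element without repeating them. The following plain-Scala sketch illustrates that mapping for one and two dimensions; `ReflectPadSketch` and its helpers are hypothetical names, not library calls.

```scala
object ReflectPadSketch {
  // Maps a (possibly out-of-range) position to a source index by mirroring
  // around the edges; the edge element itself is not repeated.
  def reflectIndex(i: Int, size: Int): Int =
    if (i < 0) -i else if (i >= size) 2 * (size - 1) - i else i

  def pad1D[A](xs: Vector[A], before: Int, after: Int): Vector[A] = {
    require(before <= xs.size - 1 && after <= xs.size - 1,
      "paddings must be no greater than size - 1")
    (-before until xs.size + after).map(i => xs(reflectIndex(i, xs.size))).toVector
  }

  // Pads the columns of every row first, then mirrors whole rows.
  def pad2D[A](m: Vector[Vector[A]], rows: (Int, Int), cols: (Int, Int)): Vector[Vector[A]] =
    pad1D(m.map(pad1D(_, cols._1, cols._2)), rows._1, rows._2)
}
```

The `require` check mirrors the documented constraint that each padding is at most `input.shape(D) - 1`.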

Combines sparse embeddings by using a weighted sum.

Combines sparse embeddings by using a weighted sum divided by the square root of the sum of the squares of the weights.
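The three combination methods described in this listing (weighted sum, weighted sum divided by the total weight, and weighted sum divided by the square root of the sum of squared weights) differ only in the final normalisation. A minimal plain-Scala sketch, with hypothetical names and embeddings modelled as `Vector[Double]`:

```scala
object CombinerSketch {
  type Vec = Vector[Double]

  // Element-wise sum of the embeddings, each scaled by its weight.
  private def weightedSum(embeddings: Seq[Vec], weights: Seq[Double]): Vec =
    embeddings.zip(weights)
      .map { case (e, w) => e.map(_ * w) }
      .reduce { (a, b) => a.zip(b).map { case (x, y) => x + y } }

  def sum(embeddings: Seq[Vec], weights: Seq[Double]): Vec =
    weightedSum(embeddings, weights)

  def mean(embeddings: Seq[Vec], weights: Seq[Double]): Vec =
    weightedSum(embeddings, weights).map(_ / weights.sum)

  def sqrtN(embeddings: Seq[Vec], weights: Seq[Double]): Vec =
    weightedSum(embeddings, weights).map(_ / math.sqrt(weights.map(w => w * w).sum))
}
```

With embeddings `(1, 2)` and `(3, 4)` and weights `1` and `3`, the weighted sum is `(10, 14)` and the mean is `(2.5, 3.5)`.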

Symmetric padding mode.

The op pads input with mirrored values according to the paddings you specify. paddings is an integer tensor with shape [n, 2], where n is the rank of input. For each dimension D of input, paddings(D, 0) indicates how many values to add before the contents of input in that dimension, and paddings(D, 1) indicates how many values to add after the contents of input in that dimension. Both paddings(D, 0) and paddings(D, 1) must be no greater than input.shape(D).

The padded size of each dimension D of the output is equal to paddings(D, 0) + input.shape(D) + paddings(D, 1).

For example:

    // 'input' = [[1, 2, 3], [4, 5, 6]]
    // 'paddings' = [[1, 1], [2, 2]]
    tf.pad(input, paddings, tf.SymmetricPadding) ==>
      [[2, 1, 1, 2, 3, 3, 2],
       [2, 1, 1, 2, 3, 3, 2],
       [5, 4, 4, 5, 6, 6, 5],
       [5, 4, 4, 5, 6, 6, 5]]
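Symmetric padding also mirrors the input, but the edge element is repeated, which is why the padding may be as large as `input.shape(D)`. A plain-Scala sketch of the index mapping, using hypothetical names:

```scala
object SymmetricPadSketch {
  // Mirrors around the edges and repeats the edge element itself.
  def symmetricIndex(i: Int, size: Int): Int =
    if (i < 0) -i - 1 else if (i >= size) 2 * size - 1 - i else i

  def pad1D[A](xs: Vector[A], before: Int, after: Int): Vector[A] = {
    require(before <= xs.size && after <= xs.size, "paddings must be no greater than size")
    (-before until xs.size + after).map(i => xs(symmetricIndex(i, xs.size))).toVector
  }

  // Pads the columns of every row first, then mirrors whole rows.
  def pad2D[A](m: Vector[Vector[A]], rows: (Int, Int), cols: (Int, Int)): Vector[Vector[A]] =
    pad1D(m.map(pad1D(_, cols._1, cols._2)), rows._1, rows._2)
}
```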

The abs op computes the absolute value of a tensor.

Given a tensor x of real numbers, the op returns a tensor containing the absolute value of each element in x. For example, if x is an input element and y is an output element, the op computes y = |x|.

Given a tensor x of complex numbers, the op returns a tensor of type FLOAT32 or FLOAT64 that is the magnitude value of each element in x. All elements in x must be complex numbers of the form a + bj. The magnitude is computed as \sqrt{a^2 + b^2}. For example:

    // Tensor 'x' is [[-2.25 + 4.75j], [-3.25 + 5.75j]]
    abs(x) ==> [5.25594902, 6.60492229]
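The magnitude computation for a single complex element can be checked with a few lines of plain Scala. The `Complex` case class here is a stand-in defined for the example only; it is not a type from the library.

```scala
object ComplexAbsSketch {
  // Hypothetical stand-in for one complex tensor element a + bj.
  final case class Complex(re: Double, im: Double)

  // Magnitude sqrt(a^2 + b^2), computed with the standard library helper.
  def abs(z: Complex): Double = math.hypot(z.re, z.im)
}
```

`ComplexAbsSketch.abs(ComplexAbsSketch.Complex(-2.25, 4.75))` evaluates to roughly 5.25595, in line with the first value of the example.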

Input tensor that must be one of the following types: HALF, FLOAT32, FLOAT64, INT32, INT64, COMPLEX64, or COMPLEX128.

Name for the created op.

Created op output.

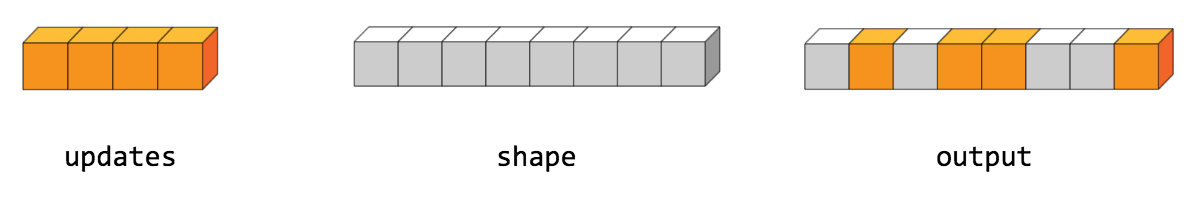

The accumulateN op adds all input tensors element-wise.

This op performs the same operation as the addN op, but it does not wait for all of its inputs to be ready before beginning to sum. This can save memory if the inputs become available at different times, since the minimum temporary storage is proportional to the output size, rather than the input size.

Input tensors.

Shape of the elements of inputs (in case it's not known statically and needs to be retained).

Created op name.

Created op output.

InvalidArgumentException If any of the inputs has a different data type and/or shape than the rest.

The acos op computes the inverse cosine of a tensor element-wise. I.e., y = acos(x).

Input tensor that must be one of the following types: HALF, FLOAT32, FLOAT64, INT32, INT64, COMPLEX64, or COMPLEX128.

Name for the created op.

Created op output.

The acosh op computes the inverse hyperbolic cosine of a tensor element-wise. I.e., y = acosh(x).

Input tensor that must be one of the following types: HALF, FLOAT32, FLOAT64, INT32, INT64, COMPLEX64, or COMPLEX128.

Name for the created op.

Created op output.

The add op adds two tensors element-wise. I.e., z = x + y.

NOTE: This op supports broadcasting. More information about broadcasting can be found [here](http://docs.scipy.org/doc/numpy/user/basics.broadcasting.html).

First input tensor that must be one of the following types: HALF, FLOAT32, FLOAT64, UINT8, INT8, INT16, INT32, INT64, COMPLEX64, COMPLEX128, or STRING.

Second input tensor that must be one of the following types: HALF, FLOAT32, FLOAT64, UINT8, INT8, INT16, INT32, INT64, COMPLEX64, COMPLEX128, or STRING.

Name for the created op.

Created op output.

The addBias op adds bias to value.

The op is (mostly) a special case of add where bias is restricted to be one-dimensional (i.e., has rank 1). Broadcasting is supported and so value may have any number of dimensions. Unlike add, the type of bias is allowed to differ from that of value in the case where both types are quantized.

Value tensor.

Bias tensor that must be one-dimensional (i.e., it must have rank 1).

Data format of the input and output tensors. With the default format NWCFormat, the bias tensor will be added to the last dimension of the value tensor. Alternatively, the format could be NCWFormat, and the bias tensor would be added to the third-to-last dimension.

Name for the created op.

Created op output.

The addN op adds all input tensors element-wise.

Input tensors.

Created op name.

Created op output.

The all op computes the logical AND of elements across axes of a tensor.

Reduces input along the axes given in axes. Unless keepDims is true, the rank of the tensor is reduced by 1 for each entry in axes. If keepDims is true, the reduced axes are retained with size 1.

If axes is null, then all axes are reduced, and a tensor with a single element is returned.

For example:

    // 'x' is [[true, true], [false, false]]
    all(x) ==> false
    all(x, 0) ==> [false, false]
    all(x, 1) ==> [true, false]
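The reduction semantics can be mimicked for a 2-D boolean matrix in plain Scala. This sketch covers only the cases from the example (all axes, axis 0, axis 1) and does not model keepDims; the names are hypothetical.

```scala
object AllReduceSketch {
  type Matrix = Vector[Vector[Boolean]]

  // Reduce over every axis: a single boolean.
  def all(x: Matrix): Boolean = x.forall(_.forall(identity))

  // Reduce over one axis of a 2-D matrix.
  def all(x: Matrix, axis: Int): Vector[Boolean] = axis match {
    case 0 => x.transpose.map(_.forall(identity)) // AND down each column
    case 1 => x.map(_.forall(identity))           // AND across each row
    case _ => throw new IllegalArgumentException(s"axis $axis is out of range for a 2-D input")
  }
}
```

Replacing `forall` with `exists` gives the corresponding logical OR reduction.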

Input tensor to reduce.

Integer tensor containing the axes to reduce. If null, then all axes are reduced.

If true, retain the reduced axes.

Name for the created op.

Created op output.

The angle op returns the element-wise complex argument of a tensor.

Given a numeric tensor input, the op returns a tensor with numbers that are the complex angle of each element in input. If the numbers in input are of the form a + bj, where *a* is the real part and *b* is the imaginary part, then the complex angle returned by this operation is of the form atan2(b, a).

For example:

    // 'input' is [-2.25 + 4.75j, 3.25 + 5.75j]
    angle(input) ==> [2.0132, 1.056]

If input is real-valued, then a tensor containing zeros is returned.
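For a single element a + bj, the angle described above is `atan2(b, a)`. A plain-Scala check of the example values, with the real and imaginary parts passed as separate doubles (an assumption made to keep the sketch free of any complex-number type):

```scala
object AngleSketch {
  // Complex argument of re + im * j, in radians.
  def angle(re: Double, im: Double): Double = math.atan2(im, re)
}
```

`AngleSketch.angle(-2.25, 4.75)` is approximately 2.0132 and `AngleSketch.angle(3.25, 5.75)` is approximately 1.056.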

Input tensor.

Name for the created op.

Created op output.

IllegalArgumentException If the provided tensor is not numeric.

The any op computes the logical OR of elements across axes of a tensor.

Reduces input along the axes given in axes. Unless keepDims is true, the rank of the tensor is reduced by 1 for each entry in axes. If keepDims is true, the reduced axes are retained with size 1.

If axes is null, then all axes are reduced, and a tensor with a single element is returned.

For example:

    // 'x' is [[true, true], [false, false]]
    any(x) ==> true
    any(x, 0) ==> [true, true]
    any(x, 1) ==> [true, false]

Input tensor to reduce.

Integer tensor containing the axes to reduce. If null, then all axes are reduced.

If true, retain the reduced axes.

Name for the created op.

Created op output.

The approximatelyEqual op computes the truth value of abs(x - y) < tolerance element-wise.

NOTE: This op supports broadcasting. More information about broadcasting can be found [here](http://docs.scipy.org/doc/numpy/user/basics.broadcasting.html).

First input tensor.

Second input tensor.

Comparison tolerance value.

Name for the created op.

Created op output.

The argmax op returns the indices with the largest value across axes of a tensor.

Note that in case of ties the identity of the return value is not guaranteed.

Input tensor.

Integer tensor containing the axes to reduce. If null, then all axes are reduced.

Data type for the output tensor. Must be INT32 or INT64.

Name for the created op.

Created op output.

The argmin op returns the indices with the smallest value across axes of a tensor.

Note that in case of ties the identity of the return value is not guaranteed.

Input tensor.

Integer tensor containing the axes to reduce. If null, then all axes are reduced.

Name for the created op.

Created op output.

The asin op computes the inverse sine of a tensor element-wise. I.e., y = asin(x).

Input tensor that must be one of the following types: HALF, FLOAT32, FLOAT64, INT32, INT64, COMPLEX64, or COMPLEX128.

Name for the created op.

Created op output.

The asinh op computes the inverse hyperbolic sine of a tensor element-wise. I.e., y = asinh(x).

Input tensor that must be one of the following types: HALF, FLOAT32, FLOAT64, INT32, INT64, COMPLEX64, or COMPLEX128.

Name for the created op.

Created op output.

The assert op asserts that the provided condition is true.

If condition evaluates to false, then the op prints all the op outputs in data. summarize determines how many entries of the tensors to print.

Note that to ensure that assert executes, one usually attaches it as a dependency:

    // Ensure maximum element of x is smaller or equal to 1.
    val assertOp = tf.assert(tf.lessEqual(tf.max(x), 1.0), Seq(x))
    Op.createWith(controlDependencies = Set(assertOp)) {
      ... code using x ...
    }

Condition to assert.

Op outputs whose values are printed if condition is false.

Number of tensor entries to print.

Name for the created op.

Created op.

The assertAtMostNTrue op asserts that at most n of the provided predicates can evaluate to true at the same time.

Sequence containing scalar boolean tensors, representing the predicates.

Maximum number of predicates allowed to be true.

Optional message to include in the error message, if the assertion fails.

Number of tensor entries to print.

Name for the created op.

Created op.

The assertEqual op asserts that the condition x == y holds element-wise.

Example usage:

    val output = tf.createWith(controlDependencies = Set(tf.assertEqual(x, y))) { x.sum() }

The condition is satisfied if for every pair of (possibly broadcast) elements x(i), y(i), we have x(i) == y(i). If both x and y are empty, it is trivially satisfied.

First input tensor.

Second input tensor.

Optional message to include in the error message, if the assertion fails.

Op outputs whose values are printed if condition is false.

Number of tensor entries to print.

Name for the created op.

Created op.

The assertGreater op asserts that the condition x > y holds element-wise.

Example usage:

    val output = tf.createWith(controlDependencies = Set(tf.assertGreater(x, y))) { x.sum() }

The condition is satisfied if for every pair of (possibly broadcast) elements x(i), y(i), we have x(i) > y(i). If both x and y are empty, it is trivially satisfied.

First input tensor.

Second input tensor.

Optional message to include in the error message, if the assertion fails.

Op outputs whose values are printed if condition is false.

Number of tensor entries to print.

Name for the created op.

Created op.

The assertGreaterEqual op asserts that the condition x >= y holds element-wise.

Example usage:

    val output = tf.createWith(controlDependencies = Set(tf.assertGreaterEqual(x, y))) { x.sum() }

The condition is satisfied if for every pair of (possibly broadcast) elements x(i), y(i), we have x(i) >= y(i). If both x and y are empty, it is trivially satisfied.

First input tensor.

Second input tensor.

Optional message to include in the error message, if the assertion fails.

Op outputs whose values are printed if condition is false.

Number of tensor entries to print.

Name for the created op.

Created op.

The assertLess op asserts that the condition x < y holds element-wise.

Example usage:

    val output = tf.createWith(controlDependencies = Set(tf.assertLess(x, y))) { x.sum() }

The condition is satisfied if for every pair of (possibly broadcast) elements x(i), y(i), we have x(i) < y(i). If both x and y are empty, it is trivially satisfied.

First input tensor.

Second input tensor.

Optional message to include in the error message, if the assertion fails.

Op outputs whose values are printed if condition is false.

Number of tensor entries to print.

Name for the created op.

Created op.

The assertLessEqual op asserts that the condition x <= y holds element-wise.

Example usage:

    val output = tf.createWith(controlDependencies = Set(tf.assertLessEqual(x, y))) { x.sum() }

The condition is satisfied if for every pair of (possibly broadcast) elements x(i), y(i), we have x(i) <= y(i). If both x and y are empty, it is trivially satisfied.

First input tensor.

Second input tensor.

Optional message to include in the error message, if the assertion fails.

Op outputs whose values are printed if condition is false.

Number of tensor entries to print.

Name for the created op.

Created op.

The assertNear op asserts that x and y are close element-wise.

Example usage:

    val output = tf.createWith(controlDependencies = Set(tf.assertNear(x, y, relTolerance, absTolerance))) { x.sum() }

The condition is satisfied if for every pair of (possibly broadcast) elements x(i), y(i), we have tf.abs(x(i) - y(i)) <= absTolerance + relTolerance * tf.abs(y(i)). If both x and y are empty, it is trivially satisfied.
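The closeness condition can be written out directly in plain Scala. The sketch below assumes both inputs already have the same length (no broadcasting) and uses a hypothetical helper name; it only evaluates the predicate and does not create any op.

```scala
object NearSketch {
  // True if |a - b| <= absTolerance + relTolerance * |b| for every element pair.
  // Empty inputs satisfy the condition trivially.
  def isNear(
      x: Seq[Double],
      y: Seq[Double],
      relTolerance: Double,
      absTolerance: Double
  ): Boolean =
    x.zip(y).forall { case (a, b) =>
      math.abs(a - b) <= absTolerance + relTolerance * math.abs(b)
    }
}
```

Note that the bound scales with the magnitude of `y`, so the check is not symmetric in its two arguments.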

First input tensor.

Second input tensor.

Comparison relative tolerance value.

Comparison absolute tolerance value.

Optional message to include in the error message, if the assertion fails.

Op outputs whose values are printed if condition is false.

Number of tensor entries to print.

Name for the created op.

Created op.

The assertNegative op asserts that the condition input < 0 holds element-wise.

Example usage:

    val output = tf.createWith(controlDependencies = Set(tf.assertNegative(x))) { x.sum() }

If input is an empty tensor, the condition is trivially satisfied.

Input tensor to check.

Optional message to include in the error message, if the assertion fails.

Op outputs whose values are printed if condition is false.

Number of tensor entries to print.

Name for the created op.

Created op.

The assertNonNegative op asserts that the condition input >= 0 holds element-wise.

Example usage:

    val output = tf.createWith(controlDependencies = Set(tf.assertNonNegative(x))) { x.sum() }

If input is an empty tensor, the condition is trivially satisfied.

Input tensor to check.

Optional message to include in the error message, if the assertion fails.

Op outputs whose values are printed if condition is false.

Number of tensor entries to print.

Name for the created op.

Created op.

The assertNonPositive op asserts that the condition input <= 0 holds element-wise.

Example usage:

    val output = tf.createWith(controlDependencies = Set(tf.assertNonPositive(x))) { x.sum() }

If input is an empty tensor, the condition is trivially satisfied.

Input tensor to check.

Optional message to include in the error message, if the assertion fails.

Op outputs whose values are printed if condition is false.

Number of tensor entries to print.

Name for the created op.

Created op.

The assertNoneEqual op asserts that the condition x != y holds element-wise.

Example usage:

    val output = tf.createWith(controlDependencies = Set(tf.assertNoneEqual(x, y))) { x.sum() }

The condition is satisfied if for every pair of (possibly broadcast) elements x(i), y(i), we have x(i) != y(i). If both x and y are empty, it is trivially satisfied.

First input tensor.

Second input tensor.

Optional message to include in the error message, if the assertion fails.

Op outputs whose values are printed if condition is false.

Number of tensor entries to print.

Name for the created op.

Created op.

The assertPositive op asserts that the condition input > 0 holds element-wise.

Example usage:

    val output = tf.createWith(controlDependencies = Set(tf.assertPositive(x))) { x.sum() }

If input is an empty tensor, the condition is trivially satisfied.

Input tensor to check.

Optional message to include in the error message, if the assertion fails.

Op outputs whose values are printed if condition is false.

Number of tensor entries to print.

Name for the created op.

Created op.

The atan op computes the inverse tangent of a tensor element-wise. I.e., y = atan(x).

Input tensor that must be one of the following types: HALF, FLOAT32, FLOAT64, INT32, INT64, COMPLEX64, or COMPLEX128.

Name for the created op.

Created op output.

The atan2 op computes the inverse tangent of x / y element-wise, respecting signs of the arguments.

The op computes the angle \theta \in [-\pi, \pi] such that y = r \cos(\theta) and x = r \sin(\theta), where r = \sqrt{x^2 + y^2}.
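The relation between the two arguments and the returned angle can be verified numerically in plain Scala. Here `x` is the first argument (the "sine" side) and `y` the second, matching the description above; `Atan2Sketch` is a hypothetical name.

```scala
object Atan2Sketch {
  // Returns (r, theta) such that x = r * sin(theta) and y = r * cos(theta).
  def polar(x: Double, y: Double): (Double, Double) =
    (math.hypot(x, y), math.atan2(x, y))
}
```

For `x = -1` and `y = -1` the angle lands in the third quadrant (about -2.356), which a plain `atan(x / y)` could not distinguish from the first quadrant.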

First input tensor that must be one of the following types: FLOAT32 or FLOAT64.

Second input tensor that must be one of the following types: FLOAT32 or FLOAT64.

Name for the created op.

Created op output.

The atanh op computes the inverse hyperbolic tangent of a tensor element-wise. I.e., y = atanh(x).

Input tensor that must be one of the following types: HALF, FLOAT32, FLOAT64, INT32, INT64, COMPLEX64, or COMPLEX128.

Name for the created op.

Created op output.

The batchNormalization op applies batch normalization to input x, as described in http://arxiv.org/abs/1502.03167.

The op normalizes a tensor by mean and variance, and optionally applies a scale and an offset to it: offset + scale * (x - mean) / sqrt(variance + epsilon). mean, variance, offset and scale are all expected to be of one of two shapes:

1. In all generality, they can have the same number of dimensions as the input x, with identical sizes as x for the dimensions that are not normalized over (the "depth" dimension(s)), and size 1 for the others, which are being normalized over. mean and variance in this case would typically be the outputs of tf.moments(..., keepDims = true) during training, or running averages thereof during inference.

2. In the common case where the "depth" dimension is the last dimension in the input tensor x, they may be one-dimensional tensors of the same size as the "depth" dimension. This is the case, for example, for the common [batch, depth] layout of fully-connected layers, and [batch, height, width, depth] for convolutions. mean and variance in this case would typically be the outputs of tf.moments(..., keepDims = false) during training, or running averages thereof during inference.

Input tensor of arbitrary dimensionality.

Mean tensor.

Variance tensor.

Optional offset tensor, often denoted beta in equations.

Optional scale tensor, often denoted gamma in equations.

Small floating point number added to the variance to avoid division by zero.

Name for the created ops.

Batch-normalized tensor x.
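As a rough numerical illustration, the normalization can be written for a 1-D input with scalar statistics. The sketch assumes the usual formulation from the cited paper, in which the centered input is divided by the square root of the variance plus a small epsilon; the function name, the default values and the scalar parameters are all assumptions made for the example.

```scala
object BatchNormSketch {
  // offset + scale * (x - mean) / sqrt(variance + epsilon), applied element-wise.
  def normalize(
      x: Vector[Double],
      mean: Double,
      variance: Double,
      offset: Double = 0.0,
      scale: Double = 1.0,
      epsilon: Double = 1e-3
  ): Vector[Double] = {
    val inverse = scale / math.sqrt(variance + epsilon)
    x.map(v => (v - mean) * inverse + offset)
  }
}
```

With `x = Vector(1.0, 2.0, 3.0)`, `mean = 2.0`, `variance = 2.0 / 3.0` and `epsilon = 0.0`, the middle element maps to `0.0` and the last one to `sqrt(1.5)`.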

The batchToSpace op rearranges (permutes) data from batches into blocks of spatial data, followed by cropping.

More specifically, the op outputs a copy of the input tensor where values from the batch dimension are moved in spatial blocks to the height and width dimensions, followed by cropping along the height and width dimensions. This is the reverse functionality to that of spaceToBatch.

input is a 4-dimensional input tensor with shape [batch * blockSize * blockSize, heightPad / blockSize, widthPad / blockSize, depth].

crops has shape [2, 2]. It specifies how many elements to crop from the intermediate result across the spatial dimensions as follows: crops = [[cropTop, cropBottom], [cropLeft, cropRight]]. The shape of the output will be: [batch, heightPad - cropTop - cropBottom, widthPad - cropLeft - cropRight, depth].

Some examples:

    // === Example #1 ===
    // input = [[[[1]]], [[[2]]], [[[3]]], [[[4]]]]  (shape = [4, 1, 1, 1])
    // blockSize = 2
    // crops = [[0, 0], [0, 0]]
    batchToSpace(input, blockSize, crops) ==> [[[[1], [2]], [[3], [4]]]]  (shape = [1, 2, 2, 1])

    // === Example #2 ===
    // input = [[[[1, 2, 3]]], [[[4, 5, 6]]], [[[7, 8, 9]]], [[[10, 11, 12]]]]  (shape = [4, 1, 1, 3])
    // blockSize = 2
    // crops = [[0, 0], [0, 0]]
    batchToSpace(input, blockSize, crops) ==>
      [[[[1, 2, 3], [4, 5, 6]],
        [[7, 8, 9], [10, 11, 12]]]]  (shape = [1, 2, 2, 3])

    // === Example #3 ===
    // input = [[[[1], [3]], [[ 9], [11]]],
    //          [[[2], [4]], [[10], [12]]],
    //          [[[5], [7]], [[13], [15]]],
    //          [[[6], [8]], [[14], [16]]]]  (shape = [4, 2, 2, 1])
    // blockSize = 2
    // crops = [[0, 0], [0, 0]]
    batchToSpace(input, blockSize, crops) ==>
      [[[[ 1], [ 2], [ 3], [ 4]],
        [[ 5], [ 6], [ 7], [ 8]],
        [[ 9], [10], [11], [12]],
        [[13], [14], [15], [16]]]]  (shape = [1, 4, 4, 1])

    // === Example #4 ===
    // input = [[[[0], [1], [3]]], [[[0], [ 9], [11]]],
    //          [[[0], [2], [4]]], [[[0], [10], [12]]],
    //          [[[0], [5], [7]]], [[[0], [13], [15]]],
    //          [[[0], [6], [8]]], [[[0], [14], [16]]]]  (shape = [8, 1, 3, 1])
    // blockSize = 2
    // crops = [[0, 0], [2, 0]]
    batchToSpace(input, blockSize, crops) ==>
      [[[[ 1], [ 2], [ 3], [ 4]],
        [[ 5], [ 6], [ 7], [ 8]]],
       [[[ 9], [10], [11], [12]],
        [[13], [14], [15], [16]]]]  (shape = [2, 2, 4, 1])
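The data movement in these examples can be reproduced with a plain-Scala sketch. To stay short it drops the depth dimension (tensors are `[batch, height, width]`) and assumes the batch index is laid out as `(rowOffset * blockSize + colOffset) * outputBatch + b`, which is consistent with the examples above; all names are hypothetical.

```scala
object BatchToSpaceSketch {
  type Tensor3 = Vector[Vector[Vector[Int]]] // [batch, height, width], depth omitted

  def batchToSpace(
      input: Tensor3,
      blockSize: Int,
      crops: ((Int, Int), (Int, Int))
  ): Tensor3 = {
    val outBatch = input.size / (blockSize * blockSize)
    val height = input.head.size
    val width = input.head.head.size
    val ((cropTop, cropBottom), (cropLeft, cropRight)) = crops
    Vector.tabulate(outBatch) { b =>
      Vector.tabulate(height * blockSize) { r =>
        Vector.tabulate(width * blockSize) { c =>
          // The offsets inside the block select which input batch entry to read.
          val (i, j) = (r % blockSize, c % blockSize)
          input((i * blockSize + j) * outBatch + b)(r / blockSize)(c / blockSize)
        }.slice(cropLeft, width * blockSize - cropRight) // crop columns
      }.slice(cropTop, height * blockSize - cropBottom)  // crop rows
    }
  }
}
```

Feeding it the input of Example #4 (with each single-element depth vector replaced by its value) yields two 2 x 4 blocks holding 1 to 8 and 9 to 16.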

4-dimensional input tensor with shape [batch, height, width, depth].

Block size which must be greater than 1.

2-dimensional INT32 or INT64 tensor containing non-negative integers with shape [2, 2].

Name for the created op.

Created op output.

The batchToSpaceND op reshapes the "batch" dimension 0 into M + 1 dimensions of shape blockShape + [batch] and interleaves these blocks back into the grid defined by the spatial dimensions [1, ..., M], to obtain a result with the same rank as the input. The spatial dimensions of this intermediate result are then optionally cropped according to crops to produce the output. This is the reverse functionality to that of spaceToBatchND.

input is an N-dimensional tensor with shape inputShape = [batch] + spatialShape + remainingShape, where spatialShape has M dimensions.

The op is equivalent to the following steps:

1. Reshape input to reshaped of shape:

[blockShape(0), ..., blockShape(M-1), batch / product(blockShape), inputShape(1), ..., inputShape(N-1)]

2. Permute dimensions of reshaped to produce permuted of shape:

[batch / product(blockShape), inputShape(1), blockShape(0), ..., inputShape(M), blockShape(M-1), inputShape(M+1), ..., inputShape(N-1)]

3. Reshape permuted to produce reshapedPermuted of shape:

[batch / product(blockShape), inputShape(1) * blockShape(0), ..., inputShape(M) * blockShape(M-1), inputShape(M+1), ..., inputShape(N-1)]

4. Crop the start and end of dimensions [1, ..., M] of reshapedPermuted according to crops to produce the output of shape:

[batch / product(blockShape), inputShape(1) * blockShape(0) - crops(0, 0) - crops(0, 1), ..., inputShape(M) * blockShape(M-1) - crops(M-1, 0) - crops(M-1, 1), inputShape(M+1), ..., inputShape(N-1)]
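The final output shape that results from the steps above can be computed without touching any data. A plain-Scala sketch with hypothetical names, where shapes are `Vector[Int]` and each row of crops is a `(start, end)` pair:

```scala
object BatchToSpaceNDShapeSketch {
  def outputShape(
      inputShape: Vector[Int],
      blockShape: Vector[Int],
      crops: Vector[(Int, Int)]
  ): Vector[Int] = {
    val m = blockShape.size
    // The batch dimension shrinks by the product of the block sizes.
    val batch = inputShape.head / blockShape.product
    // Each spatial dimension grows by its block size, then loses the cropped elements.
    val spatial = (0 until m).map { i =>
      inputShape(i + 1) * blockShape(i) - crops(i)._1 - crops(i)._2
    }
    // The remaining dimensions are passed through unchanged.
    (batch +: spatial.toVector) ++ inputShape.drop(m + 1)
  }
}
```

For an input of shape `[8, 1, 3, 1]`, block shape `[2, 2]` and crops `[[0, 0], [2, 0]]`, this gives `[2, 2, 4, 1]`.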

Some examples:

    // === Example #1 ===
    // input = [[[[1]]], [[[2]]], [[[3]]], [[[4]]]]  (shape = [4, 1, 1, 1])
    // blockShape = [2, 2]
    // crops = [[0, 0], [0, 0]]
    batchToSpaceND(input, blockShape, crops) ==> [[[[1], [2]], [[3], [4]]]]  (shape = [1, 2, 2, 1])

    // === Example #2 ===
    // input = [[[[1, 2, 3]]], [[[4, 5, 6]]], [[[7, 8, 9]]], [[[10, 11, 12]]]]  (shape = [4, 1, 1, 3])
    // blockShape = [2, 2]
    // crops = [[0, 0], [0, 0]]
    batchToSpaceND(input, blockShape, crops) ==>
      [[[[1, 2, 3], [4, 5, 6]],
        [[7, 8, 9], [10, 11, 12]]]]  (shape = [1, 2, 2, 3])

    // === Example #3 ===
    // input = [[[[1], [3]], [[ 9], [11]]],
    //          [[[2], [4]], [[10], [12]]],
    //          [[[5], [7]], [[13], [15]]],
    //          [[[6], [8]], [[14], [16]]]]  (shape = [4, 2, 2, 1])
    // blockShape = [2, 2]
    // crops = [[0, 0], [0, 0]]
    batchToSpaceND(input, blockShape, crops) ==>
      [[[[ 1], [ 2], [ 3], [ 4]],
        [[ 5], [ 6], [ 7], [ 8]],
        [[ 9], [10], [11], [12]],
        [[13], [14], [15], [16]]]]  (shape = [1, 4, 4, 1])

    // === Example #4 ===
    // input = [[[[0], [1], [3]]], [[[0], [ 9], [11]]],
    //          [[[0], [2], [4]]], [[[0], [10], [12]]],
    //          [[[0], [5], [7]]], [[[0], [13], [15]]],
    //          [[[0], [6], [8]]], [[[0], [14], [16]]]]  (shape = [8, 1, 3, 1])
    // blockShape = [2, 2]
    // crops = [[0, 0], [2, 0]]
    batchToSpaceND(input, blockShape, crops) ==>
      [[[[ 1], [ 2], [ 3], [ 4]],
        [[ 5], [ 6], [ 7], [ 8]]],
       [[[ 9], [10], [11], [12]],
        [[13], [14], [15], [16]]]]  (shape = [2, 2, 4, 1])

N-dimensional tensor with shape inputShape = [batch] + spatialShape + remainingShape, where spatialShape has M dimensions.

One-dimensional INT32 or INT64 tensor with shape [M] whose elements must all be >= 1.

Two-dimensional INT32 or INT64 tensor with shape [M, 2] whose elements must all be non-negative. crops(i) = [cropStart, cropEnd] specifies the amount to crop from input dimension i + 1, which corresponds to spatial dimension i. It is required that cropStart(i) + cropEnd(i) <= blockShape(i) * inputShape(i + 1).

Name for the created op.

Created op output.

The bidirectionalDynamicRNN op creates a bidirectional recurrent neural network (RNN) specified by the provided RNN cell. The op performs fully dynamic unrolling of the forward and backward RNNs.

The op takes the inputs and builds independent forward and backward RNNs. The output sizes of the forward and the backward RNN cells must match. The initial state for both directions can be provided, and no intermediate states are ever returned: the network is fully unrolled for the provided sequence length(s), or completely unrolled if no sequence lengths are provided.

RNN cell to use for the forward direction.

RNN cell to use for the backward direction.

Input to the RNN loop.

Initial state to use for the forward RNN, which is a sequence of tensors with shapes [batchSize, stateSize(i)], where i corresponds to the index in that sequence. Defaults to a zero state.

Initial state to use for the backward RNN, which is a sequence of tensors with shapes [batchSize, stateSize(i)], where i corresponds to the index in that sequence. Defaults to a zero state.

Boolean value indicating whether the inputs are provided in time-major format (i.e., have shape [time, batch, depth]) or in batch-major format (i.e., have shape [batch, time, depth]).

Number of RNN loop iterations allowed to run in parallel.

If true, GPU-CPU memory swapping support is enabled for the RNN loop.

Optional INT32 tensor with shape [batchSize] containing the sequence lengths for each row in the batch.

Name prefix to use for the created ops.

Tuple containing: (i) the forward RNN cell tuple after the forward dynamic RNN loop is completed, and (ii) the backward RNN cell tuple after the backward dynamic RNN loop is completed. The output of these tuples has a time axis prepended to the shape of each tensor and corresponds to the RNN outputs at each iteration in the loop. The state represents the RNN state at the end of the loop.

InvalidShapeException If the inputs or the provided sequence lengths have invalid or unknown shapes.

The binCount op counts the number of occurrences of each value in an integer tensor.

If minLength and maxLength are not provided, the op returns a vector with length max(input) + 1, if input is non-empty, and length 0 otherwise.

If weights is not null, then index i of the output stores the sum of the value in weights at each index where the corresponding value in input is equal to i.
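The counting rule, including the optional weights and length bounds, can be sketched in plain Scala. This version models the nullable arguments as `Option` values and always returns `Double` counts, which is a simplification made for the example; the names are hypothetical.

```scala
object BinCountSketch {
  def binCount(
      input: Seq[Int],
      weights: Option[Seq[Double]] = None,
      minLength: Option[Int] = None,
      maxLength: Option[Int] = None
  ): Vector[Double] = {
    // Without bounds, the output has one bin per value in 0 to max(input).
    val naturalLength = if (input.isEmpty) 0 else input.max + 1
    val atLeast = minLength.fold(naturalLength)(math.max(naturalLength, _))
    val length = maxLength.fold(atLeast)(math.min(atLeast, _))
    val w = weights.getOrElse(Seq.fill(input.size)(1.0))
    val bins = Array.fill(length)(0.0)
    // Values that fall outside the output length are skipped.
    input.zip(w).foreach { case (v, weight) => if (v < length) bins(v) += weight }
    bins.toVector
  }
}
```

For instance, `BinCountSketch.binCount(Seq(1, 1, 2, 4))` returns `Vector(0.0, 2.0, 1.0, 0.0, 1.0)`.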

INT32 tensor containing non-negative values.

If not null, this tensor must have the same shape as input. For each value in input, the corresponding bin count will be incremented by the corresponding weight instead of 1.

If not null, this ensures the output has length at least minLength, padding with zeros at the end, if necessary.

If not null, this skips values in input that are greater than or equal to maxLength, ensuring that the output has length at most maxLength.

If weights is null, this determines the data type used for the output tensor (i.e., the tensor containing the bin counts).

Name for the created op.

Created op output.

The bitcast op bitcasts a tensor from one type to another without copying data.

Given a tensor input, the op returns a tensor that has the same buffer data as input, but with data type

dataType. If the input data type T is larger (in terms of number of bytes) than the output data type

dataType, then the shape changes from [...] to [..., sizeof(T)/sizeof(dataType)]. If T is smaller than

dataType, then the op requires that the rightmost dimension be equal to sizeof(dataType)/sizeof(T). The

shape then changes from [..., sizeof(dataType)/sizeof(T)] to [...].
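
The shape rule can be written down as a small helper. This is a sketch only: bitcastShape is a hypothetical name, and the element sizes are passed in as byte counts rather than data types.

```scala
// Hypothetical helper: output shape of a bitcast, given element sizes in bytes.
def bitcastShape(shape: Seq[Int], inBytes: Int, outBytes: Int): Seq[Int] =
  if (inBytes == outBytes) {
    shape // same element size: shape is unchanged
  } else if (inBytes > outBytes) {
    require(inBytes % outBytes == 0, "sizes must divide evenly")
    shape :+ (inBytes / outBytes) // [...] becomes [..., sizeof(T)/sizeof(dataType)]
  } else {
    require(outBytes % inBytes == 0, "sizes must divide evenly")
    require(shape.nonEmpty && shape.last == outBytes / inBytes,
      "rightmost dimension must equal sizeof(dataType)/sizeof(T)")
    shape.init // [..., sizeof(dataType)/sizeof(T)] becomes [...]
  }
```

For example, viewing a 4-byte type as a 1-byte type turns shape [2, 3] into [2, 3, 4], and the reverse direction turns [2, 4] into [2].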

*NOTE*: Bitcast is implemented as a low-level cast, so machines with different endian orderings will give different results.

Input tensor.

Target data type.

Name for the created op.

Created op output.

The booleanMask op applies the provided boolean mask to input.

In general, 0 < mask.rank = K <= tensor.rank, and mask's shape must match the first K dimensions of

tensor's shape. We then have:

booleanMask(tensor, mask)(i, j1, ..., jd) = tensor(i1, ..., iK, j1, ..., jd), where (i1, ..., iK) is the

ith true entry of mask (in row-major order).

For example:

    // 1-D example
    tensor = [0, 1, 2, 3]
    mask = [True, False, True, False]
    booleanMask(tensor, mask) ==> [0, 2]

    // 2-D example
    tensor = [[1, 2], [3, 4], [5, 6]]
    mask = [True, False, True]
    booleanMask(tensor, mask) ==> [[1, 2], [5, 6]]
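
For the common case of a 1-D mask (K = 1), the same selection can be sketched on plain Scala sequences. This is an illustration of the semantics, not the op itself; for a 2-D input, each element of the outer sequence is a row.

```scala
// Illustrative 1-D-mask version of booleanMask on plain Scala sequences.
// Each element of `input` is either a scalar or a whole sub-tensor (e.g., a row).
def booleanMask[A](input: Seq[A], mask: Seq[Boolean]): Seq[A] = {
  require(input.length == mask.length, "mask must match the first dimension of input")
  // Keep the entries whose mask value is true, preserving order.
  input.zip(mask).collect { case (v, true) => v }
}
```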

N-dimensional tensor.

K-dimensional boolean tensor, where K <= N and K must be known statically.

Name for the created op.

Created op output.

The broadcastGradientArguments op returns the reduction indices for computing the gradients of shape0

[operator] shape1 with broadcasting.

This is typically used by gradient computations for broadcasting operations.

First operand shape.

Second operand shape.

Name for the created op.

Tuple containing two op outputs, each containing the reduction indices for the corresponding op.

The broadcastShape op returns the broadcasted dynamic shape between two provided shapes, corresponding to the

shapes of the two arguments provided to an op that supports broadcasting.

One-dimensional integer tensor representing the shape of the first argument.

One-dimensional integer tensor representing the shape of the second argument.

Name for the created op.

Created op output, which is a one-dimensional integer tensor representing the broadcasted shape.

The broadcastTo op returns a tensor with its shape broadcast to the provided shape. Broadcasting is the

process of making arrays have compatible shapes for arithmetic operations. Two shapes are compatible if for

each dimension pair they are either equal or one of them is one. When trying to broadcast a tensor to a shape,

the op starts with the trailing dimension, and works its way forward.

For example:

    val x = tf.constant(Tensor(1, 2, 3))
    val y = tf.broadcastTo(x, Seq(3, 3))
    y ==> [[1, 2, 3], [1, 2, 3], [1, 2, 3]]

In the above example, the input tensor with shape [3] is broadcast to the output tensor with

shape [3, 3].
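
The compatibility rule (dimensions are compared starting from the trailing one, and each pair must be equal or contain a 1) can be sketched as a pure function on shapes. The function below is illustrative only; it works on Seq[Int] shapes and returns None for incompatible shapes, which is an assumption of this sketch rather than the behavior of the op.

```scala
// Illustrative broadcast-shape computation on plain shapes (no TensorFlow dependency).
def broadcastShape(shape1: Seq[Int], shape2: Seq[Int]): Option[Seq[Int]] = {
  val rank = math.max(shape1.length, shape2.length)
  // Left-pad the shorter shape with ones so that trailing dimensions line up.
  val a = Seq.fill(rank - shape1.length)(1) ++ shape1
  val b = Seq.fill(rank - shape2.length)(1) ++ shape2
  val dims = a.zip(b).map {
    case (x, y) if x == y => Some(x)
    case (1, y)           => Some(y)
    case (x, 1)           => Some(x)
    case _                => None // neither equal nor one: incompatible
  }
  if (dims.forall(_.isDefined)) Some(dims.flatten) else None
}
```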

Tensor to broadcast.

Shape to broadcast the provided tensor to.

Name for the created op.

Created op output.

The bucketize op bucketizes a tensor based on the provided boundaries.

For example:

    // 'input' is [[-5, 10000], [150, 10], [5, 100]]
    // 'boundaries' are [0, 10, 100]
    bucketize(input, boundaries) ==> [[0, 3], [3, 2], [1, 3]]
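
Consistent with the example above, the bucket index of a value can be read as the number of boundaries that are less than or equal to it. The sketch below assumes that reading and a sorted boundaries sequence, and operates on a flat Seq[Double] instead of a tensor.

```scala
// Illustrative bucketize on a flat sequence; `boundaries` is assumed to be sorted.
def bucketize(input: Seq[Double], boundaries: Seq[Double]): Seq[Int] =
  // The bucket index is the count of boundaries <= x.
  input.map(x => boundaries.count(_ <= x))
```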

Numeric tensor to bucketize.

Sorted sequence of Floats specifying the boundaries of the buckets.

Name for the created op.

Created op output.

$OpDocCallbackCallback

Scala function input type (e.g., Tensor).

Op input type, which is the symbolic type corresponding to T (e.g., Output).

Scala function output type (e.g., Tensor).

Op output type, which is the symbolic type corresponding to R (e.g., Output).

Structure of data types corresponding to R (e.g., DataType).

Scala function to use for the callback op.

Input for the created op.

Data types of the Scala function outputs.

If true, the function should be considered stateful. If a function is stateless, when

given the same input it will return the same output and have no observable side effects.

Optimizations such as common subexpression elimination are only performed on stateless

operations.

Name for the created op.

Created op output.

The cases op creates a case operation.

The predicateFnPairs parameter is a sequence of pairs. Each pair contains a boolean scalar tensor and a

function that takes no parameters and creates the tensors to be returned if the boolean evaluates to true.

default is a function that returns the default value, used when all provided predicates evaluate to false.

All functions in predicateFnPairs as well as default (if provided) should return the same structure of

tensors, and with matching data types. If exclusive == true, all predicates are evaluated, and an exception is

thrown if more than one of the predicates evaluates to true. If exclusive == false, execution stops at the

first predicate which evaluates to true, and the tensors generated by the corresponding function are returned

immediately. If none of the predicates evaluate to true, the operation returns the tensors generated by

default.

Example 1:

    // r = if (x < y) 17 else 23.
    val r = tf.cases(
      Seq(x < y -> () => tf.constant(17)),
      default = () => tf.constant(23))

Example 2:

    // if (x < y && x > z) throw error.
    // r = if (x < y) 17 else if (x > z) 23 else -1.
    val r = tf.cases(
      Seq(x < y -> () => tf.constant(17), x > z -> () => tf.constant(23)),
      default = () => tf.constant(-1),
      exclusive = true)
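
The selection logic described above can be mimicked eagerly with ordinary Scala values. This is an analogy for the semantics only: it uses plain Boolean predicates and thunks, it builds no graph, and the exception type thrown in the exclusive case is an assumption of this sketch.

```scala
// Eager analogy of the cases semantics on plain Scala values (not the graph op).
def cases[A](
    predicateFnPairs: Seq[(Boolean, () => A)],
    default: () => A,
    exclusive: Boolean = false): A = {
  // With exclusive = true, at most one predicate may hold.
  if (exclusive)
    require(predicateFnPairs.count(_._1) <= 1, "more than one predicate evaluates to true")
  // Otherwise the first true predicate wins, and default is the fallback.
  predicateFnPairs.collectFirst { case (true, fn) => fn() }.getOrElse(default())
}
```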

Contains pairs of predicates and value functions for those predicates.

Default return value function, in case all predicates evaluate to false.

If true, only one of the predicates is allowed to be true at the same time.

Name prefix for the created ops.

Created op output structure, mirroring the return structure of the provided predicate functions.

InvalidDataTypeException If the data types of the tensors returned by the provided predicate functions

do not match.

The cast op casts a tensor to a new data type.

The op casts x to the provided data type.

For example:

    // `a` is a tensor with values [1.8, 2.2], and data type FLOAT32
    cast(a, INT32) ==> [1, 2] // with data type INT32

**NOTE**: Only a small number of types are supported by the cast op. The exact casting rule is TBD. The

current implementation uses C++ static cast rules for numeric types, which may be changed in the future.

Tensor to cast.

Target data type.

Name for the created op.

Created op output.

The ceil op computes the smallest integer not less than the current value of a tensor, element-wise.

Input tensor that must be one of the following types: HALF, FLOAT32, or FLOAT64.

Name for the created op.

Created op output.

The checkNumerics op checks a tensor for NaN and Inf values.

When run, reports an InvalidArgument error if input has any values that are not-a-number (NaN) or infinity

(Inf). Otherwise, it acts as an identity op and passes input to the output, as-is.

Input tensor.

Prefix to print for the error message.

Name for the created op.

Created op output, which has the same value as the input tensor.

The clipByAverageNorm op clips tensor values to a specified maximum average l2-norm value.

Given a tensor input, and a maximum clip value clipNorm, the op normalizes input so that its average

l2-norm is less than or equal to clipNorm. If the average l2-norm of input is already less than or equal to

clipNorm, then input is not modified. If the average l2-norm is greater than clipNorm, then the op returns a

tensor of the same data type and shape as input, but with its values set to

input * clipNorm / l2NormAvg(input).

In this case, the average l2-norm of the output tensor is equal to clipNorm.

This op is typically used to clip gradients before applying them with an optimizer.

Input tensor.

0-D (scalar) tensor > 0, specifying the maximum clipping value.

Name prefix for created ops.

Created op output.

The clipByGlobalNorm op clips values of multiple tensors by the ratio of the sum of their norms.

Given a sequence of tensors inputs, and a clipping ratio clipNorm, the op returns a sequence of clipped

tensors clipped, along with the global norm (globalNorm) of all tensors in inputs. Optionally, if you've

already computed the global norm for inputs, you can specify the global norm with globalNorm.

To perform the clipping, the values inputs(i) are set to: inputs(i) * clipNorm / max(globalNorm, clipNorm),

where: globalNorm = sqrt(sum(inputs.map(i => l2Norm(i)^2))).

If clipNorm > globalNorm then the tensors in inputs remain as they are. Otherwise, they are all shrunk by

the global ratio.
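
The scaling rule can be sketched numerically on plain Scala sequences, with each tensor flattened to a Seq[Double]. This only illustrates the formula above and is not the library's implementation.

```scala
// Illustrative global-norm clipping; each "tensor" is a flattened Seq[Double].
def globalNorm(inputs: Seq[Seq[Double]]): Double =
  // sqrt of the sum of squared l2-norms of all tensors.
  math.sqrt(inputs.map(_.map(v => v * v).sum).sum)

def clipByGlobalNorm(
    inputs: Seq[Seq[Double]],
    clipNorm: Double): (Seq[Seq[Double]], Double) = {
  val norm = globalNorm(inputs)
  // inputs(i) * clipNorm / max(globalNorm, clipNorm): a factor of 1 when no clipping is needed.
  val scale = clipNorm / math.max(norm, clipNorm)
  (inputs.map(_.map(_ * scale)), norm)
}
```

With inputs [3] and [4] the global norm is 5, so clipNorm = 1 shrinks every value by a factor of 0.2, while clipNorm = 10 leaves the inputs unchanged.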

Any of the tensors in inputs that are null are ignored.

Note that this is generally considered as the "correct" way to perform gradient clipping (see, for example,

[Pascanu et al., 2012](http://arxiv.org/abs/1211.5063)). However, it is slower than clipByNorm() because all

the input tensors must be ready before the clipping operation can be performed.

Input tensors.

0-D (scalar) tensor > 0, specifying the maximum clipping value.

0-D (scalar) tensor containing the global norm to use. If not provided, globalNorm() is used

to compute the norm.

Name prefix for created ops.

Tuple containing the clipped tensors as well as the global norm that was used for the clipping.

The clipByNorm op clips tensor values to a specified maximum l2-norm value.

Given a tensor input, and a maximum clip value clipNorm, the op normalizes input so that its l2-norm is

less than or equal to clipNorm, along the dimensions provided in axes. Specifically, in the default case

where all dimensions are used for the calculation, if the l2-norm of input is already less than or equal to

clipNorm, then input is not modified. If the l2-norm is greater than clipNorm, then the op returns a

tensor of the same data type and shape as input, but with its values set to

input * clipNorm / l2Norm(input).

In this case, the l2-norm of the output tensor is equal to clipNorm.

As another example, if input is a matrix and axes == [1], then each row of the output will have l2-norm

less than or equal to clipNorm. If axes == [0] instead, each column of the output will be clipped.
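
For the default case where all dimensions are used, the rule reduces to a few lines on a flattened tensor. The sketch below is illustrative only and ignores the axes argument.

```scala
// Illustrative l2-norm clipping over all dimensions of a flattened tensor.
def l2Norm(x: Seq[Double]): Double = math.sqrt(x.map(v => v * v).sum)

def clipByNorm(input: Seq[Double], clipNorm: Double): Seq[Double] = {
  val norm = l2Norm(input)
  // Leave the input untouched when its norm is already within the limit.
  if (norm <= clipNorm) input else input.map(_ * clipNorm / norm)
}
```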

This op is typically used to clip gradients before applying them with an optimizer.

Input tensor.

0-D (scalar) tensor > 0, specifying the maximum clipping value.

1-D (vector) INT32 tensor containing the dimensions to use for computing the l2-norm. If

null (the default), all dimensions are used.

Name prefix for created ops.

Created op output.

The clipByValue op clips tensor values to a specified min and max value.

Given a tensor input, the op returns a tensor of the same type and shape as input, with its values clipped

to clipValueMin and clipValueMax. Any values less than clipValueMin are set to clipValueMin and any

values greater than clipValueMax are set to clipValueMax.

Input tensor.

0-D (scalar) tensor, or a tensor with the same shape as input, specifying the minimum value

to clip by.

0-D (scalar) tensor, or a tensor with the same shape as input, specifying the maximum value

to clip by.

Name prefix for created ops.

Created op output.

The complex op converts two real tensors to a complex tensor.

Given a tensor real representing the real part of a complex number, and a tensor imag representing the

imaginary part of a complex number, the op returns complex numbers element-wise of the form a + bj, where *a*

represents the real part and *b* represents the imag part. The input tensors real and imag must have the

same shape and data type.

For example:

    // 'real' is [2.25, 3.25]
    // 'imag' is [4.75, 5.75]
    complex(real, imag) ==> [[2.25 + 4.75j], [3.25 + 5.75j]]

Tensor containing the real component. Must have FLOAT32 or FLOAT64 data type.

Tensor containing the imaginary component. Must have FLOAT32 or FLOAT64 data type.

Name for the created op.

Created op output with data type being either COMPLEX64 or COMPLEX128.

The concatenate op concatenates tensors along one dimension.

The op concatenates the list of tensors inputs along the dimension axis. If

inputs(i).shape = [D0, D1, ..., Daxis(i), ..., Dn], then the concatenated tensor will have shape

[D0, D1, ..., Raxis, ..., Dn], where Raxis = sum(Daxis(i)). That is, the data from the input tensors is

joined along the axis dimension.

For example:

    // 't1' is equal to [[1, 2, 3], [4, 5, 6]]
    // 't2' is equal to [[7, 8, 9], [10, 11, 12]]
    concatenate(Array(t1, t2), 0) ==> [[1, 2, 3], [4, 5, 6], [7, 8, 9], [10, 11, 12]]
    concatenate(Array(t1, t2), 1) ==> [[1, 2, 3, 7, 8, 9], [4, 5, 6, 10, 11, 12]]

    // 't3' has shape [2, 3]
    // 't4' has shape [2, 3]
    concatenate(Array(t3, t4), 0).shape ==> [4, 3]
    concatenate(Array(t3, t4), 1).shape ==> [2, 6]
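
For matrices, the two cases in the example can be reproduced with standard collection operations. This sketch is restricted to 2-D inputs represented as Seq[Seq[Int]] and is not the library's implementation; negative axes are resolved as axis + rank.

```scala
// Illustrative concatenation of matrices (rank 2 only) along axis 0 or 1.
def concatenate(inputs: Seq[Seq[Seq[Int]]], axis: Int): Seq[Seq[Int]] = {
  val resolved = if (axis < 0) axis + 2 else axis // negative axes count from the end
  resolved match {
    case 0 => inputs.flatten                  // stack the rows of all matrices
    case 1 => inputs.transpose.map(_.flatten) // join corresponding rows side by side
    case _ => throw new IllegalArgumentException(s"Invalid axis for rank 2: $axis")
  }
}
```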

Note that, if you want to concatenate along a new axis, it may be better to use the stack op instead:

concatenate(tensors.map(t => expandDims(t, axis)), axis) == stack(tensors, axis)

Input tensors to be concatenated.

Dimension along which to concatenate the input tensors. As in Python, indexing for the axis is

0-based. Positive axes in the range of [0, rank(inputs)) refer to the axis-th dimension, and

negative axes refer to the axis + rank(inputs)-th dimension.

Name for the created op.

Created op output.

The cond op returns trueFn() if the predicate predicate is true, else falseFn().

trueFn and falseFn both return structures of tensors (e.g., lists of tensors). trueFn and falseFn must

have the same non-zero number and type of outputs. Note that the conditional execution applies only to the ops

defined in trueFn and falseFn.

For example, consider the following simple program:

    val z = tf.multiply(a, b)
    val result = tf.cond(x < y, () => tf.add(x, z), () => tf.square(y))

If x < y, the tf.add operation will be executed and the tf.square operation will not be executed. Since

z is needed for at least one branch of the cond, the tf.multiply operation is always executed,

unconditionally. Although this behavior is consistent with the data-flow model of TensorFlow, it has

occasionally surprised some users who expected lazier semantics.

Note that cond calls trueFn and falseFn *exactly once* (inside the call to cond, and not at all during

Session.run()). cond stitches together the graph fragments created during the trueFn and falseFn calls

with some additional graph nodes to ensure that the right branch gets executed depending on the value of

predicate.

cond supports nested tensor structures, similar to Session.run(). Both trueFn and falseFn must return

the same (possibly nested) value structure of sequences, tuples, and/or maps.

NOTE: If the predicate always evaluates to some constant value and that can be inferred statically, then only the corresponding branch is built and no control flow ops are added. In some cases, this can significantly improve performance.

BOOLEAN scalar determining whether to return the result of trueFn or falseFn.

Function returning the computation to be performed if predicate is true.

Function returning the computation to be performed if predicate is false.

Name prefix for the created ops.

Created op output structure, mirroring the return structure of trueFn and falseFn.

InvalidDataTypeException If the data types of the tensors returned by trueFn and falseFn do not match.

The conjugate op returns the element-wise complex conjugate of a tensor.

Given a numeric tensor input, the op returns a tensor with numbers that are the complex conjugate of each

element in input. If the numbers in input are of the form a + bj, where *a* is the real part and *b* is

the imaginary part, then the complex conjugate returned by this operation is of the form a - bj.

For example:

    // 'input' is [-2.25 + 4.75j, 3.25 + 5.75j]
    conjugate(input) ==> [-2.25 - 4.75j, 3.25 - 5.75j]

If input is real-valued, then it is returned unchanged.

Input tensor.

Name for the created op.

Created op output.

IllegalArgumentException If the provided tensor is not numeric.

The constant op returns a constant tensor.

The resulting tensor is populated with values of type dataType, as specified by the arguments value and

(optionally) shape (see examples below).

The argument value can be a constant value, or a tensor. If value is a one-dimensional tensor, then its

length should be equal to the number of elements implied by the shape argument (if specified).

The argument dataType is optional. If not specified, then its value is inferred from the type of value.

The argument shape is optional. If present, it specifies the dimensions of the resulting tensor. If not

present, the shape of value is used.

Constant value.

Data type of the resulting tensor. If not provided, its value will be inferred from the type

of value.

Shape of the resulting tensor.

Name for the created op.

Created op output.

InvalidShapeException If shape != null, verifyShape == true, and the shape of value does not match

the provided shape.

The conv2D op computes a 2-D convolution given 4-D input and filter tensors.

Given an input tensor of shape [batch, inHeight, inWidth, inChannels] and a filter / kernel tensor of shape

[filterHeight, filterWidth, inChannels, outChannels], the op performs the following:

1. Flattens the filter to a 2-D matrix with shape

[filterHeight * filterWidth * inChannels, outChannels].

2. Extracts image patches from the input tensor to form a *virtual* tensor of shape

[batch, outHeight, outWidth, filterHeight * filterWidth * inChannels].

3. For each patch, right-multiplies the filter matrix and the image patch vector.

For example, for the default NWCFormat:

output(b, i, j, k) = sum_{di, dj, q} input(b, stride1 * i + di, stride2 * j + dj, q) * filter(di, dj, q, k).

Must have strides[0] = strides[3] = 1. For the most common case of the same horizontal and vertical strides,

strides = [1, stride, stride, 1].
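
The summation formula can be evaluated directly with nested loops. The sketch below is a naive illustration under several assumptions: a single image without the batch dimension, channels-last layout, no padding (only fully covered windows are produced), and no dilation. conv2DValid is a hypothetical name, not a library function.

```scala
// Naive, illustrative evaluation of the conv2D formula for one image, without padding.
type Tensor3 = Vector[Vector[Vector[Double]]] // [height][width][channels]
type Tensor4 = Vector[Tensor3]                // [filterHeight][filterWidth][inChannels][outChannels]

def conv2DValid(input: Tensor3, filter: Tensor4, stride1: Int, stride2: Int): Tensor3 = {
  val (inHeight, inWidth, inChannels) = (input.length, input.head.length, input.head.head.length)
  val (filterHeight, filterWidth) = (filter.length, filter.head.length)
  val outChannels = filter.head.head.head.length
  // Number of window positions that fit entirely inside the input.
  val outHeight = (inHeight - filterHeight) / stride1 + 1
  val outWidth = (inWidth - filterWidth) / stride2 + 1
  Vector.tabulate(outHeight, outWidth, outChannels) { (i, j, k) =>
    // output(i, j, k) = sum over (di, dj, q) of input(...) * filter(di, dj, q, k)
    (for {
      di <- 0 until filterHeight
      dj <- 0 until filterWidth
      q  <- 0 until inChannels
    } yield input(stride1 * i + di)(stride2 * j + dj)(q) * filter(di)(dj)(q)(k)).sum
  }
}
```

As a usage example, convolving the 3 x 3 single-channel image with values 1 to 9 with a 2 x 2 filter of ones and unit strides sums each 2 x 2 window.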

4-D tensor whose dimension order is interpreted according to the value of dataFormat.

4-D tensor with shape [filterHeight, filterWidth, inChannels, outChannels].

Stride of the sliding window along the second dimension of input.

Stride of the sliding window along the third dimension of input.

Padding mode to use.

Format of the input and output data.

The dilation factor for each dimension of input. If set to k > 1, there will be k - 1

skipped cells between each filter element on that dimension. The dimension order is

determined by the value of dataFormat. Dilations in the batch and depth dimensions must

be set to 1.

Boolean value indicating whether or not to use CuDNN for the created op, if it is placed on a GPU, as opposed to the TensorFlow implementation.

Name for the created op.

Created op output, which is a 4-D tensor whose dimension order depends on the value of dataFormat.

The conv2DBackpropFilter op computes the gradient of the conv2D op with respect to its filter tensor.

4-D tensor whose dimension order is interpreted according to the value of dataFormat.

Integer vector representing the shape of the original filter, which is a 4-D tensor.

4-D tensor containing the gradients w.r.t. the output of the convolution and whose shape

depends on the value of dataFormat.

Stride of the sliding window along the second dimension of input.

Stride of the sliding window along the third dimension of input.

Padding mode to use.

Format of the input and output data.

The dilation factor for each dimension of input. If set to k > 1, there will be k - 1

skipped cells between each filter element on that dimension. The dimension order is

determined by the value of dataFormat. Dilations in the batch and depth dimensions must

be set to 1.

Boolean value indicating whether or not to use CuDNN for the created op, if it is placed on a GPU, as opposed to the TensorFlow implementation.

Name for the created op.

Created op output, which is a 4-D tensor whose dimension order depends on the value of dataFormat.

The conv2DBackpropInput op computes the gradient of the conv2D op with respect to its input tensor.

Integer vector representing the shape of the original input, which is a 4-D tensor.

4-D tensor with shape [filterHeight, filterWidth, inChannels, outChannels].

4-D tensor containing the gradients w.r.t. the output of the convolution and whose shape

depends on the value of dataFormat.

Stride of the sliding window along the second dimension of input.

Stride of the sliding window along the third dimension of input.

Padding mode to use.

Format of the input and output data.

The dilation factor for each dimension of input. If set to k > 1, there will be k - 1

skipped cells between each filter element on that dimension. The dimension order is

determined by the value of dataFormat. Dilations in the batch and depth dimensions must

be set to 1.

Boolean value indicating whether or not to use CuDNN for the created op, if it is placed on a GPU, as opposed to the TensorFlow implementation.

Name for the created op.

Created op output, which is a 4-D tensor whose dimension order depends on the value of dataFormat.

The cos op computes the cosine of a tensor element-wise. I.e., y = \cos{x}.

Input tensor that must be one of the following types: HALF, FLOAT32, FLOAT64, INT32, INT64,

COMPLEX64, or COMPLEX128.

Name for the created op.

Created op output.

The cosh op computes the hyperbolic cosine of a tensor element-wise. I.e., y = \cosh{x}.

Input tensor that must be one of the following types: HALF, FLOAT32, FLOAT64, INT32, INT64,

COMPLEX64, or COMPLEX128.

Name for the created op.

Created op output.

The countNonZero op computes the number of non-zero elements across axes of a tensor.

Reduces input along the axes given in axes. Unless keepDims is true, the rank of the tensor is reduced

by 1 for each entry in axes. If keepDims is true, the reduced axes are retained with size 1.

If axes is null, then all axes are reduced, and a tensor with a single element is returned.

IMPORTANT NOTE: Floating point comparison to zero is done by exact floating point equality check. Small values are not rounded to zero for the purposes of the non-zero check.

For example:

    // 'x' is [[0, 1, 0], [1, 1, 0]]
    countNonZero(x) ==> 3
    countNonZero(x, 0) ==> [1, 2, 0]
    countNonZero(x, 1) ==> [1, 2]
    countNonZero(x, 1, keepDims = true) ==> [[1], [2]]
    countNonZero(x, [0, 1]) ==> 3
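
The reductions in the example can be reproduced for a matrix with plain collection operations. This is an illustrative sketch for rank-2 integer inputs only; the helper names are hypothetical, and keepDims is not modeled.

```scala
// Illustrative non-zero counting for a matrix stored as Seq[Seq[Int]].
def countNonZeroAll(x: Seq[Seq[Int]]): Long =
  x.flatten.count(_ != 0).toLong

def countNonZeroAlong(x: Seq[Seq[Int]], axis: Int): Seq[Long] = axis match {
  case 0 => x.transpose.map(_.count(_ != 0).toLong) // reduce over rows: one count per column
  case 1 => x.map(_.count(_ != 0).toLong)           // reduce over columns: one count per row
  case _ => throw new IllegalArgumentException(s"Invalid axis for rank 2: $axis")
}
```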

IMPORTANT NOTE: Strings are compared against zero-length empty string "". Any string with a size greater

than zero is already considered as nonzero.

For example:

    // 'x' is ["", "a", " ", "b", ""]
    countNonZero(x) ==> 3 // "a", " ", and "b" are treated as nonzero strings.

Input tensor to reduce.

Integer array containing the axes to reduce. If null, then all axes are reduced.

If true, retain the reduced axes.

Name for the created op.

Created op output with INT64 data type.

The countNonZero op computes the number of non-zero elements across axes of a tensor.

Reduces input along the axes given in axes. Unless keepDims is true, the rank of the tensor is reduced

by 1 for each entry in axes. If keepDims is true, the reduced axes are retained with size 1.

If axes is null, then all axes are reduced, and a tensor with a single element is returned.

IMPORTANT NOTE: Floating point comparison to zero is done by exact floating point equality check. Small values are not rounded to zero for the purposes of the non-zero check.

For example:

    // 'x' is [[0, 1, 0], [1, 1, 0]]
    countNonZero(x) ==> 3
    countNonZero(x, 0) ==> [1, 2, 0]
    countNonZero(x, 1) ==> [1, 2]
    countNonZero(x, 1, keepDims = true) ==> [[1], [2]]
    countNonZero(x, [0, 1]) ==> 3

IMPORTANT NOTE: Strings are compared against zero-length empty string "". Any string with a size greater

than zero is already considered as nonzero.

For example:

    // 'x' is ["", "a", " ", "b", ""]
    countNonZero(x) ==> 3 // "a", " ", and "b" are treated as nonzero strings.

Input tensor for which to count the number of non-zero entries.

Name for the created op.

Created op output with INT64 data type.

The crelu op computes the concatenated rectified linear unit activation function.

The op concatenates a ReLU which selects only the positive part of the activation with a ReLU which selects only the *negative* part of the activation. Note that as a result this non-linearity doubles the depth of the activations.
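
On a 1-D input, the concatenation described above looks as follows. This is an illustrative sketch on Seq[Double], not the library's implementation; it shows why the output is twice as long as the input.

```scala
// Illustrative CReLU on a 1-D sequence: ReLU of the input followed by ReLU of its negation.
def relu(x: Seq[Double]): Seq[Double] = x.map(v => math.max(v, 0.0))

def crelu(x: Seq[Double]): Seq[Double] =
  relu(x) ++ relu(x.map(v => -v)) // positive part, then negative part
```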

Source: [Understanding and Improving Convolutional Neural Networks via Concatenated Rectified Linear Units](https://arxiv.org/abs/1603.05201)

Input tensor.

Axis along which the output values are concatenated.

Name for the created op.

Created op output.

The cross op computes the pairwise cross product between two tensors.

a and b must have the same shape; they can either be simple 3-element vectors, or have any shape

where the innermost dimension size is 3. In the latter case, each pair of corresponding 3-element vectors

is cross-multiplied independently.
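
A sketch of both cases on plain Scala sequences: a single pair of 3-element vectors, and a batch where corresponding vectors are cross-multiplied independently. The function names are illustrative only.

```scala
// Illustrative cross product of two 3-element vectors.
def cross(a: Seq[Double], b: Seq[Double]): Seq[Double] = {
  require(a.length == 3 && b.length == 3, "innermost dimension size must be 3")
  Seq(
    a(1) * b(2) - a(2) * b(1),
    a(2) * b(0) - a(0) * b(2),
    a(0) * b(1) - a(1) * b(0))
}

// Batched case: each pair of corresponding vectors is handled independently.
def crossBatch(a: Seq[Seq[Double]], b: Seq[Seq[Double]]): Seq[Seq[Double]] = {
  require(a.length == b.length, "a and b must have the same shape")
  a.zip(b).map { case (u, v) => cross(u, v) }
}
```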

First input tensor.

Second input tensor.

Name for the created op.

Created op output.

The cumprod op computes the cumulative product of the tensor along an axis.

By default, the op performs an inclusive cumulative product, which means that the first element of the input is identical to the first element of the output:

cumprod([a, b, c]) ==> [a, a * b, a * b * c]

By setting the exclusive argument to true, an exclusive cumulative product is performed instead:

cumprod([a, b, c], exclusive = true) ==> [1, a, a * b]

By setting the reverse argument to true, the cumulative product is performed in the opposite direction:

cumprod([a, b, c], reverse = true) ==> [a * b * c, b * c, c]

This is more efficient than using separate Basic.reverse ops.

The reverse and exclusive arguments can also be combined:

cumprod([a, b, c], exclusive = true, reverse = true) ==> [b * c, c, 1]
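
All four combinations can be sketched with a single scan on a 1-D sequence. The sketch is illustrative and not the library's implementation; note that the exclusive variant starts from the multiplicative identity 1.

```scala
// Illustrative cumulative product on a 1-D sequence.
def cumprod(
    x: Seq[Double],
    exclusive: Boolean = false,
    reverse: Boolean = false): Seq[Double] = {
  val xs = if (reverse) x.reverse else x
  // scanLeft yields [1, a, a * b, a * b * c] for [a, b, c].
  val scanned = xs.scanLeft(1.0)(_ * _)
  // Exclusive drops the last entry; inclusive drops the leading identity.
  val out = if (exclusive) scanned.init else scanned.tail
  if (reverse) out.reverse else out
}
```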

Input tensor.

INT32 tensor containing the axis along which to perform the cumulative product.

Boolean value indicating whether to perform an exclusive cumulative product.

Boolean value indicating whether to perform a reverse cumulative product.

Name for the created op.

Created op output.

The cumsum op computes the cumulative sum of the tensor along an axis.

By default, the op performs an inclusive cumulative sum, which means that the first element of the input is identical to the first element of the output:

cumsum([a, b, c]) ==> [a, a + b, a + b + c]

By setting the exclusive argument to true, an exclusive cumulative sum is performed instead:

cumsum([a, b, c], exclusive = true) ==> [0, a, a + b]

By setting the reverse argument to true, the cumulative sum is performed in the opposite direction:

cumsum([a, b, c], reverse = true) ==> [a + b + c, b + c, c]

This is more efficient than using separate Basic.reverse ops.

The reverse and exclusive arguments can also be combined:

cumsum([a, b, c], exclusive = true, reverse = true) ==> [b + c, c, 0]

Input tensor.

INT32 tensor containing the axis along which to perform the cumulative sum.

Boolean value indicating whether to perform an exclusive cumulative sum.

Boolean value indicating whether to perform a reverse cumulative sum.

Name for the created op.

Created op output.

Returns the variable getters in the current scope.

Returns the variable scope in the current scope.

Returns the variable store in the current scope.

The decodeBase64 op decodes web-safe base64-encoded strings.

The input may or may not have padding at the end. See encodeBase64 for more details on padding.

Web-safe means that the encoder uses - and _ instead of + and /.
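
The same web-safe alphabet is available in the JDK, which makes it easy to see what such input looks like outside of a graph. The sketch below uses java.util.Base64 and is only an illustration of the encoding; it is not how the op is implemented. The helper name decodeWebSafe is hypothetical.

```scala
import java.nio.charset.StandardCharsets
import java.util.Base64

// Illustrative web-safe base64 decoding with the JDK's URL-safe decoder,
// which accepts input with or without trailing '=' padding.
def decodeWebSafe(encoded: String): Array[Byte] =
  Base64.getUrlDecoder.decode(encoded)

// "aGVsbG8" is the unpadded web-safe encoding of the ASCII string "hello".
val decoded = new String(decodeWebSafe("aGVsbG8"), StandardCharsets.UTF_8)
```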

Input STRING tensor.

Name for the created op.

Created op output.

$OpDocParsingDecodeCSV

STRING tensor where each string is a record/row in the csv and all records should have the same format.

One tensor per column of the input record, with either a scalar default value for that column or empty if the column is required.

Output tensor data types.

Delimiter used to separate fields in a record.

If false, the op treats double quotation marks as regular characters inside the

string fields (ignoring RFC 4180, Section 2, Bullet 5).

Name for the created op.

Created op outputs.

$OpDocParsingDecodeJSONExample

STRING tensor where each string is a JSON object serialized according to the JSON mapping

of the Example proto.

Name for the created op.

Created op output.

$OpDocParsingDecodeRaw

STRING tensor interpreted as raw bytes. All the elements must have the same length.

Output tensor data type.

Boolean value indicating whether the input bytes are stored in little-endian order. Ignored

for dataType values that are stored in a single byte, like UINT8.

Name for the created op.

Created op output.

$OpDocParsingDecodeTensor

STRING tensor containing a serialized TensorProto proto.

Data type of the serialized tensor. The provided data type must match the data type of the serialized tensor and no implicit conversion will take place.

Name for the created op.

Created op output.

The depthToSpace op rearranges data from depth into blocks of spatial data.

More specifically, the op outputs a copy of the input tensor where values from the depth dimension are moved

in spatial blocks to the height and width dimensions. blockSize indicates the input block size and how the

data us moved:

blockSize * blockSize from depth are rearranged into non-overlapping blocks of size

blockSize x blockSize.inputDepth * blockSize, whereas the height is inputHeight * blockSize.blockSize * blockSize. That is, assuming that input is in the shape [batch, height, width, depth], the shape of the output will be:

[batch, height * blockSize, width * blockSize, depth / (block_size * block_size)].

This op is useful for resizing the activations between convolutions (but keeping all data), e.g., instead of pooling. It is also useful for training purely convolutional models.

Some examples:

    // === Example #1 ===
    // input = [[[[1, 2, 3, 4]]]] (shape = [1, 1, 1, 4])
    // blockSize = 2
    depthToSpace(input, blockSize) ==> [[[[1], [2]], [[3], [4]]]] (shape = [1, 2, 2, 1])

    // === Example #2 ===
    // input = [[[[1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12]]]] (shape = [1, 1, 1, 12])
    // blockSize = 2
    depthToSpace(input, blockSize) ==> [[[[1, 2, 3], [4, 5, 6]], [[7, 8, 9], [10, 11, 12]]]] (shape = [1, 2, 2, 3])

    // === Example #3 ===
    // input = [[[[ 1,  2,  3,  4],
    //            [ 5,  6,  7,  8]],
    //           [[ 9, 10, 11, 12],
    //            [13, 14, 15, 16]]]] (shape = [1, 2, 2, 4])
    // blockSize = 2
    depthToSpace(input, blockSize) ==> [[[[ 1], [ 2], [ 5], [ 6]],
                                         [[ 3], [ 4], [ 7], [ 8]],
                                         [[ 9], [10], [13], [14]],
                                         [[11], [12], [15], [16]]]] (shape = [1, 4, 4, 1])

4-dimensional input tensor with shape [batch, height, width, depth].

Block size which must be greater than 1.

Format of the input and output data.

Name for the created op.

Created op output.
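The index arithmetic behind the rearrangement can be written down directly. The following is a minimal reference sketch on nested Scala sequences in [batch, height, width, depth] layout; it is not the op's implementation, and `DepthToSpaceSketch` and `Tensor4` are names made up for this illustration.

```scala
// Hypothetical reference implementation on nested sequences, not the tf op.
object DepthToSpaceSketch {
  type Tensor4 = Seq[Seq[Seq[Seq[Int]]]]

  def depthToSpace(input: Tensor4, blockSize: Int): Tensor4 = {
    require(blockSize > 1, "blockSize must be greater than 1")
    val height = input.head.size
    val width = input.head.head.size
    val depth = input.head.head.head.size
    require(depth % (blockSize * blockSize) == 0,
      "depth must be divisible by blockSize * blockSize")
    val outputDepth = depth / (blockSize * blockSize)
    input.map { image =>
      Seq.tabulate(height * blockSize, width * blockSize, outputDepth) { (h, w, d) =>
        // Position inside the blockSize x blockSize block selects the
        // chunk of the input depth that the value is read from.
        val offset = ((h % blockSize) * blockSize + (w % blockSize)) * outputDepth + d
        image(h / blockSize)(w / blockSize)(offset)
      }
    }
  }
}
```

Applied to the inputs of the examples above, this sketch yields the same outputs, which is a convenient way to check one's understanding of the layout.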

The diag op constructs a diagonal tensor using the provided diagonal values.

Given a diagonal, the op returns a tensor with that diagonal and everything else padded with zeros. The diagonal is computed as follows:

Assume that diagonal has shape [D1, ..., DK]. Then the output tensor, output, is a rank-2K tensor with shape [D1, ..., DK, D1, ..., DK], where output(i1, ..., iK, i1, ..., iK) = diagonal(i1, ..., iK) and 0 everywhere else.

For example:

    // 'diagonal' is [1, 2, 3, 4]
    diag(diagonal) ==> [[1, 0, 0, 0],
                        [0, 2, 0, 0],
                        [0, 0, 3, 0],
                        [0, 0, 0, 4]]

This op is the inverse of diagPart.

Diagonal values, represented as a rank-K tensor, where K can be at most 3.

Name for the created op.

Created op output.
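For the rank-1 case (K = 1), the definition above reduces to a few lines. This is a minimal sketch on plain Scala sequences, with `DiagSketch` being a hypothetical name; higher-rank diagonals are not covered.

```scala
// Hypothetical reference implementation for K = 1, not the tf op.
object DiagSketch {
  // output(i, j) = diagonal(i) if i == j, and 0 everywhere else.
  def diag(diagonal: Seq[Int]): Seq[Seq[Int]] =
    Seq.tabulate(diagonal.size, diagonal.size) { (i, j) =>
      if (i == j) diagonal(i) else 0
    }
}
```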

The diagPart op returns the diagonal part of a tensor.

The op returns a tensor with the diagonal part of the input. The diagonal part is computed as follows:

Assume input has shape [D1, ..., DK, D1, ..., DK]. Then the output is a rank-K tensor with shape [D1, ..., DK], where output(i1, ..., iK) = input(i1, ..., iK, i1, ..., iK).

For example:

    // 'input' is [[1, 0, 0, 0],
    //             [0, 2, 0, 0],
    //             [0, 0, 3, 0],
    //             [0, 0, 0, 4]]
    diagPart(input) ==> [1, 2, 3, 4]

This op is the inverse of diag.

Rank-K input tensor, where K is either 2, 4, or 6.

Name for the created op.

Created op output.
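For a rank-2 input, extracting the diagonal part amounts to reading the entries at positions (i, i). The sketch below shows this on plain Scala sequences; `DiagPartSketch` is a hypothetical name, and rank-4 and rank-6 inputs are not covered.

```scala
// Hypothetical reference implementation for rank-2 inputs, not the tf op.
object DiagPartSketch {
  // output(i) = input(i, i); the input must be square.
  def diagPart(input: Seq[Seq[Int]]): Seq[Int] = {
    require(input.forall(_.size == input.size), "input must be a square matrix")
    input.indices.map(i => input(i)(i))
  }
}
```

Off-diagonal values are simply ignored, so the sketch also works for matrices that are not diagonal.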

The digamma op computes the derivative of the logarithm of the absolute value of the Gamma function applied element-wise on a tensor (i.e., the digamma or Psi function). I.e., y = \frac{d}{dx} \log{|\Gamma(x)|}.

Input tensor that must be one of the following types: HALF, FLOAT32, FLOAT64, INT32, INT64, COMPLEX64, or COMPLEX128.

Name for the created op.

Created op output.

The divide op divides two tensors element-wise. I.e., z = x / y.

NOTE: This op supports broadcasting. More information about broadcasting can be found [here](http://docs.scipy.org/doc/numpy/user/basics.broadcasting.html).

First input tensor that must be one of the following types: HALF, FLOAT32, FLOAT64, UINT8, INT8, INT16, INT32, INT64, COMPLEX64, or COMPLEX128.

Second input tensor that must be one of the following types: HALF, FLOAT32, FLOAT64, UINT8, INT8, INT16, INT32, INT64, COMPLEX64, or COMPLEX128.

Name for the created op.

Created op output.
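As a small illustration of what broadcasting means for z = x / y, the sketch below divides every row of a matrix by the same vector, which is the result one would expect when a [rows, cols] tensor is divided by a [cols] tensor. It covers only this one case, and `DivideSketch` is a hypothetical name.

```scala
// Hypothetical illustration of one broadcasting case, not the tf op.
object DivideSketch {
  // x has shape [rows, cols], y has shape [cols]; y is reused for every row.
  def divide(x: Seq[Seq[Double]], y: Seq[Double]): Seq[Seq[Double]] = {
    require(x.forall(_.size == y.size), "row length must match the size of y")
    x.map(row => row.zip(y).map { case (a, b) => a / b })
  }
}
```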

The dropout op computes a dropout layer.

With probability keepProbability, the op outputs the input element scaled up by 1 / keepProbability, otherwise it outputs 0. The scaling is such that the expected sum remains unchanged.

By default, each element is kept or dropped independently. If noiseShape is specified, it must be [broadcastable](http://docs.scipy.org/doc/numpy/user/basics.broadcasting.html) to the shape of input, and only dimensions with noiseShape(i) == input.shape(i) will make independent decisions. For example, if input.shape = [k, l, m, n] and noiseShape = [k, 1, 1, n], each k and n component will be kept independently and each l and m component will be kept or not kept together.

Input tensor.

Probability (i.e., number in the interval (0, 1]) that each element is kept.

If true, the outputs will be divided by the keep probability.

INT32 rank-1 tensor representing the shape for the randomly generated keep/drop flags.

Optional random seed, used to generate a random seed pair for the random number generator, when combined with the graph-level seed.

Name for the created op.

Created op output that has the same shape as input.
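The keep-and-rescale rule can be sketched on a flat sequence as follows. This is an illustration under simplifying assumptions: noiseShape is not modeled (every element decides independently), and `scala.util.Random` with a single seed stands in for the op's seed handling. The names `DropoutSketch` and `scaleOutput` are hypothetical.

```scala
import scala.util.Random

// Hypothetical reference implementation, not the tf op.
object DropoutSketch {
  def dropout(
      input: Seq[Double],
      keepProbability: Double,
      scaleOutput: Boolean = true,
      seed: Long = 0L): Seq[Double] = {
    require(keepProbability > 0.0 && keepProbability <= 1.0,
      "keepProbability must be in the interval (0, 1]")
    val random = new Random(seed)
    input.map { x =>
      // nextDouble() is uniform in [0, 1), so the element is kept with
      // probability keepProbability.
      val keep = random.nextDouble() < keepProbability
      if (!keep) 0.0
      else if (scaleOutput) x / keepProbability
      else x
    }
  }
}
```

With keepProbability = 1.0 the input is returned unchanged. With keepProbability = 0.5 every surviving element is doubled, so the expected sum matches that of the input.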

The dropout op computes a dropout layer.

With probability keepProbability, the op outputs the input element scaled up by 1 / keepProbability, otherwise it outputs 0. The scaling is such that the expected sum remains unchanged.

By default, each element is kept or dropped independently. If noiseShape is specified, it must be [broadcastable](http://docs.scipy.org/doc/numpy/user/basics.broadcasting.html) to the shape of input, and only dimensions with noiseShape(i) == input.shape(i) will make independent decisions. For example, if input.shape = [k, l, m, n] and noiseShape = [k, 1, 1, n], each k and n component will be kept independently and each l and m component will be kept or not kept together.

Input tensor.

Probability (i.e., scalar in the interval (0, 1]) that each element is kept.

If true, the outputs will be divided by the keep probability.

INT32 rank-1 tensor representing the shape for the randomly generated keep/drop flags.

Optional random seed, used to generate a random seed pair for the random number generator, when combined with the graph-level seed.

Name for the created op.

Created op output that has the same shape as input.

Creates an op that partitions data into numberOfPartitions tensors using indices from partitions.

For each index tuple js of size partitions.rank, the slice data[js, ...] becomes part of outputs[partitions[js]]. The slices with partitions[js] = i are placed in outputs[i] in lexicographic order of js, and the first dimension of outputs[i] is the number of entries in partitions equal to i. In detail:

    outputs(i).shape = [sum(partitions == i)] + data.shape(partitions.rank::)
    outputs(i) = pack(js.filter(partitions(_) == i).map(data(_, ---)))

data.shape must start with partitions.shape.

For example:

    // Scalar partitions.
    val outputs = dynamicPartition(
      data = Tensor(10, 20),
      partitions = 1,
      numberOfPartitions = 2)
    outputs(0) ==> []
    outputs(1) ==> [[10, 20]]

    // Vector partitions.
    val outputs = dynamicPartition(
      data = Tensor(10, 20, 30, 40, 50),
      partitions = [0, 0, 1, 1, 0],
      numberOfPartitions = 2)
    outputs(0) ==> [10, 20, 50]
    outputs(1) ==> [30, 40]

See dynamicStitch for an example on how to merge partitions back together.

Tensor to partition.

Tensor containing indices in the range [0, numberOfPartitions).

Number of partitions to output.

Name for the created op.

Created op outputs (i.e., partitions).
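For vector-shaped partitions, the partitioning rule can be reproduced with ordinary Scala collections. The sketch below is a reference illustration only; `DynamicPartitionSketch` is a hypothetical name and higher-rank partitions are not covered.

```scala
// Hypothetical reference implementation for rank-1 partitions, not the tf op.
object DynamicPartitionSketch {
  def dynamicPartition[T](
      data: Seq[T],
      partitions: Seq[Int],
      numberOfPartitions: Int): Seq[Seq[T]] = {
    require(data.size == partitions.size, "data and partitions must have the same length")
    require(partitions.forall(p => p >= 0 && p < numberOfPartitions),
      "partition indices must be in [0, numberOfPartitions)")
    // outputs(i) collects, in their original order, the elements whose
    // partition index equals i.
    Seq.tabulate(numberOfPartitions) { i =>
      data.zip(partitions).collect { case (value, p) if p == i => value }
    }
  }
}
```

A partition index that never occurs produces an empty output for that partition.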

The dynamicRNN op creates a recurrent neural network (RNN) specified by the provided RNN cell. The op performs fully dynamic unrolling of the RNN.

RNN cell to use.

Input to the RNN loop.

Initial state to use for the RNN, which is a sequence of tensors with shapes [batchSize, stateSize(i)], where i corresponds to the index in that sequence. Defaults to a zero state.

Boolean value indicating whether the inputs are provided in time-major format (i.e., have shape [time, batch, depth]) or in batch-major format (i.e., have shape [batch, time, depth]).

Number of RNN loop iterations allowed to run in parallel.

If true, GPU-CPU memory swapping support is enabled for the RNN loop.

Optional INT32 tensor with shape [batchSize] containing the sequence lengths for each row in the batch.

Name prefix to use for the created ops.

RNN cell tuple after the dynamic RNN loop is completed. The output of that tuple has a time axis prepended to the shape of each tensor and corresponds to the RNN outputs at each iteration in the loop. The state represents the RNN state at the end of the loop.

InvalidArgumentException If neither initialState nor zeroState is provided.

InvalidShapeException If the inputs or the provided sequence lengths have invalid or unknown shapes.
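Conceptually, the loop applies the cell once per time step, collecting the outputs along a new time axis and threading the state through. The sketch below shows that control flow on plain Scala values. It ignores batching, sequence lengths, and parallel iterations, and the cell is modeled as an ordinary function, which is an assumption of this example rather than the RNN cell type of this API.

```scala
// Hypothetical illustration of the unrolling loop, not the tf op.
object DynamicRnnSketch {
  // `cell` maps (input, state) to (output, nextState).
  def unroll[I, O, S](
      cell: (I, S) => (O, S),
      inputs: Seq[I],
      initialState: S): (Seq[O], S) = {
    val (outputs, finalState) =
      inputs.foldLeft((Vector.empty[O], initialState)) {
        case ((collected, state), input) =>
          val (output, nextState) = cell(input, state)
          (collected :+ output, nextState)
      }
    // One output per time step, plus the state after the last step.
    (outputs, finalState)
  }
}
```

For instance, a cell that keeps a running sum as both its output and its state turns the inputs 1, 2, 3 into the outputs 1, 3, 6 with a final state of 6.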

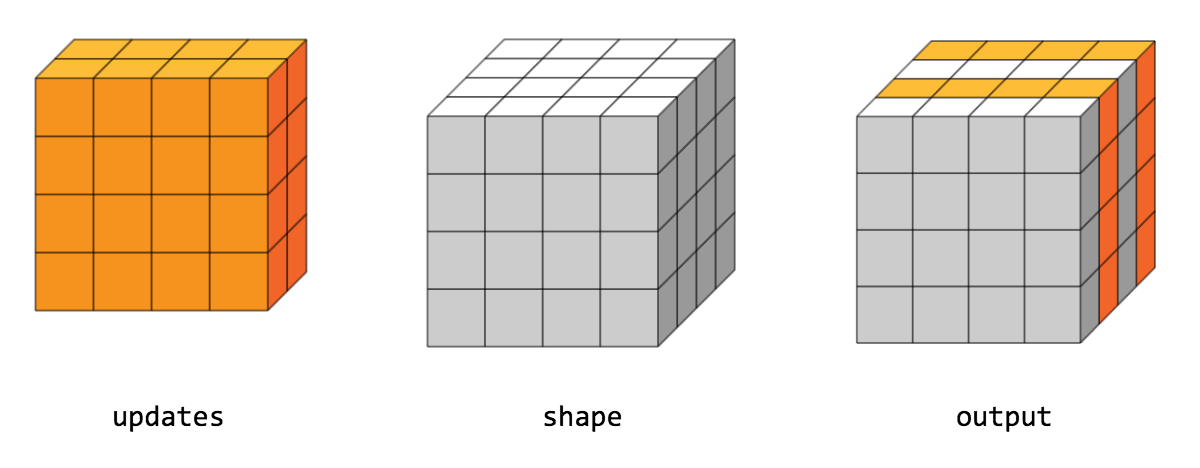

Creates an op that interleaves the values from the data tensors into a single tensor.

The op builds a merged tensor such that:

merged(indices(m)(i, ---, j), ---) = data(m)(i, ---, j, ---)

For example, if each indices(m) is scalar or vector, we have:

    // Scalar indices.
    merged(indices(m), ---) == data(m)(---)

    // Vector indices.
    merged(indices(m)(i), ---) == data(m)(i, ---)

Each data(i).shape must start with the corresponding indices(i).shape, and the rest of data(i).shape must be constant w.r.t. i. That is, we must have data(i).shape = indices(i).shape + constant. In terms of this constant, the output shape is merged.shape = [max(indices) + 1] + constant.

Values are merged in order, so if an index appears in both indices(m)(i) and indices(n)(j) for (m, i) < (n, j), the slice data(n)(j) will appear in the merged result.
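The merge rule, including the "later value wins" behavior for repeated indices, can be sketched for vector indices and scalar slices as follows. This is a reference illustration only: `DynamicStitchSketch` is a hypothetical name, and the requirement that every output position is covered is a simplification made for this example.

```scala
// Hypothetical reference implementation for rank-1 indices, not the tf op.
object DynamicStitchSketch {
  def dynamicStitch[T](indices: Seq[Seq[Int]], data: Seq[Seq[T]]): Seq[T] = {
    require(indices.size == data.size, "one data sequence is needed per indices sequence")
    require(indices.zip(data).forall { case (is, ds) => is.size == ds.size },
      "each data(m) must have the same length as indices(m)")
    val pairs = indices.flatten.zip(data.flatten)
    require(pairs.nonEmpty && pairs.forall(_._1 >= 0), "indices must be non-negative")
    // toMap keeps the last value for a repeated key, so later (m, i)
    // pairs overwrite earlier ones.
    val merged = pairs.toMap
    // The output has one slot per index from 0 up to the largest index.
    val size = pairs.map(_._1).max + 1
    require((0 until size).forall(merged.contains),
      "simplification: every output position must be covered")
    Seq.tabulate(size)(merged)
  }
}
```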

For example: